VectorizedMultiAgentSimulator

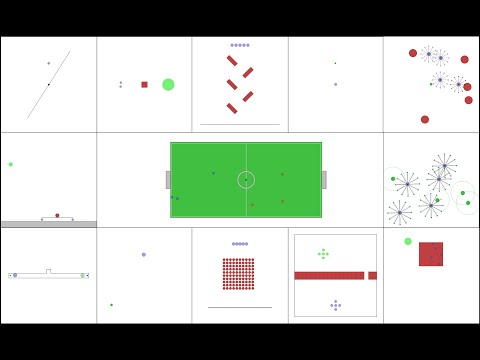

VMAS is a vectorized differentiable simulator designed for efficient Multi-Agent Reinforcement Learning benchmarking. It consists of a vectorized 2D physics engine written in PyTorch and a set of challenging multi-robot scenarios. Additional scenarios can be implemented through a simple and modular interface.


VectorizedMultiAgentSimulator (VMAS)

<a href="https://pypi.org/project/vmas"><img src="https://img.shields.io/pypi/v/vmas" alt="pypi version"></a>

Note: We have released BenchMARL, a benchmarking library where you can train VMAS tasks using TorchRL! Check out how easy it is to use it.

Welcome to VMAS!

This repository contains the code for the Vectorized Multi-Agent Simulator (VMAS).

VMAS is a vectorized differentiable simulator designed for efficient MARL benchmarking. It consists of a fully differentiable vectorized 2D physics engine written in PyTorch and a set of challenging multi-robot scenarios. Scenario creation is simple and modular to encourage contributions. VMAS simulates agents and landmarks of different shapes and supports rotations, elastic collisions, joints, and custom gravity. Agents use holonomic motion models to keep the simulation simple. Custom sensors such as LIDARs are available, and the simulator supports inter-agent communication. Vectorization in PyTorch lets VMAS run simulations in batches, scaling seamlessly to tens of thousands of parallel environments on accelerated hardware. VMAS has an interface compatible with OpenAI Gym, Gymnasium, RLlib, and TorchRL and its MARL training library, BenchMARL, enabling out-of-the-box integration with a wide range of RL algorithms. The implementation is inspired by OpenAI's MPE. Alongside its own scenarios, VMAS includes vectorized ports of all the MPE scenarios.
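To illustrate what batched simulation and differentiability mean in practice, here is a minimal PyTorch sketch of a batched holonomic point-mass update. It is not VMAS's physics engine: the function name `holonomic_step`, the semi-implicit Euler integration, the damping term, and the `[num_envs, n_agents, 2]` tensor layout are all illustrative assumptions. It shows how a single set of tensor operations advances every agent in every environment at once, and how gradients can flow from the resulting positions back to the applied forces.

```python
import torch


def holonomic_step(pos, vel, force, mass=1.0, dt=0.1, damping=0.25):
    """Advance a batch of holonomic point masses by one step (hypothetical sketch).

    All tensors have shape [num_envs, n_agents, 2]; every environment and agent
    is updated by the same vectorized operations, with no Python loop over them.
    """
    # Holonomic model: the 2D force acts directly on both axes.
    vel = vel * (1.0 - damping) + (force / mass) * dt
    # Semi-implicit Euler: integrate position with the updated velocity.
    pos = pos + vel * dt
    return pos, vel


num_envs, n_agents = 4096, 3
device = "cuda" if torch.cuda.is_available() else "cpu"

pos = torch.zeros(num_envs, n_agents, 2, device=device)
vel = torch.zeros_like(pos)
# requires_grad lets us differentiate the rollout with respect to the forces.
force = torch.ones(num_envs, n_agents, 2, device=device, requires_grad=True)

for _ in range(10):
    pos, vel = holonomic_step(pos, vel, force)

# A scalar objective over all environments, back-propagated to the forces.
loss = pos.square().sum()
loss.backward()
print(pos.shape, force.grad.shape)
```

Batching over a leading environment dimension is what allows the same code to scale from a handful of environments to thousands on a GPU.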

Paper

The arXiv paper can be found here.

If you use VMAS in your research, cite it using:

```bibtex
@article{bettini2022vmas,
  title = {VMAS: A Vectorized Multi-Agent Simulator for Collective Robot Learning},
  author = {Bettini, Matteo and Kortvelesy, Ryan and Blumenkamp, Jan and Prorok, Amanda},
  year = {2022},
  journal = {The 16th International Symposium on Distributed Autonomous Robotic Systems},
  publisher = {Springer}
}
```

Video

Watch the presentation video of VMAS, showing its structure, scenarios, and experiments.

Watch the talk at DARS 2022 about VMAS.

Watch the lecture on creating a custom scenario in VMAS and training it in BenchMARL.

Table of contents

- VectorizedMultiAgentSimulator (VMAS)

How to use

Notebooks

Using a VMAS environment. Here is a simple notebook that you can run to create, step, and render any scenario in VMAS. It reproduces the use_vmas_env.py script in the examples folder.

Creating a VMAS scenario and training it in BenchMARL. We will create a scenario where multiple robots with different embodiments need to navigate to their goals while avoiding each other (as well as obstacles) and train it using MAPPO and MLP/GNN policies.

Training VMAS in BenchMARL (suggested). In this notebook, we show how to use VMAS in BenchMARL, TorchRL's MARL training library.

Training VMAS in TorchRL. In this notebook, available in the TorchRL docs, we show how to use any VMAS scenario in TorchRL. It will guide you through the full pipeline needed to train agents using MAPPO/IPPO.

Training competitive VMAS MPE in TorchRL. In this notebook, available in the TorchRL docs, we show how to solve a Competitive Multi-Agent Reinforcement Learning (MARL) problem using MADDPG/IDDPG.

Training VMAS in RLlib. In this notebook, we show how to use any VMAS scenario in RLlib. It reproduces the rllib.py script in the examples folder.

Install

To install the simulator, you can use pip to get the latest release:

```bash
pip install vmas
```

If you want to install the current master version (more up to date than the latest release), you can do:

```bash
git clone https://github.com/proroklab/VectorizedMultiAgentSimulator.git
cd VectorizedMultiAgentSimulator
pip install -e .
```

By default, vmas installs only its core requirements. To enable the Gymnasium and RLlib wrappers, rendering, or testing, you can install the corresponding optional extras:

```bash
# install gymnasium for gymnasium wrappers
pip install "vmas[gymnasium]"

# install rllib for rllib wrapper
pip install "vmas[rllib]"

# install rendering dependencies
pip install "vmas[render]"

# install testing dependencies
pip install "vmas[test]"

# install all dependencies
pip install "vmas[all]"
```

You can also install the following training libraries:

```bash
pip install benchmarl # For training in BenchMARL
pip install torchrl # For training in TorchRL
pip install "ray[rllib]"==2.1.0 # For training in RLlib. We support versions "ray[rllib]<=2.2,>=1.13"
```
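After installing extras or training libraries, a quick way to see which of them are available in the current interpreter is a small standard-library check such as the one below. The mapping from pip distribution names to import names (for example `ray[rllib]` provides the `ray` module) is an assumption of this sketch, and the helper name `report_optional_deps` is hypothetical.

```python
from importlib import metadata, util

# pip distribution name -> top-level module it provides (assumed mapping).
OPTIONAL_PACKAGES = {
    "vmas": "vmas",
    "gymnasium": "gymnasium",
    "ray": "ray",          # installed via "ray[rllib]"
    "torchrl": "torchrl",
    "benchmarl": "benchmarl",
}


def report_optional_deps(packages=OPTIONAL_PACKAGES):
    """Return {distribution: version, "unknown", or None} for each package.

    find_spec() locates top-level modules without importing them, so this
    check stays fast even for heavy libraries such as ray.
    """
    report = {}
    for dist, module in packages.items():
        if util.find_spec(module) is None:
            report[dist] = None  # not importable in this interpreter
            continue
        try:
            report[dist] = metadata.version(dist)
        except metadata.PackageNotFoundError:
            report[dist] = "unknown"  # importable, but no installed metadata
    return report


if __name__ == "__main__":
    for name, version in report_optional_deps().items():
        print(f"{name:10s} {version or 'not installed'}")
```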

Run

To use the simulator, simply create an environment by passing the name of the scenario you want (from the scenarios folder) to the make_env function.

The function arguments are explained in the documentation. The function returns an environment object with the VMAS interface:

Here is an example:

env = vmas.make_env(

scenario="waterfall", # can be scenario name or B
