SymSpell

SymSpell: 1 million times faster spelling correction & fuzzy search through Symmetric Delete spelling correction algorithm

Install / Use

/learn @wolfgarbe/SymSpellREADME

SymSpell<br>

Spelling correction & Fuzzy search: 1 million times faster through Symmetric Delete spelling correction algorithm

The Symmetric Delete spelling correction algorithm reduces the complexity of edit candidate generation and dictionary lookup for a given Damerau-Levenshtein distance. It is six orders of magnitude faster (than the standard approach with deletes + transposes + replaces + inserts) and language independent.

Opposite to other algorithms only deletes are required, no transposes + replaces + inserts. Transposes + replaces + inserts of the input term are transformed into deletes of the dictionary term. Replaces and inserts are expensive and language dependent: e.g. Chinese has 70,000 Unicode Han characters!

The speed comes from the inexpensive delete-only edit candidate generation and the pre-calculation.<br> An average 5 letter word has about 3 million possible spelling errors within a maximum edit distance of 3,<br> but SymSpell needs to generate only 25 deletes to cover them all, both at pre-calculation and at lookup time. Magic!

If you like SymSpell, try SeekStorm - a sub-millisecond full-text search library & multi-tenancy server in Rust (Open Source).

<br>Copyright (c) 2025 Wolf Garbe

Version: 6.7.3

Author: Wolf Garbe <wolf.garbe@seekstorm.com>

Maintainer: Wolf Garbe <wolf.garbe@seekstorm.com>

URL: https://github.com/wolfgarbe/symspell

Description: https://seekstorm.com/blog/1000x-spelling-correction/

MIT License

Copyright (c) 2025 Wolf Garbe

Permission is hereby granted, free of charge, to any person obtaining a copy of this software and associated

documentation files (the "Software"), to deal in the Software without restriction, including without limitation

the rights to use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies of the Software,

and to permit persons to whom the Software is furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

https://opensource.org/licenses/MIT

Single word spelling correction

Lookup provides a very fast spelling correction of single words.

- A Verbosity parameter allows to control the number of returned results:<br> Top: Top suggestion with the highest term frequency of the suggestions of smallest edit distance found.<br> Closest: All suggestions of smallest edit distance found, suggestions ordered by term frequency.<br> All: All suggestions within maxEditDistance, suggestions ordered by edit distance, then by term frequency.

- The Maximum edit distance parameter controls up to which edit distance words from the dictionary should be treated as suggestions.

- The required Word frequency dictionary can either be directly loaded from text files (LoadDictionary) or generated from a large text corpus (CreateDictionary).

Applications

- Spelling correction,

- Query correction (10–15% of queries contain misspelled terms),

- Chatbots,

- OCR post-processing,

- Automated proofreading.

- Fuzzy search & approximate string matching

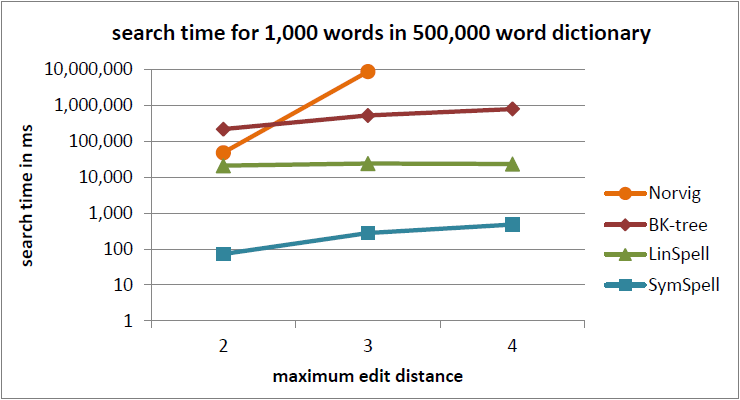

Performance (single term)

0.033 milliseconds/word (edit distance 2) and 0.180 milliseconds/word (edit distance 3) (single core on 2012 Macbook Pro)<br>

<br><br>

1,870 times faster than BK-tree (see Benchmark 1: dictionary size=500,000, maximum edit distance=3, query terms with random edit distance = 0...maximum edit distance, verbose=0)<br><br>

1 million times faster than Norvig's algorithm (see Benchmark 2: dictionary size=29,157, maximum edit distance=3, query terms with fixed edit distance = maximum edit distance, verbose=0)<br>

<br><br>

1,870 times faster than BK-tree (see Benchmark 1: dictionary size=500,000, maximum edit distance=3, query terms with random edit distance = 0...maximum edit distance, verbose=0)<br><br>

1 million times faster than Norvig's algorithm (see Benchmark 2: dictionary size=29,157, maximum edit distance=3, query terms with fixed edit distance = maximum edit distance, verbose=0)<br>

Blog Posts: Algorithm, Benchmarks, Applications

1000x Faster Spelling Correction algorithm<br> Fast approximate string matching with large edit distances in Big Data<br> Very fast Data cleaning of product names, company names & street names<br> Sub-millisecond compound aware automatic spelling correction<br> SymSpell vs. BK-tree: 100x faster fuzzy string search & spell checking<br> Fast Word Segmentation for noisy text<br> The Pruning Radix Trie — a Radix trie on steroids<br>

Compound aware multi-word spelling correction

LookupCompound supports compound aware automatic spelling correction of multi-word input strings.

1. Compound splitting & decompounding

Lookup() assumes every input string as single term. LookupCompound also supports compound splitting / decompounding with three cases:

- mistakenly inserted space within a correct word led to two incorrect terms

- mistakenly omitted space between two correct words led to one incorrect combined term

- multiple input terms with/without spelling errors

Splitting errors, concatenation errors, substitution errors, transposition errors, deletion errors and insertion errors can by mixed within the same word.

2. Automatic spelling correction

- Large document collections make manual correction infeasible and require unsupervised, fully-automatic spelling correction.

- In conventional spelling correction of a single token, the user is presented with multiple spelling correction suggestions. <br>For automatic spelling correction of long multi-word text the algorithm itself has to make an educated choice.

Examples:

- whereis th elove hehad dated forImuch of thepast who couqdn'tread in sixthgrade and ins pired him

+ where is the love he had dated for much of the past who couldn't read in sixth grade and inspired him (9 edits)

- in te dhird qarter oflast jear he hadlearned ofca sekretplan

+ in the third quarter of last year he had learned of a secret plan (9 edits)

- the bigjest playrs in te strogsommer film slatew ith plety of funn

+ the biggest players in the strong summer film slate with plenty of fun (9 edits)

- Can yu readthis messa ge despite thehorible sppelingmsitakes

+ can you read this message despite the horrible spelling mistakes (9 edits)

Performance (compounds)

0.2 milliseconds / word (edit distance 2) 5000 words / second (single core on 2012 Macbook Pro)

Word Segmentation of noisy text

WordSegmentation divides a string into words by inserting missing spaces at appropriate positions.<br>

- Misspelled words are corrected and do not prevent segmentation.<br>

- Existing spaces are allowed and considered for optimum segmentation.<br>

- SymSpell.WordSegmentation uses a Triangular Matrix approach instead of the conventional Dynamic Programming: It uses an array instead of a dictionary for memoization, loops instead of recursion and incrementally optimizes prefix strings instead of remainder strings.<br>

- The Triangular Matrix approach is faster than the Dynamic Programming approach. It has a lower memory consumption, better scaling (constant O(1) memory consumption vs. linear O(n)) and is GC friendly.

- While each string of length n can be segmented into 2^n−1 possible compositions,<br> SymSpell.WordSegmentation has a linear runtime O(n) to find the optimum composition.

Examples:

- thequickbrownfoxjumpsoverthelazydog

+ the quick brown fox jumps over the lazy dog

- itwasabrightcolddayinaprilandtheclockswerestrikingthirteen

+ it was a bright cold day in april and the clocks were striking thirteen

- itwasthebestoftimesitwastheworstoftimesitwastheageofwisdomitwastheageoffoolishness

+ it was the best of times it was the worst of times it was the age of wisdom it was the age of foolishness

Applications:

- Word Segmentation for CJK languages for Indexing Spelling correction, Machine translation, Language understanding, Sentiment analysis

- Normalizing English compound nouns for search & indexing (e.g. ice box = ice-box = icebox; pig sty = pig-sty = pigsty)

- Word segmentation for compounds if both original word and split word parts should be indexed.

- Correction of missing spaces caused by Typing errors.

- Correction of Conversion errors: spaces between word may get lost e.g. when removing line breaks.

- Correction of OCR errors: inferior quality of original documents or handwritten text may prevent that all spaces are recognized.

- Correction of Transmission errors: during the transmission over noisy channels spaces can get lost or spelling errors introduced.

- Keyword extraction from URL addresses, domain names, #hashtags, table column descriptions or programming variables written without spaces.

- For password analysis, the extraction of terms from passwords can be required.

- For Speech recognition, if spaces between words are not properly recognized in spoken language.

- Automatic CamelCasing of programming variables.

- Applications beyond N

Related Skills

node-connect

336.9kDiagnose OpenClaw node connection and pairing failures for Android, iOS, and macOS companion apps

frontend-design

83.0kCreate distinctive, production-grade frontend interfaces with high design quality. Use this skill when the user asks to build web components, pages, or applications. Generates creative, polished code that avoids generic AI aesthetics.

openai-whisper-api

336.9kTranscribe audio via OpenAI Audio Transcriptions API (Whisper).

commit-push-pr

83.0kCommit, push, and open a PR