ThymeBoost

Forecasting with Gradient Boosted Time Series Decomposition


ThymeBoost v0.1.16

Changes with 0.1.16:

New trend estimators: 'lbf' and 'decision_tree'. 'lbf' stands for linear basis functions and fits a linear changepoint model with a similar vibe to Prophet's. Boosting this estimator makes the fitted trend much smoother.

ThymeBoost combines time series decomposition with gradient boosting to provide a flexible mix-and-match time series framework for forecasting. At the most granular level are the trend/level (going forward this is just referred to as 'trend') models, seasonal models, and endogenous models. These are used to approximate the respective components at each 'boosting round' and sequential rounds are fit on residuals in usual boosting fashion.
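To make the "fit each component on residuals" idea concrete, here is a minimal NumPy sketch of a boosted decomposition. It is not ThymeBoost's implementation: the helper names (`fit_linear_trend`, `fit_seasonal_means`, `boosted_decomposition`), the per-position seasonal means, and the fixed number of rounds are all assumptions chosen for illustration.

```python
import numpy as np


def fit_linear_trend(residual):
    """Least-squares straight line through the residual."""
    t = np.arange(len(residual))
    slope, intercept = np.polyfit(t, residual, 1)
    return slope * t + intercept


def fit_seasonal_means(residual, period):
    """Seasonal component as the mean residual at each position in the cycle.

    Assumes the series is at least one full period long.
    """
    position = np.arange(len(residual)) % period
    means = np.array([residual[position == k].mean() for k in range(period)])
    return means[position]


def boosted_decomposition(y, period, rounds=3):
    """Alternate trend and seasonal fits, each on the current residual."""
    y = np.asarray(y, dtype=float)
    trend = np.full_like(y, np.median(y))  # initial round: a constant level
    seasonality = np.zeros_like(y)
    for _ in range(rounds):
        # Trend learner sees whatever the current components do not explain.
        trend += fit_linear_trend(y - trend - seasonality)
        # Seasonal learner then fits what the updated trend leaves behind.
        seasonality += fit_seasonal_means(y - trend - seasonality, period)
    return trend, seasonality


t = np.arange(100)
y = 0.5 * t + 10 * np.sin(2 * np.pi * t / 25)
trend, seasonality = boosted_decomposition(y, period=25)
print(np.max(np.abs(y - trend - seasonality)))
```

Each round only has to explain what earlier rounds missed, which is why the leftover residual shrinks as rounds are added.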

Documentation is under construction at: https://thymeboost.readthedocs.io/en/latest/

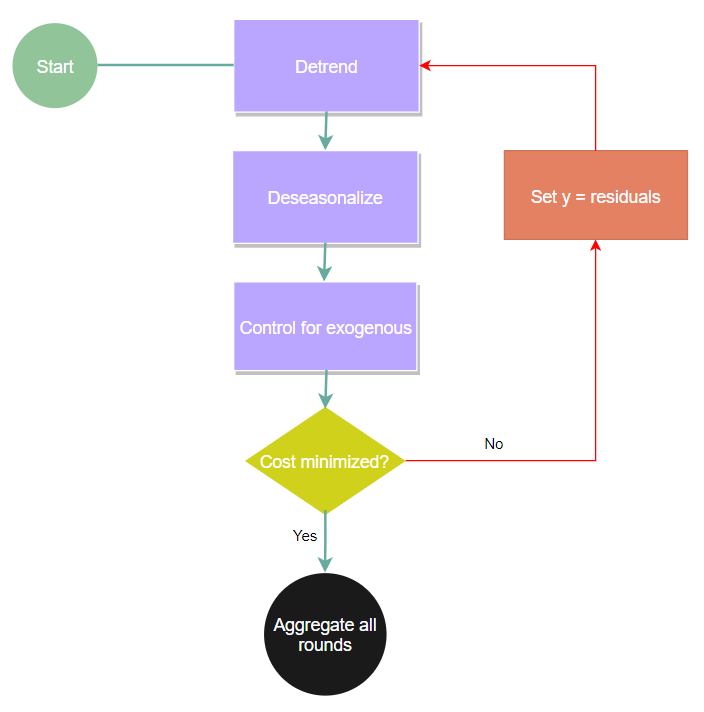

Basic flow of the algorithm:

Quick Start.

pip install ThymeBoost

Some basic examples:

We start with a very simple example: a trend plus seasonality plus noise.

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

from ThymeBoost import ThymeBoost as tb

sns.set_style('darkgrid')

# Create a random series with seasonality and a slight trend

seasonality = np.cos(np.arange(1, 101)) * 10 + 50

np.random.seed(100)

true = np.linspace(-1, 1, 100)

noise = np.random.normal(0, 1, 100)

y = true + noise + seasonality

plt.plot(y)

plt.show()

First we will build the ThymeBoost model object:

boosted_model = tb.ThymeBoost(approximate_splits=True,

n_split_proposals=25,

verbose=1,

cost_penalty=.001)

The arguments passed here are also the defaults. The most important ones are approximate_splits, which controls whether candidate changepoints are approximated, and n_split_proposals, which sets how many candidate splits to propose. With approximate_splits=False, ThymeBoost exhaustively tries every data point as a split when searching for changepoints. If we are not looking for changepoints, these settings are ignored.
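The trade-off between exhaustive and approximate split search can be sketched in plain NumPy. This is only an illustration under stated assumptions: the helpers `linear_fit` and `best_split` are hypothetical, the evenly spaced proposals are a stand-in, and ThymeBoost's own proposal strategy may well differ.

```python
import numpy as np


def linear_fit(segment):
    """Least-squares line fitted to one segment of the series."""
    t = np.arange(len(segment))
    slope, intercept = np.polyfit(t, segment, 1)
    return slope * t + intercept


def best_split(y, candidates):
    """Return the candidate split (and its MSE) with the lowest two-segment cost."""
    best_index, best_cost = None, np.inf
    for split in candidates:
        fitted = np.concatenate([linear_fit(y[:split]), linear_fit(y[split:])])
        cost = np.mean((y - fitted) ** 2)
        if cost < best_cost:
            best_index, best_cost = int(split), cost
    return best_index, best_cost


rng = np.random.default_rng(0)
# Two trend lines joined at index 100, plus noise.
y = np.concatenate([np.linspace(-1, 1, 100), np.linspace(1, 50, 100)])
y = y + rng.normal(0, 1, 200)

exhaustive = range(2, len(y) - 2)                         # every interior point
approximate = np.linspace(2, len(y) - 2, 25).astype(int)  # only 25 proposals

split_exhaustive, _ = best_split(y, exhaustive)
split_approx, _ = best_split(y, approximate)
print(split_exhaustive, split_approx)
```

The approximate search fits far fewer candidate models, at the price of only being able to land on one of the proposed indices.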

ThymeBoost uses a standard fit => predict procedure. Everything passed to the fit method is converted to an itertools.cycle object inside ThymeBoost; these are referred to as 'generator' parameters from here on. This might not make sense yet, but it is shown in the examples further on!

output = boosted_model.fit(y,

trend_estimator='linear',

seasonal_estimator='fourier',

seasonal_period=25,

split_cost='mse',

global_cost='maicc',

fit_type='global')

We pass the input time series and the parameters used to fit. The parameters most specific to ThymeBoost are the cost functions: split_cost scores each candidate split, and global_cost controls how many boosting rounds to run. Additionally, fit_type='global' means that we are NOT looking for changepoints and simply fit our trend_estimator globally.

With verbose=1, ThymeBoost prints some relevant information for us.

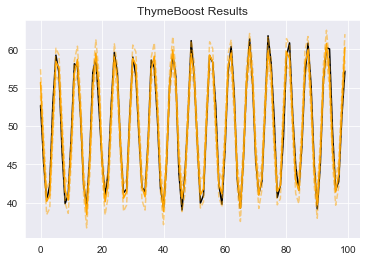

Now that we have fitted our series, we can take a look at our results:

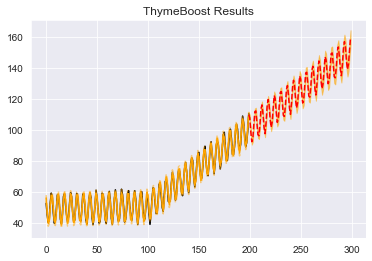

boosted_model.plot_results(output)

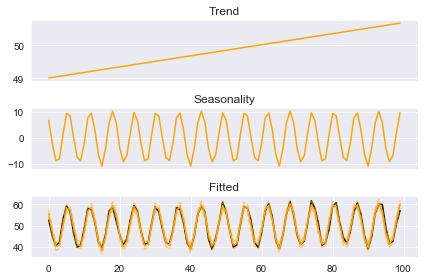

The fit looks correct enough, but let's take a look at the individual components we fitted.

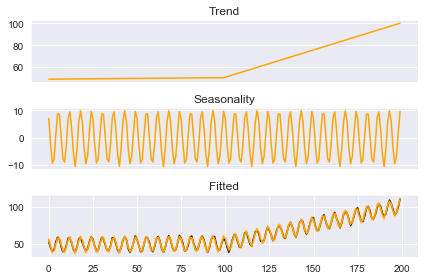

boosted_model.plot_components(output)

Alright, the decomposition looks reasonable as well, but let's complicate the task by adding a changepoint.

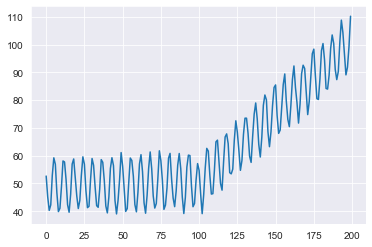

Adding a changepoint

true = np.linspace(1, 50, 100)

noise = np.random.normal(0, 1, 100)

y = np.append(y, true + noise + seasonality)

plt.plot(y)

plt.show()

In order to fit this we will change fit_type='global' to fit_type='local'. Let's see what happens.

boosted_model = tb.ThymeBoost(

approximate_splits=True,

n_split_proposals=25,

verbose=1,

cost_penalty=.001,

)

output = boosted_model.fit(y,

trend_estimator='linear',

seasonal_estimator='fourier',

seasonal_period=25,

split_cost='mse',

global_cost='maicc',

fit_type='local')

predicted_output = boosted_model.predict(output, 100)

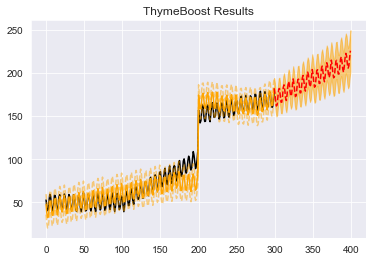

Here we add the predict method, which takes the fitted results as well as the forecast horizon. You will notice that the printout now states we are fitting locally and that we do an additional round of boosting. Let's plot the results and see whether the new round was ThymeBoost picking up the changepoint.

boosted_model.plot_results(output, predicted_output)

Ok, cool. It looks like this worked about as expected. We did have 1 wasted round, where ThymeBoost just made a slight adjustment at split 80, but that can be fixed, as you will see!

Once again looking at the components:

boosted_model.plot_components(output)

There is a kink in the trend right around 100, as expected.

Let's further complicate this series.

Adding a large jump

# Pretty complicated model

true = np.linspace(1, 20, 100) + 100

noise = np.random.normal(0, 1, 100)

y = np.append(y, true + noise + seasonality)

plt.plot(y)

plt.show()

So here we have 3 distinct trend lines and one large shift upward. Overall, this is pretty nasty, and automatically fitting it with any model (including ThymeBoost) can give extremely wonky results.

But... let's try anyway. Here we will use the 'generator' variables. As mentioned before, everything passed to the fit method is a generator variable. This means that we can pass a list for a parameter, and that list will be cycled through at each boosting round. So if we pass trend_estimator=['mean', 'linear'], then after the initial trend estimation using the median we use mean, followed by linear, then mean, then linear, and so on until boosting terminates. We can also use this to approximate a potentially complex seasonality, just by passing a list of the periods the complex seasonality may contain. Let's fit with these generator variables and pay close attention to the printout, as it shows what ThymeBoost is doing at each round.
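The cycling behaviour itself is easy to see with the standard library. The sketch below only illustrates how itertools.cycle repeats a list; the `as_cycle` helper and the variable names are hypothetical and are not taken from ThymeBoost's source.

```python
from itertools import cycle


def as_cycle(param):
    """Wrap a scalar or a list so it can be read once per boosting round."""
    return cycle(param if isinstance(param, list) else [param])


trend_estimator = as_cycle(['mean', 'linear'])
seasonal_period = as_cycle([25, 0])
split_cost = as_cycle('mae')  # a scalar simply repeats forever

# Four successive reads from each parameter.
trend_reads = [next(trend_estimator) for _ in range(4)]
period_reads = [next(seasonal_period) for _ in range(4)]
cost_reads = [next(split_cost) for _ in range(4)]

print(trend_reads)   # ['mean', 'linear', 'mean', 'linear']
print(period_reads)  # [25, 0, 25, 0]
print(cost_reads)    # ['mae', 'mae', 'mae', 'mae']
```

Because a scalar and a list go through the same wrapper, a single value and a per-round schedule can be passed the same way.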

boosted_model = tb.ThymeBoost(

approximate_splits=True,

verbose=1,

cost_penalty=.001,

)

output = boosted_model.fit(y,

trend_estimator=['mean'] + ['linear']*20,

seasonal_estimator='fourier',

seasonal_period=[25, 0],

split_cost='mae',

global_cost='maicc',

fit_type='local',

connectivity_constraint=True,

)

predicted_output = boosted_model.predict(output, 100)

The log tells us what we need to know:

********** Round 1 **********

Using Split: None

Fitting initial trend globally with trend model:

median()

seasonal model:

fourier(10, False)

cost: 2406.7734967780552

********** Round 2 **********

Using Split: 200

Fitting local with trend model:

mean()

seasonal model:

None

cost: 1613.03414289753

********** Round 3 **********

Using Split: 174

Fitting local with trend model:

linear((1, None))

seasonal model:

fourier(10, False)

cost: 1392.923553270366

********** Round 4 **********

Using Split: 274

Fitting local with trend model:

linear((1, None))

seasonal model:

None

cost: 1384.306737800115

==============================

Boosting Terminated

Using round 4

The initial round for trend is always the same (this idea is pretty core to the boosting framework), but after that we fit with mean, and the next 2 rounds are fit with linear estimation. The complex seasonality works exactly as we expect, alternating between the 2 periods we gave it, where a period of 0 means no seasonality estimation occurs.

Let's take a look at the results:

boosted_model.plot_results(output, predicted_output)

Hmmm, that looks very wonky.

But since we used a mean estimator, we are saying that there is a change in the overall level of the series. That's not exactly true: by appending that last series with just another trend line, we essentially changed both the slope and the intercept of the series.
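The difference between a pure level shift and a change in slope and intercept can be checked numerically on a segment shaped like the last one we appended. This is a standalone NumPy sketch, not a ThymeBoost call; the seed and variable names are arbitrary choices for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
t = np.arange(100)
# Same shape as the final appended segment: a trend line lifted by 100.
segment = np.linspace(1, 20, 100) + 100 + rng.normal(0, 1, 100)

# A mean estimator can only move the level of the segment.
mean_fit = np.full(100, segment.mean())

# A linear estimator can adjust both the slope and the intercept.
slope, intercept = np.polyfit(t, segment, 1)
linear_fit = slope * t + intercept

mse_mean = np.mean((segment - mean_fit) ** 2)
mse_linear = np.mean((segment - linear_fit) ** 2)
print(mse_mean, mse_linear)
```

The mean fit leaves the whole within-segment trend in the residual, while the linear fit leaves roughly just the noise.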

To account for this, let's relax connectivity constraints and just try linear estimators. Once again, EVERYTHING passed to the fit method is a generator variable so we will relax the connectivity constraint for the first linear fit to hopefully account