Katana

A next-generation crawling and spidering framework.


Features

- Fast and fully configurable web crawling

- Standard and Headless mode

- JavaScript parsing / crawling

- Customizable automatic form filling

- Scope control - Preconfigured field / Regex

- Customizable output - Preconfigured fields

- INPUT - STDIN, URL and LIST

- OUTPUT - STDOUT, FILE and JSON
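The three input modes in the list above could be exercised roughly as follows. This is a minimal sketch, not taken from the project: `https://example.com` and `targets.txt` are placeholders, and `run` is a hypothetical helper that only prints each command unless `DRY_RUN=0` is set, so nothing is crawled by accident.

```shell
#!/bin/sh
# Sketch: the three input modes (URL, LIST, STDIN).
# `run` is a hypothetical helper: it prints the command unless DRY_RUN=0.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then
    "$@"
  else
    printf '%s\n' "$*"
  fi
}

TARGET="https://example.com"   # placeholder target
LIST_FILE="targets.txt"        # placeholder file, one URL per line

# URL: pass a single target with -u
run katana -u "$TARGET"

# LIST: pass a file of targets with -list
run katana -list "$LIST_FILE"

# STDIN: pipe targets into katana
echo "$TARGET" | run katana
```

Running the script with `DRY_RUN=0` executes the commands instead of printing them, which assumes katana is already installed and on `PATH`.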

Installation

katana requires Go 1.25+ to install successfully. If you encounter any installation issues, we recommend trying with the latest available version of Go, as the minimum required version may have changed. Run the command below or download a pre-compiled binary from the release page.

CGO_ENABLED=1 go install github.com/projectdiscovery/katana/cmd/katana@latest

More options to install / run katana -

<details> <summary>Docker</summary>

To install / update the docker image to the latest tag -

docker pull projectdiscovery/katana:latest

To run katana in standard mode using docker -

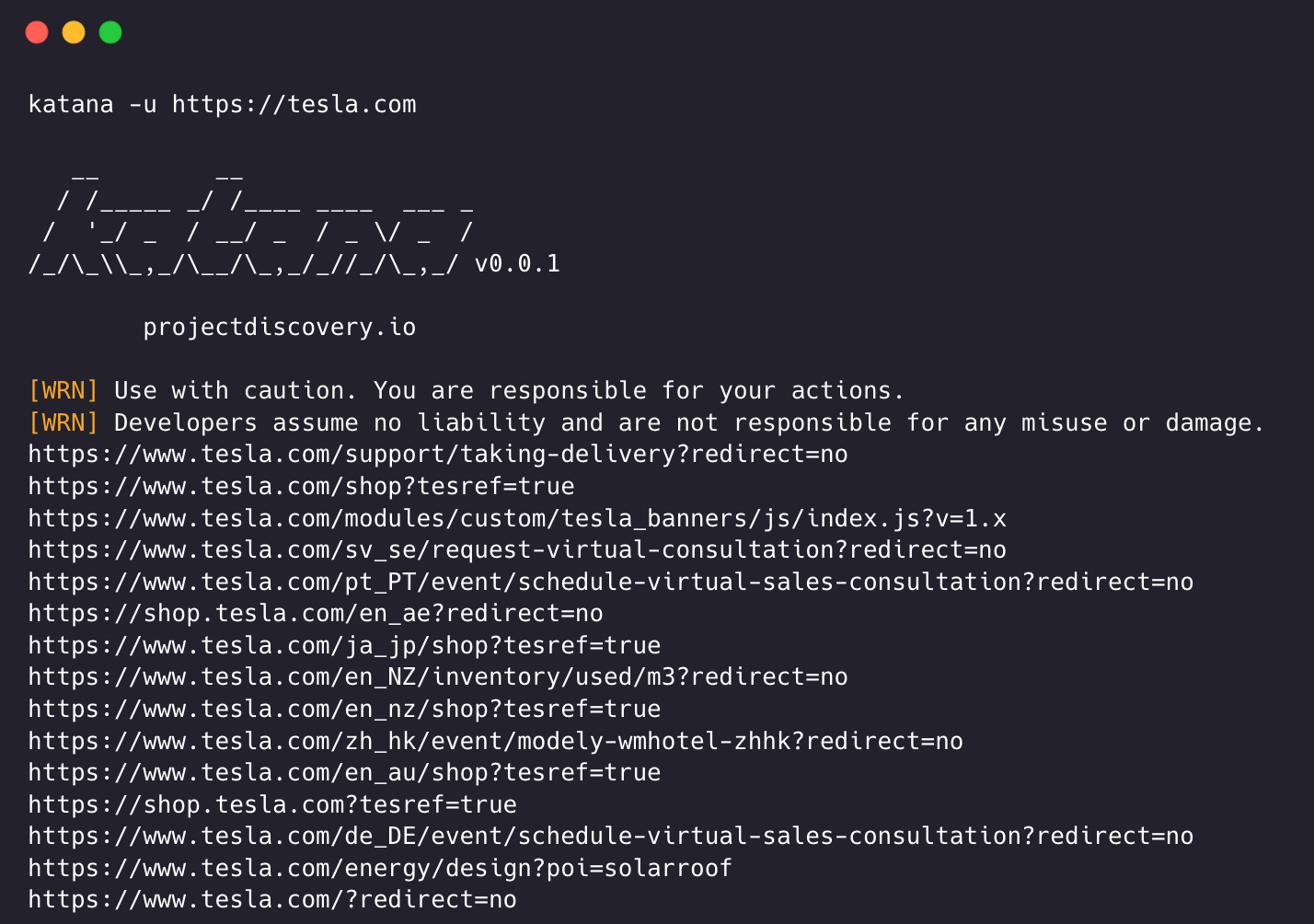

docker run projectdiscovery/katana:latest -u https://tesla.com

To run katana in headless mode using docker -

docker run projectdiscovery/katana:latest -u https://tesla.com -system-chrome -headless

On Ubuntu, it's recommended to install the following prerequisites -

sudo apt update

sudo snap refresh

sudo apt install zip curl wget git

sudo snap install golang --classic

wget -q -O - https://dl-ssl.google.com/linux/linux_signing_key.pub | sudo apt-key add -

sudo sh -c 'echo "deb http://dl.google.com/linux/chrome/deb/ stable main" >> /etc/apt/sources.list.d/google.list'

sudo apt update

sudo apt install google-chrome-stable

Install katana -

go install github.com/projectdiscovery/katana/cmd/katana@latest
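After `go install` finishes, one way to confirm the binary is reachable is to look it up on `PATH` before calling it. The sketch below is an assumption-laden illustration: `have_cmd` is a hypothetical helper, and the hint text relies on the standard Go behavior of placing installed binaries in `$GOBIN`, or `$(go env GOPATH)/bin` when `GOBIN` is unset.

```shell
#!/bin/sh
# Sketch: confirm a binary is reachable on PATH after `go install`.
# `have_cmd` is a hypothetical helper, not part of katana.
have_cmd() {
  command -v "$1" >/dev/null 2>&1
}

if have_cmd katana; then
  # Binary found: print the built-in help as a smoke test.
  katana -h
else
  # Binary missing: point at the usual Go install location.
  echo 'katana not found on PATH; go install writes binaries to $GOBIN or $(go env GOPATH)/bin' >&2
fi
```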

Usage

katana -h

This will display help for the tool. Here are all the switches it supports.

Katana is a fast crawler focused on execution in automation

pipelines offering both headless and non-headless crawling.

Usage:

./katana [flags]

Flags:

INPUT:

-u, -list string[] target url / list to crawl

-resume string resume scan using resume.cfg

-e, -exclude string[] exclude host matching specified filter ('cdn', 'private-ips', cidr, ip, regex)

CONFIGURATION:

-r, -resolvers string[] list of custom resolver (file or comma separated)

-d, -depth int maximum depth to crawl (default 3)

-jc, -js-crawl enable endpoint parsing / crawling in javascript file

-jsl, -jsluice enable jsluice parsing in javascript file (memory intensive)

-ct, -crawl-duration value maximum duration to crawl the target for (s, m, h, d) (default s)

-kf, -known-files string enable crawling of known files (all,robotstxt,sitemapxml), a minimum depth of 3 is required to ensure all known files are properly crawled.

-mrs, -max-response-size int maximum response size to read (default 4194304)

-timeout int time to wait for request in seconds (default 10)

-aff, -automatic-form-fill enable automatic form filling (experimental)

-fx, -form-extraction extract form, input, textarea & select elements in jsonl output

-retry int number of times to retry the request (default 1)

-proxy string http/socks5 proxy to use

-td, -tech-detect enable technology detection

-H, -headers string[] custom header/cookie to include in all http request in header:value format (file)

-config string path to the katana configuration file

-fc, -form-config string path to custom form configuration file

-flc, -field-config string path to custom field configuration file

-s, -strategy string Visit strategy (depth-first, breadth-first) (default "depth-first")

-iqp, -ignore-query-params Ignore crawling same path with different query-param values

-fsu, -filter-similar filter crawling of similar looking URLs (e.g., /users/123 and /users/456)

-fst, -filter-similar-threshold int number of distinct values before a path position is treated as parameter (default 10)

-tlsi, -tls-impersonate enable experimental client hello (ja3) tls randomization

-dr, -disable-redirects disable following redirects (default false)

-kb, -knowledge-base enable knowledge base classification

DEBUG:

-health-check, -hc run diagnostic check up

-elog, -error-log string file to write sent requests error log

-pprof-server enable pprof server

HEADLESS:

-hl, -headless enable headless hybrid crawling (experimental)

-sc, -system-chrome use local installed chrome browser instead of katana installed

-sb, -show-browser show the browser on the screen with headless mode

-ho, -headless-options string[] start headless chrome with additional options

-nos, -no-sandbox start headless chrome in --no-sandbox mode

-cdd, -chrome-data-dir string path to store chrome browser data

-scp, -system-chrome-path string use specified chrome browser for headless crawling

-noi, -no-incognito start headless chrome without incognito mode

-cwu, -chrome-ws-url string use chrome browser instance launched elsewhere with the debugger listening at this URL

-xhr, -xhr-extraction extract xhr request url,method in jsonl output

-pls, -page-load-strategy string page load strategy (heuristic, load, domcontentloaded, networkidle, none) (default "heuristic")

-dwt, -dom-wait-time int time in seconds to wait after page load when using domcontentloaded strategy (default 5)

-csp, -captcha-solver-provider string captcha solver provider (e.g. capsolver)

-csk, -captcha-solver-key string captcha solver provider api key

SCOPE:

-cs, -crawl-scope string[] in scope url regex to be followed by crawler

-cos, -crawl-out-scope string[] out of scope url regex to be excluded by crawler

-fs, -field-scope string pre-defined scope field (dn,rdn,fqdn) or custom regex (e.g., '(company-staging.io|company.com)') (default "rdn")

-ns, -no-scope disables host based default scope

-do, -display-out-scope display external endpoint from scoped crawling

FILTER:

-mr, -match-regex string[] regex or list of regex to match on output url (cli, file)

-fr, -filter-regex string[] regex or list of regex to filter on output url (cli, file)

-f, -field string field to display in output (url,path,fqdn,rdn,rurl,qurl,qpath,file,ufile,key,value,kv,dir,udir) (Deprecated: use -output-template instead)

-sf, -store-field string field to store in per-host output (url,path,fqdn,rdn,rurl,qurl,qpath,file,ufile,key,value,kv,dir,udir)

-em, -extension-match string[] match output for given extension (eg, -em php,html,js,none)

-ef, -extension-filter string[] filter output for given extension (eg, -ef png,css)

-ndef, -no-default-ext-filter bool remove default extensions from the filter list

-mdc, -match-condition string match response with dsl based condition

-fdc, -filter-condition string filter response with dsl based condition

-duf, -disable-unique-filter disable duplicate content filtering

-fpt, -filter-page-type string[] filter response with page type (e.g. error,captcha,parked)

RATE-LIMIT:

-c, -concurrency int number of concurrent fetchers to use (default 10)

-p, -parallelism int number of concurrent inputs to process (default 10)

-rd, -delay int request delay between each request in seconds

-rl, -rate-limit int maximum requests to send per second (default 150)

-rlm, -rate-limit-minute int maximum number of requests to send per minute

UPDATE:

-up, -update update katana to latest version

-duc, -disable-update-check disable automatic katana update check

OUTPUT:

-o, -output string file to write output to

-ot, -output-template string custom output template

-sr, -store-response store http requests/responses

-srd, -store-response-dir string store http requests/responses to custom directory

-ncb, -no-clobber do not overwrite output file

-sfd, -store-fi
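As a usage illustration for the flags listed above, the following sketch assembles a scoped, rate-limited crawl and prints it rather than running it. The specific values (depth 3, 5 fetchers, 50 requests per second, the `png,css` filter) are arbitrary examples, `https://example.com` and `results.txt` are placeholders, and `katana_scoped_crawl` is a hypothetical helper name; only the flags themselves come from the help text.

```shell
#!/bin/sh
# Sketch: build a katana command line from flags shown in the help output.
# The command is only printed unless DRY_RUN=0 is set.
katana_scoped_crawl() {
  target="$1"     # target url
  out_file="$2"   # file to write output to

  set -- -u "$target"        # INPUT: target url
  set -- "$@" -d 3           # CONFIGURATION: maximum depth to crawl
  set -- "$@" -jc            # CONFIGURATION: endpoint parsing in javascript files
  set -- "$@" -fs rdn        # SCOPE: pre-defined scope field
  set -- "$@" -ef png,css    # FILTER: drop output for these extensions
  set -- "$@" -c 5           # RATE-LIMIT: concurrent fetchers
  set -- "$@" -rl 50         # RATE-LIMIT: maximum requests per second
  set -- "$@" -o "$out_file" # OUTPUT: file to write output to

  if [ "${DRY_RUN:-1}" = "0" ]; then
    katana "$@"
  else
    echo "katana $*"
  fi
}

katana_scoped_crawl "https://example.com" "results.txt"
```

Building the argument list one flag per line keeps each choice commented and easy to remove; setting `DRY_RUN=0` runs the crawl for real and assumes katana is installed.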
