Schedoscope

Schedoscope is a scheduling framework for painfree agile development, testing, (re)loading, and monitoring of your datahub, lake, or whatever you choose to call your Hadoop data warehouse these days.

Install / Use

/learn @ottogroup/SchedoscopeREADME

Schedoscope is no longer under development by OttoGroup. Feel free to fork!

Introduction

Schedoscope is a scheduling framework for painfree agile development, testing, (re)loading, and monitoring of your datahub, datalake, or whatever you choose to call your Hadoop data warehouse these days.

Schedoscope makes the headache go away you are certainly going to get when having to frequently rollout and retroactively apply changes to computation logic and data structures in your datahub with traditional ETL job schedulers such as Oozie.

With Schedoscope,

- you never have to create DDL and schema migration scripts;

- you do not have to manually determine which data must be deleted and recomputed in face of retroactive changes to logic or data structures;

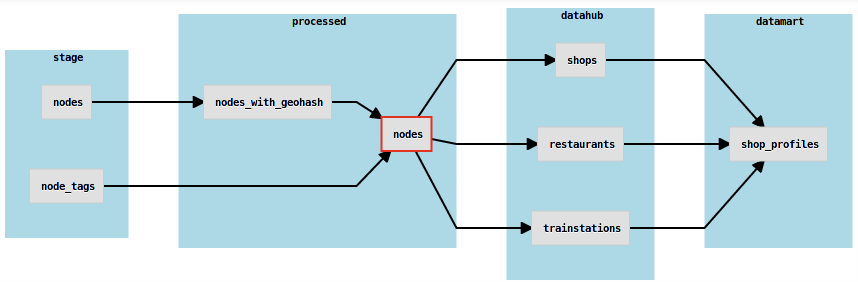

- you specify Hive table structures (called "views"), partitioning schemes, storage formats, dependent views, as well as transformation logic in a concise Scala DSL;

- you have a wide range of options for expressing data transformations - from file operations and MapReduce jobs to Pig scripts, Hive queries, Spark jobs, and Oozie workflows;

- you benefit from Scala's static type system and your IDE's code completion to make less typos that hit you late during deployment or runtime;

- you can easily write unit tests for your transformation logic in ScalaTest and run them quickly right out of your IDE;

- you schedule jobs by expressing the views you need - Schedoscope takes care that all required dependencies - and only those- are computed as well;

- you can easily export view data in parallel to external systems such as Redis caches, JDBC, or Kafka topics;

- you have Metascope - a nice metadata management and data lineage tracing tool - at your disposal;

- you achieve a higher utilization of your YARN cluster's resources because job launchers are not YARN applications themselves that consume cluster capacitity.

Getting Started

Get a glance at

Build it:

[~]$ git clone https://github.com/ottogroup/schedoscope.git

[~]$ cd schedoscope

[~/schedoscope]$ MAVEN_OPTS='-XX:MaxPermSize=512m -Xmx1G' mvn clean install

Follow the Open Street Map tutorial to install and run Schedoscope in a standard Hadoop distribution image:

Take a look at the View DSL Primer to get more information about the capabilities of the Schedoscope DSL:

Read more about how Schedoscope actually performs its scheduling work:

More documentation can be found here:

Check out Metascope! It's an add-on to Schedoscope for collaborative metadata management, data discovery, exploration, and data lineage tracing:

When is Schedoscope not for you?

Schedoscope is based on the following assumptions:

- data are largely relational and meaningfully representable as Hive tables;

- there is enough cluster time and capacity to actually allow for retroactive recomputation of data;

- it is acceptable to compile table structures, dependencies, and transformation logic into what is effectively a project-specific scheduler;

- it is acceptable that your scheduler creates and possibly drops Hive tables and databases it manages.

Should any of those assumptions not hold in your context, you should probably look for a different scheduler.

Origins

Schedoscope was conceived at the Business Intelligence department of Otto Group

Contributions

The following people have contributed to the various parts of Schedoscope so far:

Utz Westermann (maintainer), Kassem Tohme, Alexander Kolb, Christian Richter, Jan Hicken, Diogo Aurelio, Hans-Peter Zorn, Dominik Benz, Annika Seidler, Martin Sänger, Julian Keppel.

We would love to get contributions from you as well. We haven't got a formalized submission process yet. If you have an idea for a contribution or even coded one already, get in touch with Utz or just send us your pull request. We will work it out from there.

Please help making Schedoscope better!

News

05/08/2018 - Release 0.10.2

We have released Version 0.10.2 as a Maven artifact to our Bintray repository (see Setting Up A Schedoscope Project for an example pom).

We have changed the materialization logic of materializeOnce views such that they no longer ask their child views to materialize if the materializeOnce views have been materialized already. This improves performance.

04/24/2018 - Release 0.10.1

We have released Version 0.10.1 as a Maven artifact to our Bintray repository (see Setting Up A Schedoscope Project for an example pom).

This is a bugfix release correcting the order of the TBLPROPERTIES and LOCATION clauses in the Hive DDL generated for views. Please do note that if you use the tblProperties clause in some views, this change affects the DDL checksum making Schedoscope drop and recreate the respective tables. Hence the version bump to 0.10.1.

Thanks to Julian Keppel for reporting the issue and providing the fix.

04/06/2018 - Release 0.9.13

We have released Version 0.9.13 as a Maven artifact to our Bintray repository (see Setting Up A Schedoscope Project for an example pom).

Removed derelict indirect CDH5.12.0 dependencies incurred by Cloudera's Spark 2.2.0-Cloudera2 dependency.

03/15/2018 - Release 0.9.11

We have released Version 0.9.11 as a Maven artifact to our Bintray repository (see Setting Up A Schedoscope Project for an example pom).

Added configuration parameter schedoscope.export.disableAll to globally disable all view exports. Useful in test environments.

03/09/2018 - Release 0.9.10

We have released Version 0.9.10 as a Maven artifact to our Bintray repository (see Setting Up A Schedoscope Project for an example pom).

Upgraded Cloudera dependencies to CDH 5.14.0.

01/25/2018 - Release 0.9.9

We have released Version 0.9.9 as a Maven artifact to our Bintray repository (see Setting Up A Schedoscope Project for an example pom).

Export your views to Google Cloud Platform's BigQuery via a simple exportAs() statement.

BigQuery export now compresses view data before sending it off to Google Cloud Storage.

01/24/2018 - Release 0.9.7

We have released Version 0.9.7 as a Maven artifact to our Bintray repository (see Setting Up A Schedoscope Project for an example pom).

Optimized performance of BigQuery export by moving more work to the map phase of the export job.

01/23/2018 - Release 0.9.6

We have released Version 0.9.6 as a Maven artifact to our Bintray repository (see Setting Up A Schedoscope Project for an example pom).

Corrected a problem with command line argument construction within BigQuery exportAs() clauses in a Kerberized cluster.

01/22/2018 - Release 0.9.5

We have released Version 0.9.5 as a Maven artifact to our Bintray repository (see Setting Up A Schedoscope Project for an example pom).

Export your views to Google Cloud Platform's BigQuery via a simple exportAs() statement.

10/12/2017 - Release 0.9.4

We have released Version 0.9.4 as a Maven artifact to our Bintray repository (see Setting Up A Schedoscope Project for an example pom).

Emergency bug fix for Schedoscope crashing upon exports. Do not use 0.9.3!

10/11/2017 - Release 0.9.3

We have released Version 0.9.3 as a Maven artifact to our Bintray repository (see Setting Up A Schedoscope Project for an example pom).

Minor bug fix. Show view name in resource manager also for transformations of views that have exportAs statements.

09/21/2017 - Release 0.9.2

We have released Version 0.9.2 as a Maven artifact to our Bintray repository (see Setting Up A Schedoscope Project for an example pom)