Machisplin

An R package for interpolation of noisy multi-variate data through comprehensive statistical analyses using thin-plate-smoothing splines and machine learning ensembling.

Install / Use

/learn @jasonleebrown/MachisplinREADME

machisplin 2.0

<p> September 19, 2025</p> <p> I am pleased to announce that MACHISPLIN 2.0 has been released! </p> <p> Main features: </p> <p> -updated to work in the Terra infrastructure (rgeos, raster, dismo, rgdal... all have been retired) </p> <p> -fixed several bugs associated with thin-plate splines </p> <p>Known bugs: </p> <p>- Multicore support is not currently functional. I am debating abandoning this entirely, as I primarily use it for single- or dual-core analyses due to the massive RAM requirements for large projects. </p>machisplin

An R package for interpolation of noisy multivariate data through comprehensive statistical analyses using thin-plate-smoothing splines and machine learning ensembling. This package is a free open-source machine learning analog to the expensive ANUSPLIN software.

To install, open R and type the following:

install.packages("devtools")

library(devtools)

install_github("jasonleebrown/machisplin")

Contact

We can help you sort out issues, maybe

Input Data formats

NOTE: All data must be in World Geodetic System 1984 (WGS 84). Several of the functions assume this and will not work if in another projection. I may expand this to other projections/datums in the future based on others' needs and my free time. To explore the data format for input data, see:

library(raster)

library(MACHISPLIN)

## data format that will be interpolated, each column is a different dataset

Mydata<-sampling

# rasters to use as high-resolution covariates for downscaling

ALT = rast(system.file("extdata", "alt.tif", package="MACHISPLIN"))

SLOPE = rast(system.file("extdata", "slope.tif", package="MACHISPLIN"))

TWI = rast(system.file("extdata", "TWI.tif", package="MACHISPLIN"))

If needed, see guide below to convert raster GIS data for use as in 'MACHISPLIN'

What does it do?

This R package interpolates noisy multivariate data through machine learning* ensembling of up to six algorithms: boosted regression trees (BRT), neural networks (NN); generalized additive model (GAM), multivariate adaptive regression splines (MARS), support vector machines (SVM), and random forests (RF). The machisplin.mltps function simultaneously evaluates different combinations of the six algorithms to predict the input data. During model tuning, each algorithm is systematically weighted from 0-1, and the fit of the ensembled model is evaluated. The best performing model is determined through k-fold cross-validation (k=10), and the model that has the lowest residual sum of squares of test data is chosen. After determining the best model algorithms and weights, a final model is created using the full training dataset. Residuals of the final model are calculated from the full training dataset and these values are interpolated using thin-plate-smoothing splines. This creates a continuous error surface and is used to correct most the residual error in the final ensemble model.

This package is a free open-source machine learning analog to the expensive ANUSPLIN software. To output final R2 values, model weights, algorithm(s) used, and rasters for use in GIS; use the machisplin.write.geotiff function. To output residuals, use machisplin.write.residuals and to output model loadings use machispline.write.loadings. *just to clarify, GAMs are not a machine learning method

Overview of process

Details of modeling using higher resolution covariates

Example of the results (all with R2>0.99).

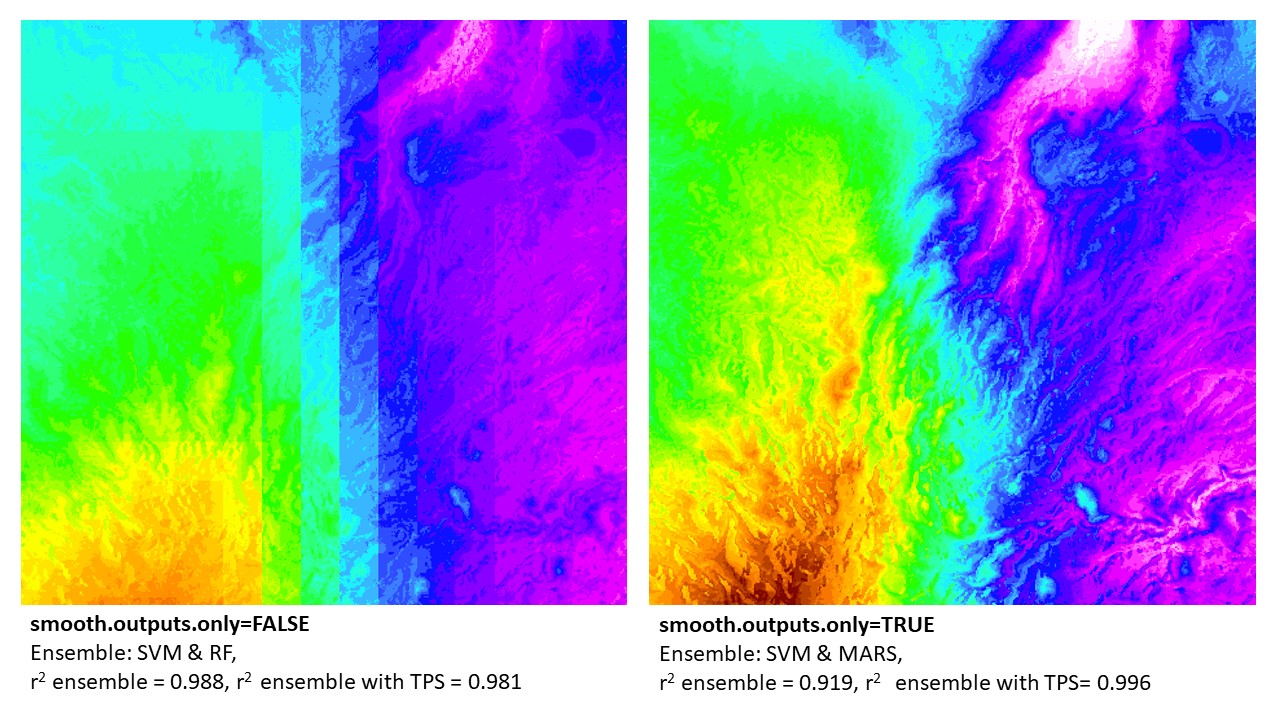

Update: New Parameter (1/15/2021): smooth.outputs.only

I recently added a new parameter 'smooth.outputs.only' to the machisplin.mltps function to address an issue with occasionally blocky results. If 'smooth.outputs.only=TRUE', this removes Boosted Regressive Trees and Random Forests from the modeling algorithms. Both algorithms occasionally produce blocky outputs (see example below). I recommend first using 'smooth.outputs.only=FALSE' for the initial analyses. If you are unhappy with the visual appearance of the created layers, consider 'smooth.outputs.only=TRUE'. Be aware if results are blocky and you exclude these two algorithms, then the predictive performance might decline (as before exclusion, one or both contributed to the best model).

My biggest concern with blocky interpolated surfaces, in addition to looking bad, is that the blockiness itself might be the result of overfitting to noise in the training datasets. In most cases, I think smooth, continuous surfaces best characterize most spatial processes. Thus, smoother results might better represent the truth, despite slightly lower performance values.

BIO13 downscaled in northern Madagascar: example of 'smooth.outputs.only' parameter. Note that the final results that are merged with thin-plate-splines (TPS) error surfaces were better using the 'smooth.outputs.only=TRUE' (vs. 'smooth.outputs.only=FALSE'). In this case, the TPS could better 'fix' the 'smooth.outputs.only=TRUE' ensemble models. In contrast, the 'smooth.outputs.only=FALSE' ensemble models (without TPS) had a higher r2. This can be due to the TPS not being able to correct error in blocky models. In this example (and all where r2 ensemble > r2 ensemble + TPS), the final model produced was the r2 ensemble (w/o TPS). However, in all cases, the raw ensemble of 'smooth.outputs.only=FALSE' will always be lower than, or equal to, the model run with 'smooth.outputs.only=TRUE'.

BIO13 downscaled in northern Madagascar: example of 'smooth.outputs.only' parameter. Note that the final results that are merged with thin-plate-splines (TPS) error surfaces were better using the 'smooth.outputs.only=TRUE' (vs. 'smooth.outputs.only=FALSE'). In this case, the TPS could better 'fix' the 'smooth.outputs.only=TRUE' ensemble models. In contrast, the 'smooth.outputs.only=FALSE' ensemble models (without TPS) had a higher r2. This can be due to the TPS not being able to correct error in blocky models. In this example (and all where r2 ensemble > r2 ensemble + TPS), the final model produced was the r2 ensemble (w/o TPS). However, in all cases, the raw ensemble of 'smooth.outputs.only=FALSE' will always be lower than, or equal to, the model run with 'smooth.outputs.only=TRUE'.

Example 1 - using provided datasets

library(MACHISPLIN)

library(terra)

## load spatial data with (coordinates named exactly as 'long' and 'lat') and any number of layers to downscale

## note this can be from a raster of lower resolution climate data or point weather station data

data(sampling)

Mydata<-sampling

# load rasters to use as high-resolution covariates for downscaling

ALT = rast(system.file("extdata", "alt.tif", package="MACHISPLIN"))

SLOPE = rast(system.file("extdata", "slope.tif", package="MACHISPLIN"))

TWI = rast(system.file("extdata", "TWI.tif", package="MACHISPLIN"))

# function input: raster brick of covariates

raster_stack<-c(ALT,SLOPE,TWI)

# run an ensemble machine learning thin plate spline

interp.rast<-machisplin.mltps(int.values=Mydata, covar.ras=raster_stack, smooth.outputs.only=FALSE, tps=FALSE)

machisplin.write.geotiff(mltps.in=interp.rast)

machisplin.write.residuals(mltps.in=interp.rast)

machisplin.write.loadings(mltps.in=interp.rast)

Example 2 - typical workflow

see below for help formatting raster/environment data.

library(MACHISPLIN)

library(terra)

# Import a csv as shapefile:

Mydata<-read.delim("sampling.csv", sep=",", h=T)

# load rasters to use as high-resolution covariates for downscaling

ALT = rast("SRTM30m.tif")

SLOPE = rast("ln_slope.tif")

TWI = rast("TWI.tif")

# function input: raster brick of covariates

raster_stack<-c(ALT,SLOPE,TWI)

# run an ensemble machine learning thin plate spline

interp.rast<-machisplin.mltps(int.values=Mydata, covar.ras=raster_stack, smooth.outputs.only=TRUE, tps=TRUE)

# to plot results (change number to select different output raster)

plot(interp.rast[[1]]$final)

# to view residuals (change number to select different output raster)

interp.rast[[1]]$residuals

# to view model loadings

interp.rast[[1]]$var.imp

# to view other model parameters and other parameters

interp.rast[[1]]$summary

Example 3 - a loop

a loop for difficult/large datasets

library(MACHISPLIN)

library(terra)

# Import a csv as shapefile:

Mydata<-read.delim("sampling.csv", sep=",", h=T)

# load rasters to use as high resolution co-variates for downscaling

ALT = terra::rast("SRTM30m.tif")

SLOPE = terra::rast("ln_slope.tif")

ASPECT = terra::rast("aspect.tif")

GEOMORPH = terra::rast("geomorphons.tif")

TWI = terra::rast("TWI.tif")

# function input: raster brick of covarites

raster_stack<-terra::c(ALT,SLOPE,TWI,GEOMORPH, ASPECT)

# n of iterpolations - subtrack x and y

i.lyrs<-ncol(Mydata)-2

# a simple loop to iterate through your input data, and as it finishes layers, it saves them. This is nice in the event of errors

for (i in 1:i.lyrs){

Mydat<-cbind(Mydata[1:2],Mydata[i+2])

interp.rast<-machisplin.mltps(int.values=Mydat, covar.ras=raster_stack, smooth.outputs.only=TRUE, tps=TRUE)

machisplin.write.geotiff(mltps.in=interp.rast)

machisplin.write.residuals(mltps.in=interp.rast)

machisplin.write.loadings(mltps.in=interp.rast)

}

Example 4 - tiling the input landscape/data to downscale very large landscapes

a loop for very large datasets -important: downscaled tiled landscapes built in this manner are not guaranteed to use the same model

library(MACHISPLIN)

library(terra)

# Import a csv as shapefile:

Mydata<-read.delim("sampling.csv", sep=",", h=T)

# load rasters to use as high resolution co-variates for downscaling

ALT = terra::rast("SRTM30m.tif")

SLOPE = terra::rast("ln_slope.tif")

ASPECT = terra::rast("aspect.tif")

GEOMORPH = terra::rast("geomorphons.tif")

TWI = terra::rast("TWI.tif")

# function input: raster brick of covarites

raster_stack<-terra::c(ALT,SLOPE,TWI,GEOMORPH, ASPECT)

# sub-divide landscape into smaller units to facilite downscaling

tile<-machisplin.tiles.create(rast.in= raster_stack, int.values=Mydata,out.ncol=2, out.nrow=2, feather.d=50)

# run an ensemble machine learning thin plate spline - tile 1:4

inte

Related Skills

YC-Killer

2.7kA library of enterprise-grade AI agents designed to democratize artificial intelligence and provide free, open-source alternatives to overvalued Y Combinator startups. If you are excited about democratizing AI access & AI agents, please star ⭐️ this repository and use the link in the readme to join our open source AI research team.

best-practices-researcher

The most comprehensive Claude Code skills registry | Web Search: https://skills-registry-web.vercel.app

groundhog

400Groundhog's primary purpose is to teach people how Cursor and all these other coding agents work under the hood. If you understand how these coding assistants work from first principles, then you can drive these tools harder (or perhaps make your own!).

last30days-skill

19.5kAI agent skill that researches any topic across Reddit, X, YouTube, HN, Polymarket, and the web - then synthesizes a grounded summary