Inseq

Interpretability for sequence generation models 🐛 🔍


Inseq is a PyTorch-based hackable toolkit to democratize access to common post-hoc interpretability analyses of sequence generation models.

Installation

Inseq is available on PyPI and can be installed with pip for Python >= 3.10, <= 3.13:

# Install latest stable version

pip install inseq

# Alternatively, install latest development version

pip install git+https://github.com/inseq-team/inseq.git

Install extras for visualization in Jupyter Notebooks and 🤗 datasets attribution as pip install inseq[notebook,datasets].

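To install the package from source instead, clone the repository and run the following commands from its root:

cd inseq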

make uv-download # Download and install the uv package manager

make install # Installs the package and all dependencies via uv sync

For library developers, you can use the make install-dev command to install all development dependencies (quality, docs, extras).

After installation, you should be able to run make fast-test and make lint without errors.

- Installing the tokenizers package requires a Rust compiler installation. You can install Rust from https://rustup.rs and add $HOME/.cargo/env to your PATH.

- Installing sentencepiece requires various packages; install them with sudo apt-get install cmake build-essential pkg-config or brew install cmake gperftools pkg-config.

Example usage in Python

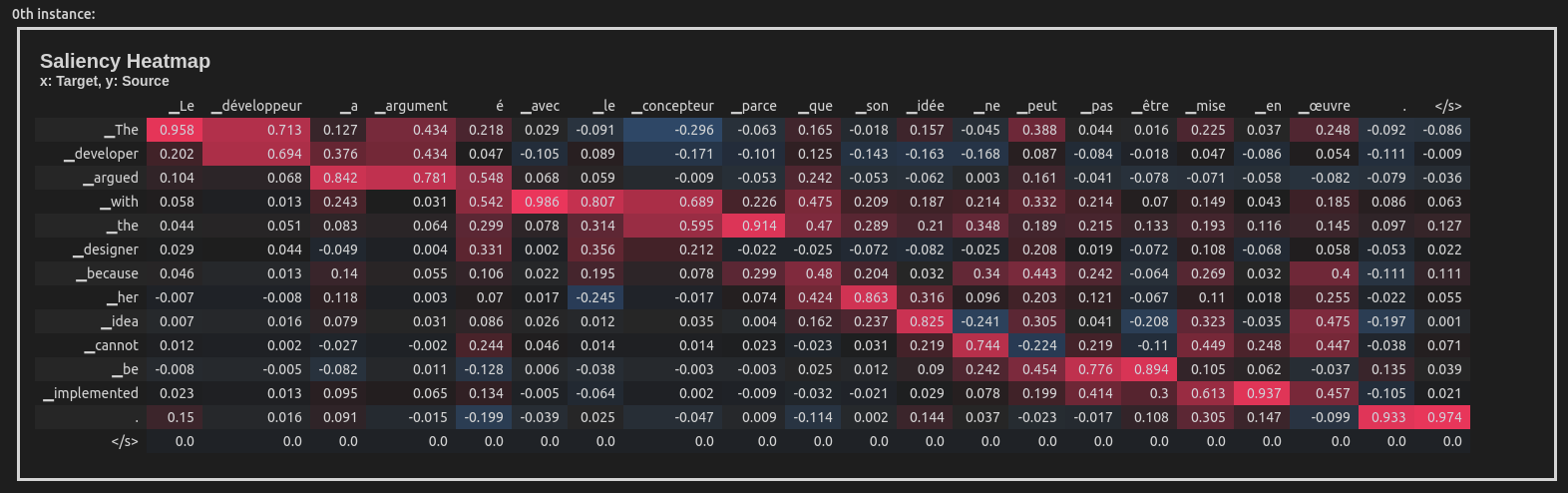

This example uses the Integrated Gradients attribution method to attribute the English-French translation of a sentence taken from the WinoMT corpus:

import inseq

model = inseq.load_model("Helsinki-NLP/opus-mt-en-fr", "integrated_gradients")

out = model.attribute(

"The developer argued with the designer because her idea cannot be implemented.",

n_steps=100

)

out.show()

This produces a visualization of the attribution scores for each token in the input sentence (token-level aggregation is handled automatically). Here is what the visualization looks like inside a Jupyter Notebook:

Inseq also supports decoder-only models such as GPT-2, enabling usage of a variety of attribution methods and customizable settings directly from the console:

import inseq

model = inseq.load_model("gpt2", "integrated_gradients")

model.attribute(

"Hello ladies and",

generation_args={"max_new_tokens": 9},

n_steps=500,

internal_batch_size=50

).show()
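Both examples above pass n_steps to Integrated Gradients. The following is a minimal, library-free sketch of what that parameter controls, assuming a simple midpoint Riemann sum along the straight path from a baseline to the input. The names integrated_gradients, toy_model and toy_grad are hypothetical and are not part of Inseq; the approximation scheme used by the library itself may differ.

```python
from typing import Callable, List, Sequence


def integrated_gradients(
    grad_fn: Callable[[Sequence[float]], List[float]],
    x: Sequence[float],
    baseline: Sequence[float],
    n_steps: int = 100,
) -> List[float]:
    """Approximate integrated gradients with a midpoint Riemann sum.

    Illustrative only: this is not how Inseq implements the method.
    """
    totals = [0.0] * len(x)
    for step in range(n_steps):
        # Midpoint of the current interval on the path baseline -> x.
        alpha = (step + 0.5) / n_steps
        point = [b + alpha * (xi - b) for xi, b in zip(x, baseline)]
        for i, g in enumerate(grad_fn(point)):
            totals[i] += g
    # Scale the averaged gradient by the input-baseline difference.
    return [(xi - b) * t / n_steps for xi, b, t in zip(x, baseline, totals)]


# Toy differentiable "model" (an assumption for this sketch):
# f(x) = x0^2 + 3 * x0 * x1
def toy_model(x: Sequence[float]) -> float:
    return x[0] ** 2 + 3 * x[0] * x[1]


def toy_grad(x: Sequence[float]) -> List[float]:
    return [2 * x[0] + 3 * x[1], 3 * x[0]]


x = [1.0, 2.0]
baseline = [0.0, 0.0]
attributions = integrated_gradients(toy_grad, x, baseline, n_steps=100)

# Completeness: the attributions sum to f(x) - f(baseline).
gap = abs(sum(attributions) - (toy_model(x) - toy_model(baseline)))
print(attributions, gap)  # attributions are close to [4.0, 3.0]
```

For a real model the gradient is not linear along the path, so a larger n_steps generally tightens the approximation at the cost of more forward and backward passes.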

Features

- 🚀 Feature attribution of sequence generation for most ForConditionalGeneration (encoder-decoder) and ForCausalLM (decoder-only) models from 🤗 Transformers.

- 🚀 Support for multiple feature attribution methods, extending the ones supported by Captum.

- 🚀 Post-processing, filtering and merging of attribution maps via Aggregator classes.

- 🚀 Attribution visualization in notebooks, browser and command line.

- 🚀 Efficient attribution of single examples or entire 🤗 datasets with the Inseq CLI.

- 🚀 Custom attribution of target functions, supporting advanced methods such as contrastive feature attributions and context reliance detection.

- 🚀 Extraction and visualization of custom scores (e.g. probability, entropy) at every generation step alongside attribution maps.
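To make the idea of merging attribution maps concrete, here is a small library-free sketch of one possible aggregation: summing subword-level scores into word-level scores. The function merge_subword_scores, the "▁" word-boundary marker and the example scores are all assumptions for illustration; they do not reproduce Inseq's Aggregator classes, which may use different rules.

```python
from typing import List, Tuple


def merge_subword_scores(
    tokens: List[str],
    scores: List[float],
    boundary: str = "▁",
) -> List[Tuple[str, float]]:
    """Sum attribution scores of subword tokens belonging to the same word.

    A token starting with `boundary` is assumed to open a new word
    (SentencePiece-style); any other token continues the previous word.
    """
    merged: List[Tuple[str, float]] = []
    for token, score in zip(tokens, scores):
        if token.startswith(boundary) or not merged:
            # Start a new word, dropping the boundary marker if present.
            word = token[len(boundary):] if token.startswith(boundary) else token
            merged.append((word, score))
        else:
            # Continue the current word and accumulate its score.
            word, total = merged[-1]
            merged[-1] = (word + token, total + score)
    return merged


# Made-up scores, chosen only to illustrate the merge.
tokens = ["▁The", "▁develop", "er", "▁argued"]
scores = [0.125, 0.25, 0.5, 0.125]
word_scores = merge_subword_scores(tokens, scores)
print(word_scores)
```

Summation preserves the total attribution mass; other choices such as taking the maximum or the mean per word are equally plausible depending on the analysis.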

Supported methods

Use the inseq.list_feature_attribution_methods function to list all available method identifiers and inseq.list_step_functions to list all available step functions. The following methods are currently supported:

Gradient-based attribution

- saliency: Deep Inside Convolutional Networks: Visualising Image Classification Models and Saliency Maps (Simonyan et al., 2013)

- input_x_gradient: [Deep Inside Convolutional Networks: Visualising Image Classification
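As a rough intuition for the two gradient-based identifiers listed above, the sketch below contrasts them on a toy linear scorer. It assumes that saliency takes the absolute gradient and that input × gradient multiplies each input by its gradient; the function names and the toy weights are hypothetical and do not reflect Inseq's internals.

```python
from typing import List, Sequence


def saliency(grad: Sequence[float]) -> List[float]:
    """Absolute gradient magnitude per input feature."""
    return [abs(g) for g in grad]


def input_x_gradient(x: Sequence[float], grad: Sequence[float]) -> List[float]:
    """Element-wise product of each input feature and its gradient."""
    return [xi * g for xi, g in zip(x, grad)]


# Toy linear scorer f(x) = sum(w_i * x_i), whose gradient w.r.t. x is w.
weights = [0.5, 3.0, -4.0]
x = [2.0, -1.0, 0.5]

sal = saliency(weights)
ixg = input_x_gradient(x, weights)
print(sal)  # [0.5, 3.0, 4.0]
print(ixg)  # [1.0, -3.0, -2.0]
```

For this linear scorer the input × gradient scores sum exactly to the model output, while saliency only ranks features by sensitivity and discards the sign.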
