LuckysheetServerStarter

LuckysheetServer docker deployment startup template

Introduction

💻 A Docker deployment startup template for LuckysheetServer.

Demo

- Collaborative editing demo (Note: please do not perform operations too frequently, to avoid crashing the server)

Tutorial

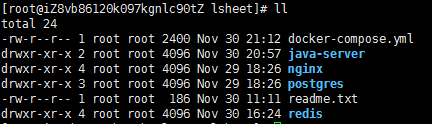

Folder structure

C:\Users\Administrator\Desktop\lsheet>tree /f
│  docker-compose.yml            # Deployment file
│
├─java-server
│      application-dev.yml       # Project configuration
│      application.yml           # Project configuration
│      web-lockysheet-server.jar # Project
│
├─nginx
│  │  nginx.conf                 # nginx configuration
│  │
│  ├─html                        # nginx static folder
│  │      index.html             # Luckysheet demo
│  │
│  └─logs                        # nginx log folder
├─postgres
│  │  init.sql                   # postgres initialization file
│  │
│  └─data                        # postgres data folder
└─redis
    │  redis.conf                # redis configuration file
    │
    ├─data                       # redis data folder
    └─logs                       # redis log folder

Requirements

- Install docker
- Install docker-compose
- Install curl

yum install curl
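Before continuing, it can help to confirm that all three tools are on the `PATH`. The following is a minimal sketch, not part of the original template; the function name `missing_tools` is made up for illustration.

```shell
#!/bin/sh
# Print the name of every tool from the argument list that is not on PATH.
# `missing_tools` is a hypothetical helper, not part of this repository.
missing_tools() {
    for tool in "$@"; do
        # `command -v` exits non-zero when the command cannot be found
        command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
    done
}

# Example call (prints nothing when everything is installed):
# missing_tools docker docker-compose curl
```

Any name printed by the call still needs to be installed before the steps below will work.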

1. Enter the project folder

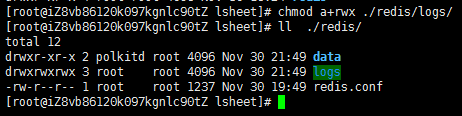

2. Set redis/logs directory permissions

chmod a+rwx ./redis/logs/

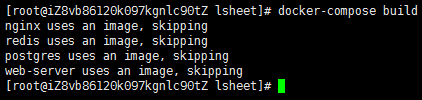

3. Build the images

docker-compose build

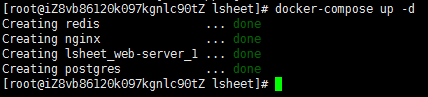

4. Start the containers in the background

docker-compose up -d

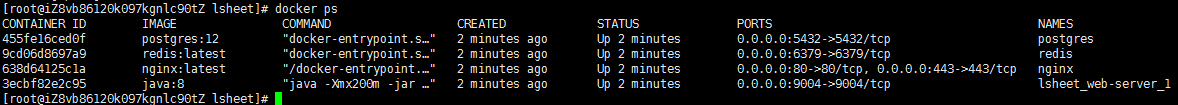

5. View the running containers

docker ps

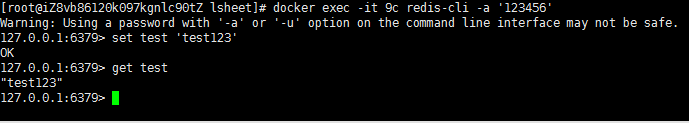
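Steps 1 to 5 can be chained into a single helper so that a failure at any step stops the sequence. This is a minimal sketch rather than part of the template; `start_stack` is a hypothetical name, and its argument is whatever directory holds `docker-compose.yml`.

```shell
#!/bin/sh
# Run the startup steps in order; stop at the first command that fails.
# The body runs in a subshell, so the caller's working directory is unchanged.
# `start_stack` is a hypothetical helper, not part of this repository.
start_stack() (
    cd "$1" &&                      # 1. enter the project folder
    chmod a+rwx ./redis/logs/ &&    # 2. make the redis log directory writable
    docker-compose build &&         # 3. build the images
    docker-compose up -d &&         # 4. start the containers in the background
    docker ps                       # 5. list the running containers
)

# Example call:
# start_stack /path/to/lsheet
```

The function returns the exit status of the first failing command, so it can be used directly in an `if` or followed by `|| exit 1` in a larger script.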

6. Verification

- redis verification

docker exec -it [container ID] redis-cli -a '123456'

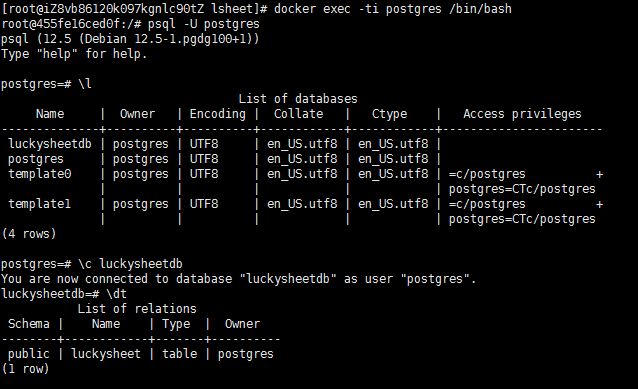

- postgres verification

#Enter the container

docker exec -ti postgres /bin/bash

#Log in to postgres

psql -U postgres

#List all databases

\l

#Switch database

\c luckysheetdb

#List all table names

\dt

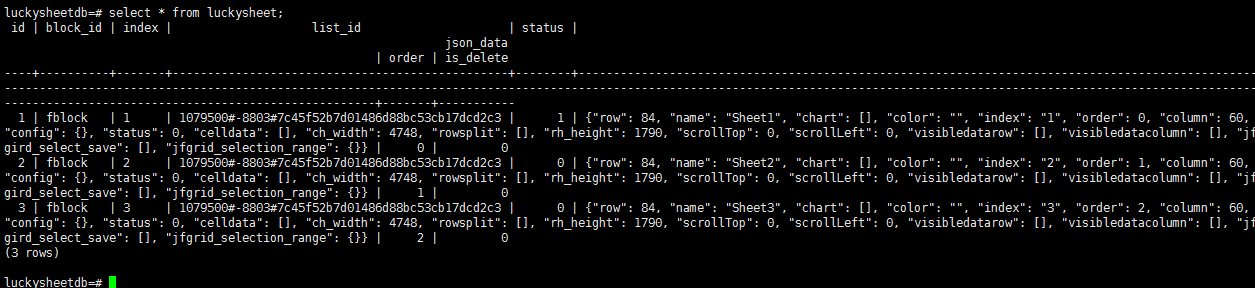

#View table data

select * from luckysheet;

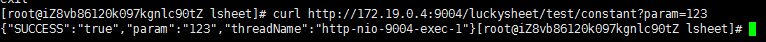

- Verify the Java application (using the test URL)

curl http://172.19.0.4:9004/luckysheet/test/constant?param=123

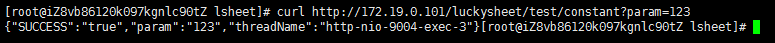

- Verify that nginx proxies requests to the Java application

curl http://172.19.0.101/luckysheet/test/constant?param=123

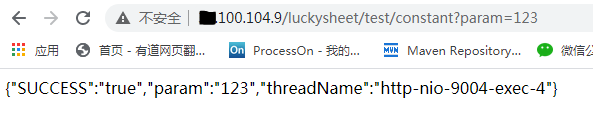

- This example is deployed on a cloud server, so it can also be tested from a browser

http://xx.100.104.9/luckysheet/test/constant?param=123

Visit the Luckysheet demo

# Static demo

http://xx.100.104.9

# Collaborative editing mode

http://xx.100.104.9?share
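The checks in this step can also be run non-interactively, which is convenient in a deployment script. The sketch below assumes the container name `postgres`, the Redis password `123456`, and the container IP addresses used in the commands above; all function names are hypothetical helpers, not part of the repository.

```shell
#!/bin/sh
# Redis: $1 = container ID or name. Succeeds when the server answers PONG.
check_redis() {
    [ "$(docker exec "$1" redis-cli -a '123456' ping 2>/dev/null)" = "PONG" ]
}

# Postgres: print the number of rows in the luckysheet table.
# -t prints tuples only, -A disables alignment, -c runs a single command.
count_rows() {
    docker exec postgres psql -U postgres -d luckysheetdb -tAc 'select count(*) from luckysheet;'
}

# Build the test URL for a given host or host:port.
test_url() {
    printf 'http://%s/luckysheet/test/constant?param=123\n' "$1"
}

# Poll a URL until curl gets a successful HTTP response or the attempts run out.
# $1 = URL, $2 = number of attempts (default 30), one second apart.
wait_for_url() {
    url=$1
    attempts=${2:-30}
    while [ "$attempts" -gt 0 ]; do
        # -s: silent, -f: fail on HTTP errors, -o /dev/null: discard the body
        if curl -sf -o /dev/null "$url"; then
            return 0
        fi
        attempts=$((attempts - 1))
        sleep 1
    done
    return 1
}

# Example: the Java application directly, then through nginx
# wait_for_url "$(test_url 172.19.0.4:9004)" && wait_for_url "$(test_url 172.19.0.101)"
```

Polling is used because the Java application may need some time to start after `docker-compose up -d` returns.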

7. Configuration files

- nginx configuration

#Running user

#user nobody;

#Number of worker processes (should not exceed the number of CPU cores)

worker_processes 1;

#Error log save location

error_log /var/log/nginx/error.log;

#PID file location

pid /var/log/nginx/nginx.pid;

#Event handling settings

events {

#Open under Linux to improve performance

#use epoll;

#Maximum number of connections per process (maximum connection = number of connections x number of processes)

worker_connections 1024;

}

http {

#File extension and file type mapping table

include mime.types;

#Default file type

default_type application/octet-stream;

#Log output format. Defined at this level, it applies globally

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

#Request log save location

access_log /var/log/nginx/access.log main;

#Enable sendfile

sendfile on;

#tcp_nopush on;

#keepalive_timeout 0;

keepalive_timeout 65;

#Turn on gzip compression

#gzip on;

gzip on;

gzip_min_length 1k;

gzip_buffers 16 64k;

gzip_http_version 1.0;

gzip_comp_level 7;

#Do not compress images (only the text-based types listed below)

gzip_types text/plain application/x-javascript text/javascript application/x-httpd-php text/css text/xml text/jsp application/eot application/ttf application/otf application/svg application/woff;

gzip_vary on;

gzip_disable "MSIE [1-6].";

#websocket

map $http_upgrade $connection_upgrade {

default upgrade;

'' close;

}

#Set the server list for load balancing

upstream luckysheetserver {

server 172.19.0.4:9004 weight=1;

}

#The first virtual host

server {

#Listening IP port

listen 80;

#Host name

server_name localhost;

#Set character set

#charset koi8-r;

#Access log for this virtual host only (scoped like a local variable)

#access_log logs/host.access.log main;

proxy_set_header X-Forwarded-Host $host;

proxy_set_header X-Forwarded-Server $host;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

location /luckysheet/websocket/luckysheet {

proxy_pass http://luckysheetserver/luckysheet/websocket/luckysheet;

proxy_set_header Host $host;

proxy_set_header X-real-ip $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_connect_timeout 1800s;

proxy_read_timeout 600s;

proxy_send_timeout 600s;

#websocket

proxy_http_version 1.1;

proxy_set_header Upgrade $http_upgrade;

proxy_set_header Connection "upgrade";

}

location /luckysheet/ {

proxy_pass http://luckysheetserver;

proxy_connect_timeout 1800;

proxy_read_timeout 600;

}

location / {

root /usr/share/nginx/html/;

index index.html index.htm;

proxy_connect_timeout 1800;

proxy_read_timeout 600;

#websocket

proxy_http_version 1.1;

proxy_set_header Upgrade $http_upgrade;

proxy_set_header Connection "upgrade";

}

#Separate static content from dynamic requests

location ~ .*\.(html|js|css|jpg|txt)?$ {

root /usr/share/nginx/html/;

#expires 3d;

}

error_page 500 502 503 504 /50x.html;

location = /50x.html {

root /usr/share/nginx/html/;

}

}

}

- redis configuration

bind *

protected-mode no

port 6379

tcp-backlog 511

timeout 0

tcp-keepalive 300

supervised no

pidfile /usr/local/redis/redis.pid

loglevel notice

logfile /usr/local/redis/logs.log

databases 16

save 900 1

save 300 10

save 60 10000

stop-writes-on-bgsave-error yes

rdbcompression yes

rdbchecksum yes

dbfilename dump.rdb

dir /usr/local/redis/data/

slave-serve-stale-data yes

repl-diskless-sync no

repl-diskless-sync-delay 5

repl-disable-tcp-nodelay no

slave-priority 100

maxmemory 500mb

maxmemory-policy noeviction

appendonly no

appendfilename "appendonly.aof"

appendfsync everysec

no-appendfsync-on-rewrite no

auto-aof-rewrite-percentage 100

auto-aof-rewrite-min-size 64mb

aof-load-truncated yes

lua-time-limit 5000

slowlog-log-slower-than 10000

slowlog-max-len 128

latency-monitor-threshold 0

notify-keyspace-events ""

hash-max-ziplist-entries 512

hash-max-ziplist-value 64

list-max-ziplist-size -2

list-compress-depth 0

set-max-intset-entries 512

zset-max-ziplist-entries 128

zset-max-ziplist-value 64

hll-sparse-max-bytes 3000

activerehashing yes

client-output-buffer-limit normal 0 0 0

client-output-buffer-limit slave 256mb 64mb 60

client-output-buffer-limit pubsub 32mb 8mb 60

hz 10

aof-rewrite-incremental-fsync yes

requirepass 123456
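The password in `requirepass` must match the one passed to `redis-cli -a` in the verification step. As a small sketch, the value could be read from the file instead of being typed twice; `redis_conf_get` is a hypothetical helper and only handles directives with a single unquoted value.

```shell
#!/bin/sh
# Print the value of a directive from a redis.conf-style file.
# $1 = file, $2 = directive name. `redis_conf_get` is a hypothetical helper.
redis_conf_get() {
    # Keep the second field of the last line whose first field is the directive;
    # commented-out lines start with "#" and therefore never match.
    awk -v key="$2" '$1 == key { value = $2 } END { if (value != "") print value }' "$1"
}

# Example, using the file location from the folder structure:
# docker exec -it [container ID] redis-cli -a "$(redis_conf_get ./redis/redis.conf requirepass)"
```

When the directive is missing, the function prints nothing, so the caller can test for an empty result.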

- postgres initialization script

CREATE DATABASE luckysheetdb;

\c luckysheetdb;

DROP SEQUENCE IF EXISTS "public"."luckysheet_id_seq";

CREATE SEQUENCE "public"."luckysheet_id_seq"

INCREMENT 1

MINVALUE 1

MAXVALUE 9999999999999

START 1

CACHE 10;

DROP TABLE IF EXISTS "public"."luckysheet";

CREATE TABLE "luckysheet" (

"id" int8 NOT NULL,

"block_id" varchar(200) COLLATE "pg_catalog"."default" NOT NULL,

"index" varchar(200) COLLATE "pg_catalog"."default" NOT NULL,

"list_id" varchar(200) COLLATE "pg_catalog"."default" NOT NULL,

"status" int2 NOT NULL,

"json_data" jsonb,

"order" int2,

"is_delete" int2

);

CREATE INDEX "block_id" ON "public"."luckysheet" USING btree (

"block_id" COLLATE "pg_catalog"."default" "pg_catalog"."text_ops" ASC NULLS LAST,

"list_id" COLLATE "pg_catalog"."default" "pg_catalog"."text_ops" ASC NULLS LAST

);

CREATE INDEX "index" ON "public"."luckysheet" USING btree (

"index" COLLATE "pg_catalog"."default" "pg_catalog"."text_ops" ASC NULLS LAST,

"list_id" COLLATE "pg_catalog"."default" "pg_catalog"."text_ops" ASC NULLS LAST

);

CREATE INDEX "is_delete" ON "public"."luckysheet" USING btree (

"is_delete" "pg_catalog"."int2_ops" ASC NULLS LAST

);

CREATE INDEX "list_id" ON "public"."luckysheet" USING btree (

"list_id" COLLATE "pg_catalog"."default" "pg_catalog"."text_ops" ASC NULLS LAST

);

CREATE INDEX "or