Top2Vec

Top2Vec learns jointly embedded topic, document and word vectors.

Install / Use

/learn @ddangelov/Top2VecREADME

Contextual Top2Vec Overview

Paper: Topic Modeling: Contextual Token Embeddings Are All You Need

The Top2Vec library now supports a new contextual version, allowing for deeper topic modeling capabilities. Contextual Top2Vec, enables the model to generate contextual token embeddings for each document, identifying multiple topics per document and even detecting topic segments within a document. This enhancement is useful for capturing a nuanced understanding of topics, especially in documents that cover multiple themes.

Key Features of Contextual Top2Vec

contextual_top2vecflag: A new parameter,contextual_top2vec, is added to the Top2Vec class. When set toTrue, the model uses contextual token embeddings. Only the following embedding models are supported:all-MiniLM-L6-v2all-mpnet-base-v2

- Topic Spans: C-Top2Vec automatically determines the number of topics and finds topic segments within documents, allowing for a more granular topic discovery.

Simple Usage Example

Here is a simple example of how to use Contextual Top2Vec:

from top2vec import Top2Vec

# Create a Contextual Top2Vec model

top2vec_model = Top2Vec(documents=documents,

ngram_vocab=True,

contextual_top2vec=True)

New Methods for Contextual Top2Vec

get_document_topic_distribution()

get_document_topic_distribution() -> np.ndarray

- Description: Retrieves the topic distribution for each document.

- Returns: A

numpy.ndarrayof shape(num_documents, num_topics). Each row represents the probability distribution of topics for a document.

get_document_topic_relevance()

get_document_topic_relevance() -> np.ndarray

- Description: Provides the relevance of each topic for each document.

- Returns: A

numpy.ndarrayof shape(num_documents, num_topics). Each row indicates the relevance scores of topics for a document.

get_document_token_topic_assignment()

get_document_token_topic_assignment() -> List[Document]

- Description: Retrieves token-level topic assignments for each document.

- Returns: A list of

Documentobjects, each containing topics with token assignments and scores for each token.

get_document_tokens()

get_document_tokens() -> List[List[str]]

- Description: Returns the tokens for each document.

- Returns: A list of lists where each sublist contains the tokens for a given document.

Usage Note

The contextual version of Top2Vec requires specific embedding models, and the new methods provide insights into the distribution, relevance, and assignment of topics at both the document and token levels, allowing for a richer understanding of the data.

Warning: Contextual Top2Vec is still in beta. You may encounter issues or unexpected behavior, and the functionality may change in future updates.

Citation

@inproceedings{angelov-inkpen-2024-topic,

title = "Topic Modeling: Contextual Token Embeddings Are All You Need",

author = "Angelov, Dimo and

Inkpen, Diana",

editor = "Al-Onaizan, Yaser and

Bansal, Mohit and

Chen, Yun-Nung",

booktitle = "Findings of the Association for Computational Linguistics: EMNLP 2024",

month = nov,

year = "2024",

address = "Miami, Florida, USA",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2024.findings-emnlp.790",

pages = "13528--13539",

abstract = "The goal of topic modeling is to find meaningful topics that capture the information present in a collection of documents. The main challenges of topic modeling are finding the optimal number of topics, labeling the topics, segmenting documents by topic, and evaluating topic model performance. Current neural approaches have tackled some of these problems but none have been able to solve all of them. We introduce a novel topic modeling approach, Contextual-Top2Vec, which uses document contextual token embeddings, it creates hierarchical topics, finds topic spans within documents and labels topics with phrases rather than just words. We propose the use of BERTScore to evaluate topic coherence and to evaluate how informative topics are of the underlying documents. Our model outperforms the current state-of-the-art models on a comprehensive set of topic model evaluation metrics.",

}

Classic Top2Vec

Top2Vec is an algorithm for topic modeling and semantic search. It automatically detects topics present in text and generates jointly embedded topic, document and word vectors. Once you train the Top2Vec model you can:

- Get number of detected topics.

- Get topics.

- Get topic sizes.

- Get hierarchichal topics.

- Search topics by keywords.

- Search documents by topic.

- Search documents by keywords.

- Find similar words.

- Find similar documents.

- Expose model with RESTful-Top2Vec

See the paper for more details on how it works.

Benefits

- Automatically finds number of topics.

- No stop word lists required.

- No need for stemming/lemmatization.

- Works on short text.

- Creates jointly embedded topic, document, and word vectors.

- Has search functions built in.

How does it work?

The assumption the algorithm makes is that many semantically similar documents are indicative of an underlying topic. The first step is to create a joint embedding of document and word vectors. Once documents and words are embedded in a vector space the goal of the algorithm is to find dense clusters of documents, then identify which words attracted those documents together. Each dense area is a topic and the words that attracted the documents to the dense area are the topic words.

The Algorithm:

1. Create jointly embedded document and word vectors using Doc2Vec or Universal Sentence Encoder or BERT Sentence Transformer.

<!----> <p align="center"> <img src="https://raw.githubusercontent.com/ddangelov/Top2Vec/master/images/doc_word_embedding.svg?sanitize=true" alt="" width=600 height="whatever"> </p>Documents will be placed close to other similar documents and close to the most distinguishing words.

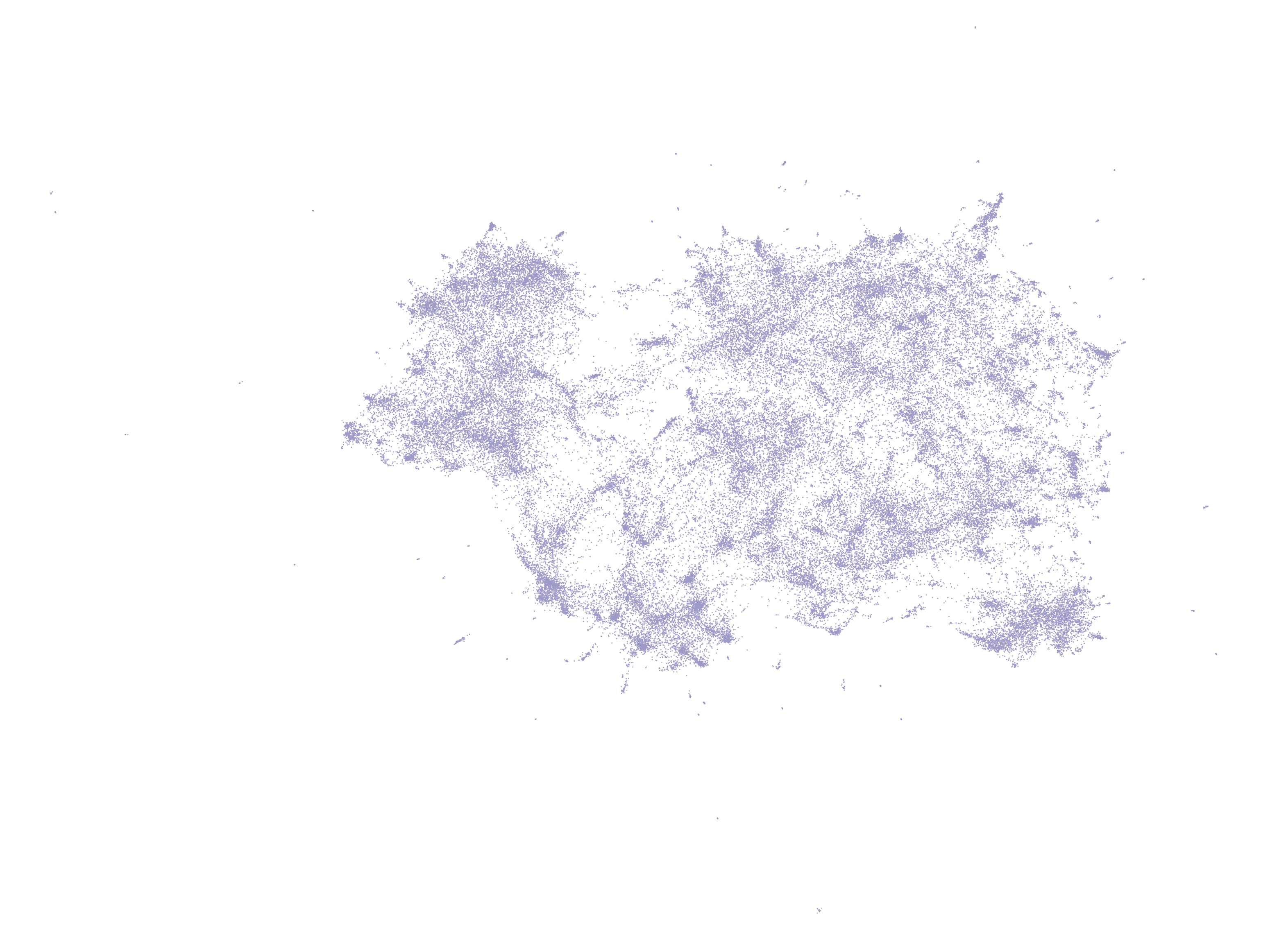

2. Create lower dimensional embedding of document vectors using UMAP.

<!----> <p align="center"> <img src="https://raw.githubusercontent.com/ddangelov/Top2Vec/master/images/umap_docs.png" alt="" width=700 height="whatever"> </p>Document vectors in high dimensional space are very sparse, dimension reduction helps for finding dense areas. Each point is a document vector.

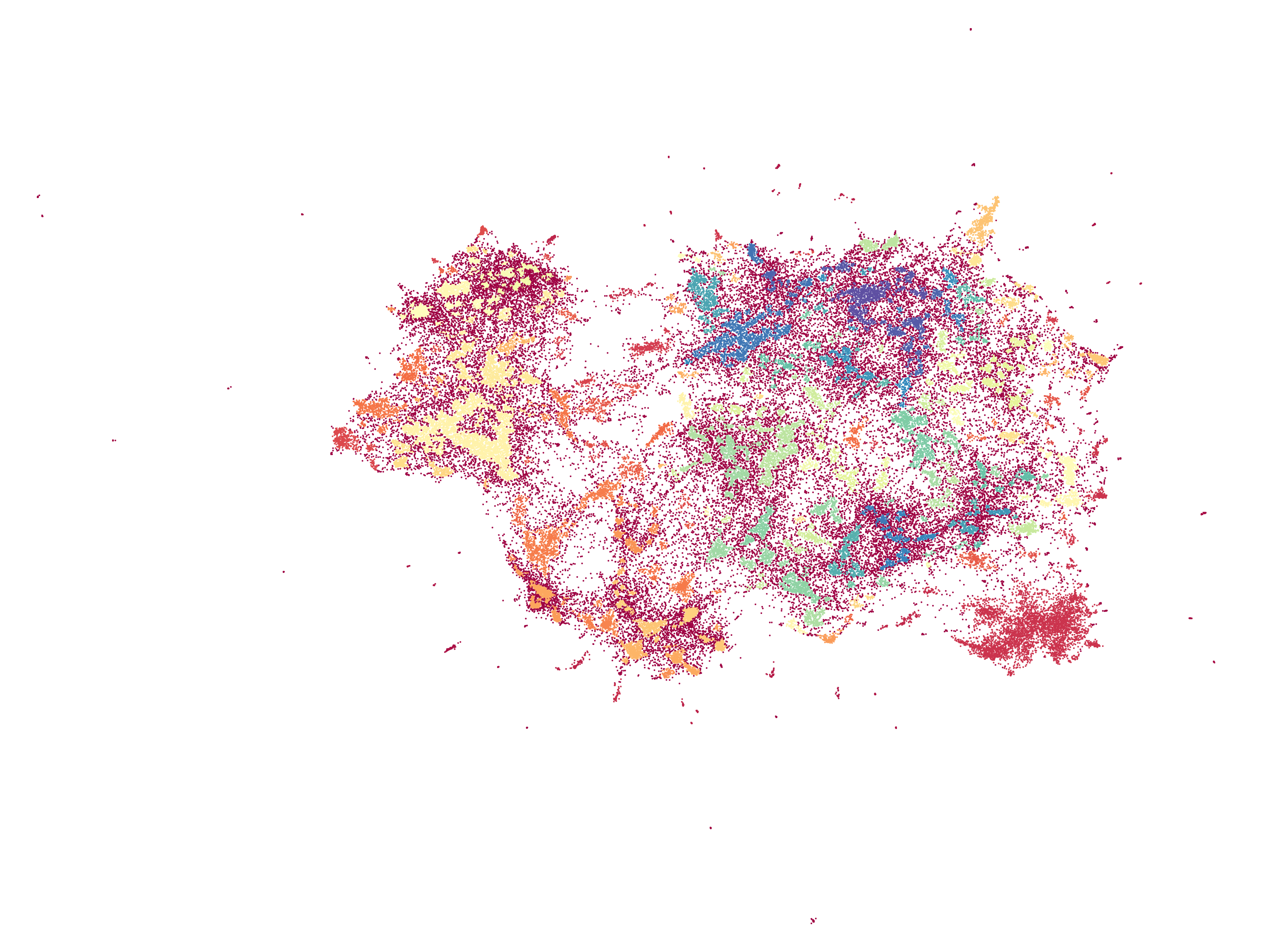

3. Find dense areas of documents using HDBSCAN.

<!----> <p align="center"> <img src="https://raw.githubusercontent.com/ddangelov/Top2Vec/master/images/hdbscan_docs.png" alt="" width=700 height="whatever"> </p>The colored areas are the dense areas of documents. Red points are outliers that do not belong to a specific cluster.

4. For each dense area calculate the centroid of document vectors in original dimension, this is the topic vector.

<!----> <p align="center"> <img src="https://raw.githubusercontent.com/ddangelov/Top2Vec/master/images/topic_vector.svg?sanitize=true" alt="" width=600 height="whatever"> </p>The red points are outlier documents and do not get used for calculating the topic vector. The purple points are the document vectors that belong to a dense area, from which the topic vector is calculated.

5. Find n-closest word vectors to the resulting topic vector.

<!----> <p align="center"> <img src="https://raw.githubusercontent.com/ddangelov/Top2Vec/master/images/topic_words.svg?sanitize=true" alt="" width=600 height="whatever"> </p>The closest word vectors in order of proximity become the topic words.

Installation

The easy way to install Top2Vec is:

pip install top2vec

To install pre-trained universal sentence encoder options:

pip install top2vec[sentence_encoders]

To install pre-trained BERT sentence transformer options:

pip install top2vec[sentence_transformers]

To install indexing options:

pip install top2vec[indexing]

Usage

from top2vec import top2vec

model = Top2Vec(documents)

Important parameters:

-

documents: Input corpus, should be a list of strings. -

speed: This parameter will determine how fast the mod