Cleanlab

Cleanlab's open-source library is the standard data-centric AI package for data quality and machine learning with messy, real-world data and labels.

Cleanlab’s open-source library helps you clean data and labels by automatically detecting issues in an ML dataset. To facilitate machine learning with messy, real-world data, this data-centric AI package uses your existing models to estimate dataset problems that can be fixed to train even better models.

<p align="center"> <img src="https://raw.githubusercontent.com/cleanlab/assets/master/cleanlab/datalab_issues.png" width=74%> </p>
<p align="center"> Examples of various issues in Cat/Dog dataset <b>automatically detected</b> by cleanlab via this code: </p>

    import cleanlab

    lab = cleanlab.Datalab(data=dataset, label="column_name_for_labels")
    # Fit any ML model, get its feature_embeddings & pred_probs for your data
    lab.find_issues(features=feature_embeddings, pred_probs=pred_probs)
    lab.report()

- Use cleanlab to automatically check any text, audio, image, or tabular dataset.

- Use cleanlab to automatically detect data issues (outliers, duplicates, label errors, etc.), train robust models, infer consensus and annotator quality for multi-annotator data, and suggest data to (re)label next (active learning).

Run cleanlab open-source

This cleanlab package runs on Python 3.10+ and supports Linux, macOS, and Windows.

- Get started here! Install via uv, pip, or conda.

- Developers who install the bleeding-edge version from source should refer to this master branch documentation.

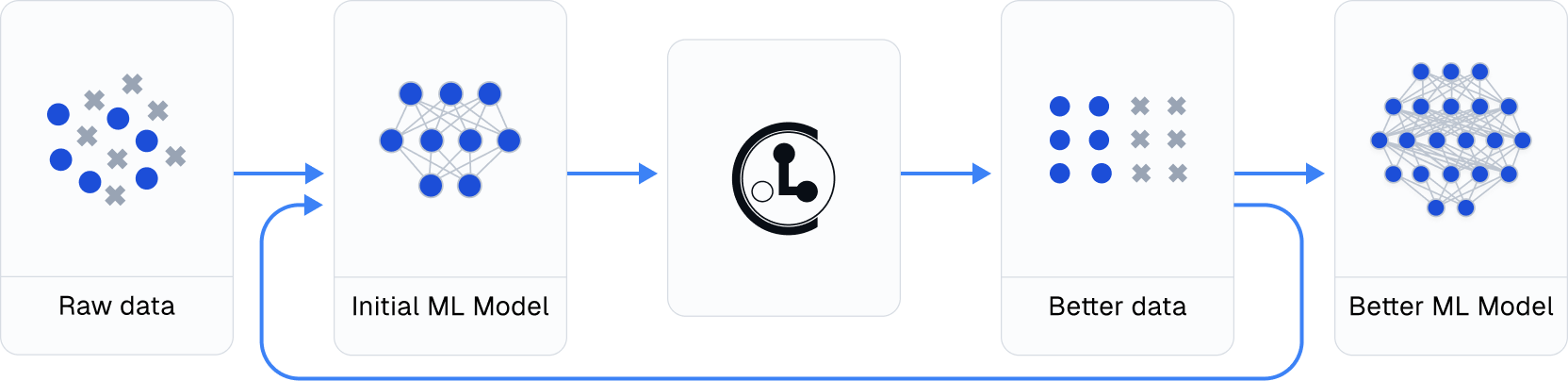

Practicing data-centric AI can look like this:

1. Train an initial ML model on the original dataset.

2. Use this model to diagnose data issues (via cleanlab methods) and improve the dataset.

3. Train the same model on the improved dataset.

4. Try various modeling techniques to further improve performance.

Most folks jump from Step 1 → 4, but you may achieve big gains without any change to your modeling code by using cleanlab! Continuously boost performance by iterating Steps 2 → 4 (and try to evaluate with cleaned data).

Use cleanlab with any model and in most ML tasks

All features of cleanlab work with any dataset and any model. Yes, any model: PyTorch, TensorFlow, Keras, JAX, HuggingFace, OpenAI, XGBoost, scikit-learn, etc.

cleanlab is useful across a wide variety of Machine Learning tasks. Specific tasks this data-centric AI package offers dedicated functionality for include:

- Binary and multi-class classification

- Multi-label classification (e.g. image/document tagging)

- Token classification (e.g. entity recognition in text)

- Regression (predicting a numerical column in a dataset)

- Image segmentation (images with per-pixel annotations)

- Object detection (images with bounding box annotations)

- Classification with data labeled by multiple annotators

- Active learning with multiple annotators (suggest which data to label or re-label to improve the model most)

- Outlier detection (identify atypical data that appears out of distribution)

For other ML tasks, cleanlab can still help you improve your dataset if appropriately applied. See our Example Notebooks and Blog.

So fresh, so cleanlab

Beyond automatically catching all sorts of issues lurking in your data, this data-centric AI package helps you deal with noisy labels and train more robust ML models. Here's an example:

    import cleanlab
    import sklearn

    # cleanlab works with **any classifier**. Yup, you can use PyTorch/TensorFlow/OpenAI/XGBoost/etc.
    # (YourFavoriteClassifier is a placeholder for any scikit-learn-compatible classifier.)
    cl = cleanlab.classification.CleanLearning(sklearn.YourFavoriteClassifier())

    # cleanlab finds data and label issues in **any dataset**... in ONE line of code!
    label_issues = cl.find_label_issues(data, labels)

    # cleanlab trains a robust version of your model that works more reliably with noisy data.
    cl.fit(data, labels)

    # cleanlab estimates the predictions you would have gotten if you had trained with *no* label issues.
    cl.predict(test_data)

    # A universal data-centric AI tool, cleanlab quantifies class-level issues and overall data quality, for any dataset.
    cleanlab.dataset.health_summary(labels, confident_joint=cl.confident_joint)

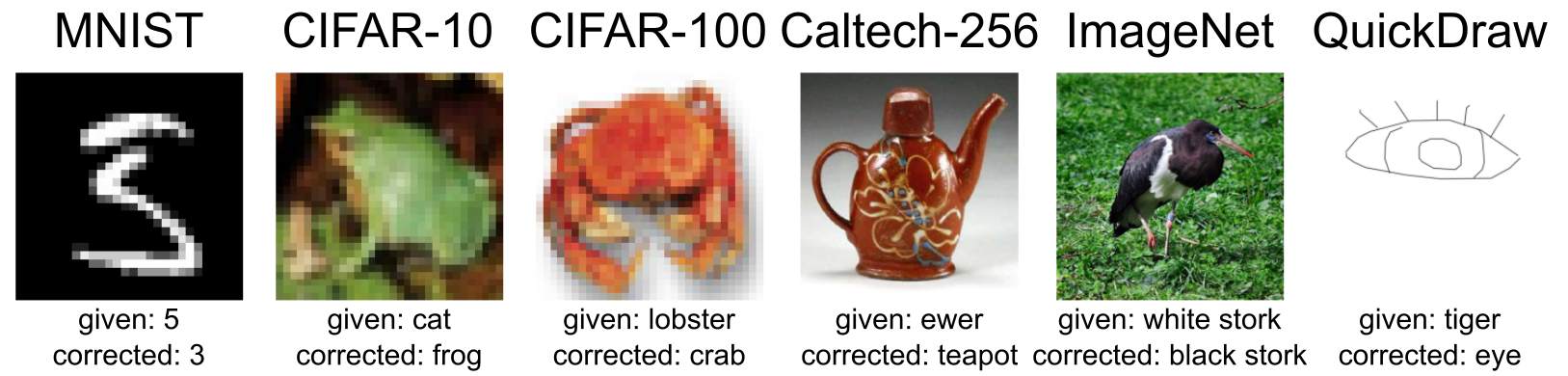

cleanlab cleans your data's labels via state-of-the-art confident learning algorithms, published in this paper and blog. See some of the datasets cleaned with cleanlab at labelerrors.com.
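To give a feel for the idea behind confident learning, the sketch below follows the thresholding step described in the Confident Learning paper: each class gets a threshold equal to the average predicted probability of that class among examples carrying that label, and an example is counted toward a class only if its predicted probability clears that class's threshold. This is a simplified NumPy illustration, not cleanlab's implementation (which adds calibration and further steps); the function name `confident_joint_sketch` and the toy data are hypothetical.

```python
import numpy as np

def confident_joint_sketch(labels, pred_probs):
    """Count examples by (given label, confidently predicted class)."""
    labels = np.asarray(labels)
    pred_probs = np.asarray(pred_probs, dtype=float)
    n_classes = pred_probs.shape[1]

    # Per-class threshold: mean predicted probability of class j among examples labeled j.
    thresholds = np.array([pred_probs[labels == j, j].mean() for j in range(n_classes)])

    # A class is a candidate for an example only if its probability clears the threshold.
    above = pred_probs >= thresholds
    confident_class = np.where(above, pred_probs, -np.inf).argmax(axis=1)
    has_confident_class = above.any(axis=1)

    # Rows: given label. Columns: confidently predicted class.
    joint = np.zeros((n_classes, n_classes), dtype=int)
    np.add.at(joint, (labels[has_confident_class], confident_class[has_confident_class]), 1)

    # Off-diagonal entries are candidate label errors.
    suspects = np.flatnonzero(has_confident_class & (confident_class != labels))
    return thresholds, joint, suspects

# Toy data: example 2 is labeled 0 but confidently predicted as class 1.
labels = [0, 0, 0, 1, 1, 1]
pred_probs = [[0.9, 0.1], [0.8, 0.2], [0.1, 0.9],
              [0.15, 0.85], [0.1, 0.9], [0.4, 0.6]]
thresholds, joint, suspects = confident_joint_sketch(labels, pred_probs)
print(joint)     # [[2 1]
                 #  [0 2]]
print(suspects)  # [2]
```

The last example clears neither threshold, so it is left out of the counts entirely. Because thresholds are set per class, a class the model is systematically less confident about is not penalized for it.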

cleanlab is:

- backed by theory -- with provable guarantees of exact label noise estimation, even with imperfect models.

- fast -- code is parallelized and scalable.

- easy to use -- one line of code to find mislabeled data, bad annotators, outliers, or train noise-robust models.

- general -- works with any dataset (text, image, tabular, audio, ...) + any model (PyTorch, OpenAI, XGBoost, ...)

Citation and related publications

cleanlab is based on peer-reviewed research. Here are relevant papers to cite if you use this package:

<details><summary><a href="https://arxiv.org/abs/1911.00068">Confident Learning (JAIR '21)</a> (<b>click to show bibtex</b>) </summary>

@article{northcutt2021confidentlearning,
  title={Confident Learning: Estimating Uncertainty in Dataset Labels},
  author={Curtis G. Northcutt and Lu Jiang and Isaac L. Chuang},
  journal={Journal of Artificial Intelligence Research (JAIR)},
  volume={70},
  pages={1373--1411},
  year={2021}
}

@inproceedings{northcutt2017rankpruning,
  author={Northcutt, Curtis G. and Wu, Tailin and Chuang, Isaac L.},
  title={Learning with Confident Examples: Rank Pruning for Robust Classification with Noisy Labels},
  booktitle={Proceedings of the Thirty-Third Conference on Uncertainty in Artificial Intelligence},
  series={UAI'17},
  year={2017},
  location={Sydney, Australia},
  numpages={10},
  url={http://auai.org/uai2017/proceedings/papers/35.pdf},
  publisher={AUAI Press}
}

@inproceedings{kuan2022labelquality,
  title={Model-agnostic label quality scoring to detect real-world label errors},
  author={Kuan, Johnson and Mueller, Jonas},
  booktitle={ICML DataPerf Workshop},
  year={2022}
}