PlaSma

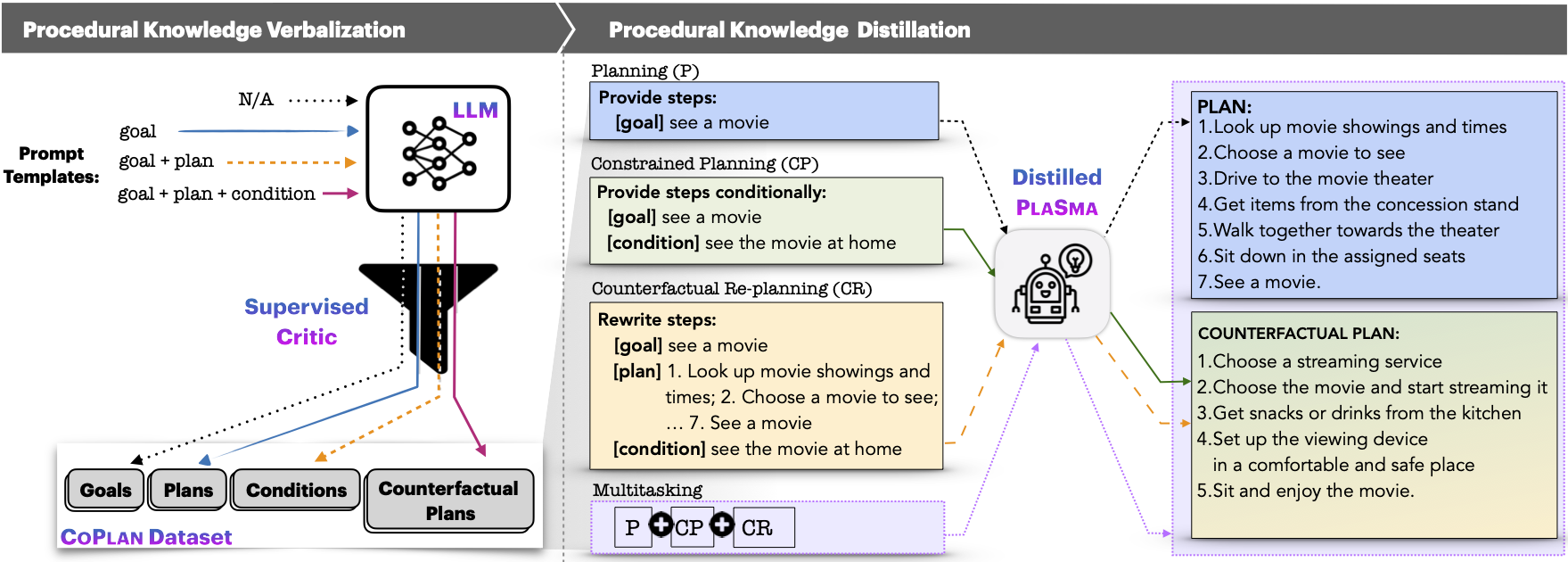

This is the repository for the paper PlaSma: Making Small Language Models Better Procedural Knowledge Models for (Counterfactual) Planning.


Authors:

Faeze Brahman, Chandra Bhagavatula, Valentina Pyatkin, Jena D. Hwang, Xiang Lorraine Li, Hirona J. Arai, Soumya Sanyal, Keisuke Sakaguchi, Xiang Ren, Yejin Choi

Installation:

```bash
conda env create -f environment.yml
conda activate plasma
pip install torch==1.8.2 torchvision==0.9.2 torchaudio==0.8.2 --extra-index-url https://download.pytorch.org/whl/lts/1.8/cu111
```
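To confirm that the pinned PyTorch LTS build installed correctly, a quick check like the following can be run inside the activated `plasma` environment. This is a minimal sketch, not part of the repository: the helper name `version_matches` is hypothetical, and the only assumption is that CUDA wheels report their version with a local suffix such as `+cu111`.

```python
# Sanity check for the pinned PyTorch build (hypothetical helper, not repository code).
import importlib.util

EXPECTED_TORCH = "1.8.2"


def version_matches(installed: str, expected: str = EXPECTED_TORCH) -> bool:
    """Return True if `installed` (e.g. "1.8.2+cu111") is the expected release.

    Wheels from the CUDA index carry a local suffix such as "+cu111",
    so only the part before "+" is compared.
    """
    return installed.split("+", 1)[0] == expected


def main() -> None:
    if importlib.util.find_spec("torch") is None:
        print("torch is not installed in this environment")
        return
    import torch

    print("torch version:", torch.__version__)
    print("matches pinned release:", version_matches(torch.__version__))
    print("CUDA available:", torch.cuda.is_available())


if __name__ == "__main__":
    main()
```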

1. CoPlan Dataset

The CoPlan dataset, along with additional details, is available in the data/CoPlan/ directory.

2. Procedural Symbolic Knowledge Distillation

For distilling goal-based planning, run:

```bash
cd distillation
bash run_distill.sh
```

For the constrained and counterfactual (re)planning tasks, format the input JSON file accordingly and modify DATA_DIR, --source_prefix (recommended for T5-based models), --text_column (input field), and --summary_column (output field) in the bash script (run_distill.sh).
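One possible way to produce such an input file is sketched below as JSON Lines (one object per line), assuming the training script accepts that format (check how run_distill.sh loads DATA_DIR). The field names `source` and `target`, the `Goal: ... Condition: ...` input template, the file name, and the example record are all hypothetical illustrations, not the CoPlan schema; whatever field names you choose are what you would pass to `--text_column` and `--summary_column`.

```python
# Minimal sketch: write (re)planning examples as JSON Lines, one object per line.
# ASSUMPTIONS (hypothetical, not the CoPlan schema): the field names below, the
# "Goal: ... Condition: ..." input template, the file name, and the example record.
import json
from pathlib import Path
from typing import Dict, List

TEXT_COLUMN = "source"     # value to pass as --text_column
SUMMARY_COLUMN = "target"  # value to pass as --summary_column


def make_record(goal: str, condition: str, steps: List[str]) -> Dict[str, str]:
    """Build one constrained-planning example: (goal, condition) -> step-numbered plan."""
    source = f"Goal: {goal} Condition: {condition}"
    target = " ".join(f"Step {i}: {s}" for i, s in enumerate(steps, start=1))
    return {TEXT_COLUMN: source, SUMMARY_COLUMN: target}


def write_jsonl(records: List[Dict[str, str]], path: Path) -> None:
    """Write each record as a single JSON object on its own line."""
    with path.open("w", encoding="utf-8") as f:
        for rec in records:
            f.write(json.dumps(rec, ensure_ascii=False) + "\n")


if __name__ == "__main__":
    example = make_record(
        goal="bake a cake",
        condition="you do not have an oven",
        steps=["Mix the batter.", "Pour it into a greased bowl.", "Steam it until set."],
    )
    out = Path("train.json")  # hypothetical file name; place it under DATA_DIR
    write_jsonl([example], out)
    print(out.read_text(encoding="utf-8"), end="")
```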

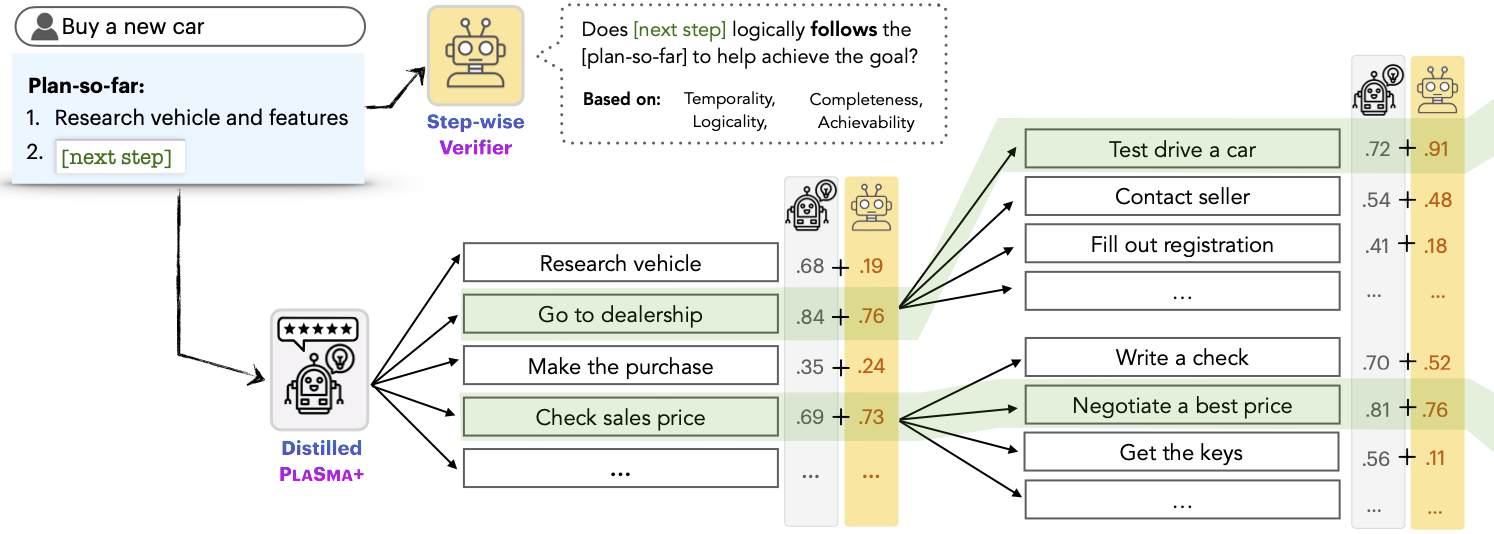

3. Verifier-guided Decoding

- Download the multitask model checkpoint from here and the verifier checkpoint from here.
- Change this to load your goals/conditions from a file (instead of interactive generation).
- Run the following command for the conditional planning task:

  ```bash
  cd verifier_guided_decoding
  python verifier_guided_generation.py --task conditional-multi --alpha 0.75 --beta 0.25 --model_path <MODEL_CKPT_PATH> --classification_model_path <VERIFIER_CKPT_PATH>
  ```
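For the second step, one possible replacement for the interactive prompt is a small reader that yields (goal, condition) pairs from a text file. The helper `read_queries` and the tab-separated format (a goal, then an optional condition) are hypothetical; adapt them to however the script actually consumes goals and conditions.

```python
# Minimal sketch (hypothetical helper, not part of verifier_guided_generation.py):
# read queries from a file instead of prompting interactively.
# Assumed format: one query per line, "goal<TAB>condition"; the condition is optional,
# and blank lines or lines starting with "#" are skipped.
import sys
from typing import Iterator, Optional, Tuple


def read_queries(path: str) -> Iterator[Tuple[str, Optional[str]]]:
    with open(path, encoding="utf-8") as f:
        for line in f:
            line = line.rstrip("\n")
            if not line.strip() or line.lstrip().startswith("#"):
                continue
            goal, _, condition = line.partition("\t")
            # An empty condition becomes None (plain goal-based planning).
            yield goal.strip(), (condition.strip() or None)


if __name__ == "__main__" and len(sys.argv) > 1:
    for goal, condition in read_queries(sys.argv[1]):
        # Replace this print with the script's own generation call for each query.
        print(f"goal={goal!r} condition={condition!r}")
```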

Run python verifier_guided_generation.py --help to see the additional parameters.
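To make the roles of `--alpha` and `--beta` more concrete, here is a toy, self-contained sketch of verifier-guided step-wise beam search: at each step a generator proposes candidate next steps, a verifier scores each one, and only the best partial plans are kept. The scoring rule `alpha * log p_gen + beta * log p_verifier`, the `<end>` marker, and the toy proposer and verifier are assumptions for illustration only; check `verifier_guided_generation.py` for how the two weights are actually combined.

```python
# Toy sketch of verifier-guided step-wise beam search (not the repository's code).
# ASSUMPTIONS: toy_propose/toy_verify stand in for the generator and verifier models,
# "<end>" is a hypothetical end-of-plan marker, and the linear scoring rule below is
# an illustrative guess at how --alpha and --beta are combined.
import math
from typing import Callable, List, Tuple

END = "<end>"
Plan = List[str]
Proposer = Callable[[str, Plan], List[Tuple[str, float]]]  # -> [(step, log p_gen)]
Verifier = Callable[[str, Plan, str], float]  # -> P(step is valid)


def step_score(logp_gen: float, p_valid: float, alpha: float, beta: float) -> float:
    """Assumed rule: weighted sum of generator and verifier log-probabilities."""
    return alpha * logp_gen + beta * math.log(max(p_valid, 1e-12))


def stepwise_beam_search(goal: str, propose: Proposer, verify: Verifier,
                         alpha: float = 0.75, beta: float = 0.25,
                         beam_size: int = 2, max_steps: int = 5) -> Plan:
    beams: List[Tuple[float, Plan]] = [(0.0, [])]  # (cumulative score, partial plan)
    finished: List[Tuple[float, Plan]] = []
    for _ in range(max_steps):
        candidates: List[Tuple[float, Plan]] = []
        for score, plan in beams:
            for step, logp in propose(goal, plan):
                total = score + step_score(logp, verify(goal, plan, step), alpha, beta)
                if step == END:
                    finished.append((total, plan))  # plan is complete
                else:
                    candidates.append((total, plan + [step]))
        if not candidates:
            break
        # Keep only the best-scoring partial plans for the next step.
        beams = sorted(candidates, key=lambda c: c[0], reverse=True)[:beam_size]
    pool = finished or beams  # prefer completed plans when any exist
    return max(pool, key=lambda c: c[0])[1]


def toy_propose(goal: str, plan: Plan) -> List[Tuple[str, float]]:
    # Hypothetical fixed candidates; a real generator would sample next steps.
    table = {
        0: [("Eat the cake.", math.log(0.6)), ("Preheat the oven.", math.log(0.4))],
        1: [("Mix the batter.", math.log(0.7)), ("Buy a new car.", math.log(0.3))],
        2: [("Bake the batter.", math.log(0.8)), (END, math.log(0.2))],
    }
    return table.get(len(plan), [(END, 0.0)])


def toy_verify(goal: str, plan: Plan, step: str) -> float:
    # Hypothetical verifier: low probability for out-of-order or off-topic steps.
    if step == END:
        return 1.0
    return 0.05 if step in {"Eat the cake.", "Buy a new car."} else 0.9


if __name__ == "__main__":
    print(stepwise_beam_search("bake a cake", toy_propose, toy_verify))
    print(stepwise_beam_search("bake a cake", toy_propose, toy_verify, alpha=1.0, beta=0.0))
```

In this toy run, the default weights return ['Preheat the oven.', 'Mix the batter.', 'Bake the batter.'], while alpha=1.0, beta=0.0 (generator score only) starts the plan with the out-of-order 'Eat the cake.'. Summing per-step scores favors shorter plans; normalizing by plan length is a common alternative.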

TODO:

- Add support and details for all tasks in verifier-guided decoding (instructions in progress)
- Provide checkpoints for all three single-task models and the multitask T5-11B-based models
- Provide a demo

Citation

If you find our paper, dataset, or code helpful, please cite us using:

```bibtex
@article{Brahman2023PlaSma,
  author = {Faeze Brahman and Chandra Bhagavatula and Valentina Pyatkin and Jena D. Hwang and Xiang Lorraine Li and Hirona J. Arai and Soumya Sanyal and Keisuke Sakaguchi and Xiang Ren and Yejin Choi},
  journal = {ArXiv preprint},
  title = {PlaSma: Making Small Language Models Better Procedural Knowledge Models for (Counterfactual) Planning},
  url = {https://arxiv.org/abs/2305.19472},
  year = {2023}
}
```
