FusionFly

FusionFly is an open-source toolkit for standardizing GNSS (Global Navigation Satellite System) and IMU (Inertial Measurement Unit) data with Factor Graph Optimization (FGO). The system provides a modern web interface for uploading, processing, visualizing, and downloading standardized navigation data.


FusionFly: A Scalable Open-Source Framework for AI-Powered Positioning Data Standardization


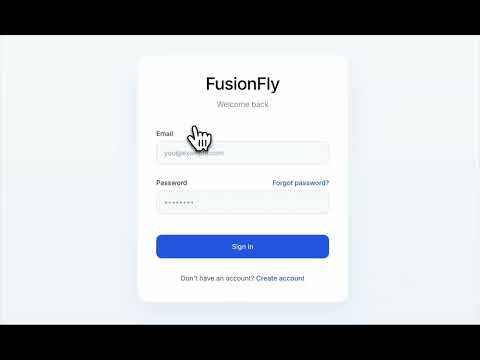

Demo

The FusionFly demo video shows the system in action. You can download it directly from the repository.

Note: After cloning the repository, you can find the demo video in the public/assets directory.

System Architecture

FusionFly follows a standard client-server architecture with a React frontend, Express.js backend, and Redis job queue for processing large files.

    ┌─────────────────┐          ┌─────────────────┐          ┌─────────────────┐
    │                 │          │                 │          │                 │
    │  React Frontend │◄────────►│  Express Backend│◄────────►│  Redis Queue    │
    │                 │   HTTP   │                 │   Jobs   │                 │
    └────────┬────────┘          └────────┬────────┘          └────────┬────────┘
             │                            │                            │
             │                            │                            │
             ▼                            ▼                            ▼
    ┌─────────────────┐          ┌─────────────────┐          ┌─────────────────┐
    │  User Interface │          │  File Storage   │          │  Data Processing│
    │  - File Upload  │          │  - Raw Files    │          │  - Conversion   │
    │  - Visualization│          │  - Processed    │          │  - FGO          │
    │  - Downloads    │          │  - Results      │          │  - Validation   │
    └─────────────────┘          └─────────────────┘          └─────────────────┘

Data Standardization Pipeline

FusionFly processes data through a standardization pipeline:

    ┌───────────┐     ┌────────────┐     ┌───────────────┐     ┌───────────┐     ┌──────────────┐     ┌─────────────┐
    │           │     │            │     │               │     │           │     │              │     │             │
    │ Detect    │────►│ Process via│────►│ AI-Assisted   │────►│ Conversion│────►│ Schema       │────►│ Schema      │
    │ Format    │     │ Standard   │     │ Parsing       │     │ Validation│     │ Conversion   │     │ Validation  │
    │           │     │ Script     │     │ (if needed)   │     │           │     │              │     │             │
    └───────────┘     └────────────┘     └───────────────┘     └───────────┘     └──────────────┘     └─────────────┘
          │                  │                   │                   │                   │
          │                  │                   │                   │                   │
          ▼                  ▼                   ▼                   ▼                   ▼
    ┌────────────────────────────────────────────────────────────────────────────────────────┐
    │                                                                                        │
    │                                Automated Feedback Loop                                 │
    │                                                                                        │
    └────────────────────────────────────────────────────────────────────────────────────────┘

- Detect Format
  - Analyzes file extension and content to determine the data format
  - Identifies the appropriate processing pathway
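The detection step can be sketched roughly as follows. This is an illustration rather than FusionFly's actual code: the function name `detect_format`, the extension table, and the content heuristics are all assumptions made for the example.

```python
from pathlib import Path

# Hypothetical extension table; the real project may recognize more formats.
EXTENSION_FORMATS = {".obs": "rinex", ".nmea": "nmea"}


def detect_format(filename: str, head: str) -> str:
    """Guess the data format from the file extension, then from the content.

    `head` is the first few lines of the file as text. Returns "rinex",
    "nmea", or "unknown" (which would route the file to AI-assisted parsing).
    """
    by_extension = EXTENSION_FORMATS.get(Path(filename).suffix.lower())
    if by_extension:
        return by_extension

    lines = [line.strip() for line in head.splitlines() if line.strip()]
    # A RINEX file labels its first header line "RINEX VERSION / TYPE".
    if lines and "RINEX VERSION / TYPE" in lines[0]:
        return "rinex"
    # NMEA sentences start with "$" and are comma-separated, e.g. "$GPGGA,...".
    if lines and all(line.startswith("$") and "," in line for line in lines):
        return "nmea"
    return "unknown"
```

Checking the extension first keeps the common case cheap; the content check only runs when the extension is missing or unfamiliar.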

- Try with standard script:
  - RINEX (.obs files)
    - Processes using georinex library
    - Converts to standardized format with timestamps
    - Uses AI-assisted parsing if standard conversion fails
  - NMEA (.nmea files)
    - Processes NMEA sentences using pynmea2
    - Handles common message types (GGA, RMC)
    - Extracts timestamps and coordinates
    - Uses AI-assisted parsing when needed
  - Unknown Formats
    - Analyzes file content to determine structure
    - Generates appropriate conversion logic
    - Extracts relevant location data
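To make the NMEA path concrete, here is a small sketch of extracting the time and coordinates from a GGA sentence. The README names pynmea2 for this step; the example below deliberately uses only the standard library so it stays self-contained, and the helper names (`nmea_checksum_ok`, `parse_gga`) are hypothetical.

```python
from datetime import time
from functools import reduce


def nmea_checksum_ok(sentence: str) -> bool:
    """Check the XOR checksum that follows '*' in an NMEA sentence."""
    body, sep, checksum = sentence.strip().lstrip("$").partition("*")
    if not sep:
        return False
    computed = reduce(lambda acc, ch: acc ^ ord(ch), body, 0)
    try:
        return computed == int(checksum[:2], 16)
    except ValueError:
        return False


def _to_degrees(value: str, hemisphere: str) -> float:
    """Convert NMEA (d)ddmm.mmmm plus a hemisphere letter to signed decimal degrees."""
    raw = float(value)
    degrees = int(raw // 100)
    minutes = raw - degrees * 100
    decimal = degrees + minutes / 60
    return -decimal if hemisphere in ("S", "W") else decimal


def parse_gga(sentence: str) -> dict:
    """Extract the UTC time of day and the coordinates from a GGA sentence."""
    if not nmea_checksum_ok(sentence):
        raise ValueError("bad or missing NMEA checksum")
    fields = sentence.strip().split("*")[0].split(",")
    if not fields[0].endswith("GGA"):
        raise ValueError("not a GGA sentence")
    hhmmss = fields[1]
    return {
        "time_utc": time(int(hhmmss[0:2]), int(hhmmss[2:4]), int(hhmmss[4:6])),
        "latitude": _to_degrees(fields[2], fields[3]),
        "longitude": _to_degrees(fields[4], fields[5]),
    }
```

A GGA sentence carries only a time of day, not a date; a full timestamp would need the date from an RMC sentence or from file metadata.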

- AI-Assisted Parsing
  - If the standard script fails, the system calls the Azure OpenAI service with a snippet of the data
  - Executes the generated conversion code automatically in the backend
  - Provides detailed error information to improve subsequent attempts

LLM Robustness Features

FusionFly implements robust validation and fallback mechanisms for each LLM step in the AI-assisted conversion pipeline:

Format Conversion (First LLM)

- Script Generation: LLM generates a complete Node.js script to process the input file

- Validation: System executes the generated script and validates that output conforms to expected JSONL format

- Fallback Mechanism:
  - When the script fails to execute or produces invalid output, the system captures specific errors
  - Error details are fed back to the LLM in a structured format for improved retry
  - The LLM is instructed to fix specific issues in its subsequent script generation attempt
  - System makes up to 3 attempts with increasingly detailed error feedback
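The retry behaviour described above might look something like the sketch below. It is an assumption-laden illustration: `generate_script` stands in for the LLM call and `run_and_validate` for script execution plus output checking, and in FusionFly the generated script is Node.js rather than a Python value.

```python
from typing import Callable, List, Optional

MAX_ATTEMPTS = 3  # the README states up to 3 attempts for this step


def convert_with_retries(
    sample: str,
    generate_script: Callable[[str, List[str]], str],
    run_and_validate: Callable[[str], Optional[str]],
    max_attempts: int = MAX_ATTEMPTS,
) -> str:
    """Request a conversion script until one validates or attempts run out.

    `generate_script(sample, errors)` (hypothetical) receives every error
    collected so far; `run_and_validate(script)` (hypothetical) returns None
    on success or an error description on failure.
    """
    errors: List[str] = []
    for attempt in range(1, max_attempts + 1):
        script = generate_script(sample, errors)
        error = run_and_validate(script)
        if error is None:
            return script
        # Accumulate feedback so each later prompt carries more detail.
        errors.append(f"attempt {attempt}: {error}")
    raise RuntimeError("conversion failed after retries: " + "; ".join(errors))
```

Passing the full error history, not just the latest error, is one simple way to give each retry increasingly detailed feedback.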

Location Extraction (Second LLM)

- Script Generation: LLM generates a specialized Node.js script to extract location data from the first-stage output

- Validation: System executes the script and validates coordinates (latitude/longitude in correct ranges), timestamps, and required field presence

- Fallback Mechanism:
  - Detects script execution errors or missing/invalid location data in extraction output
  - Provides field-specific guidance to the LLM about conversion issues
  - Includes examples of proper formatting in error feedback
  - Retries, progressively reinforcing the prompt with feedback from earlier attempts
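As an illustration of the validation rules listed for this step, the sketch below checks required fields, coordinate ranges, and timestamp parseability, and returns field-specific messages that could be fed back to the LLM. The field names and the ISO 8601 timestamp format are assumptions; the README does not specify them.

```python
from datetime import datetime

# Assumed field names; the actual intermediate format may differ.
REQUIRED_FIELDS = ("timestamp", "latitude", "longitude")


def _in_range(value, low: float, high: float) -> bool:
    """True for a real number (not bool, not NaN) within [low, high]."""
    is_number = isinstance(value, (int, float)) and not isinstance(value, bool)
    return is_number and low <= value <= high


def validate_location(record: dict) -> list:
    """Return field-specific problems; an empty list means the record is valid."""
    problems = [f"missing field: {name}" for name in REQUIRED_FIELDS if name not in record]
    if problems:
        return problems

    if not _in_range(record["latitude"], -90, 90):
        problems.append(f"latitude must be a number in [-90, 90], got {record['latitude']!r}")
    if not _in_range(record["longitude"], -180, 180):
        problems.append(f"longitude must be a number in [-180, 180], got {record['longitude']!r}")
    try:
        # Assumes ISO 8601 text; "Z" is rewritten for older Python versions.
        datetime.fromisoformat(str(record["timestamp"]).replace("Z", "+00:00"))
    except ValueError:
        problems.append(f"timestamp is not ISO 8601: {record['timestamp']!r}")
    return problems
```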

Schema Conversion (Third LLM) - Enhanced Two-Submodule Approach

- Submodule 1: Direct Schema Conversion
  - Takes a small sample of data from Module 2 (location data)
  - Directly converts it to the target schema format without generating code
  - Validates the converted sample against schema requirements
  - Produces a set of high-quality examples to guide the second submodule
  - Prompt focuses on direct data transformation, not code generation
- Submodule 2: Transformation Script Generation
  - Takes both raw data and the converted examples from Submodule 1
  - Generates a Node.js transformation script based on the pattern shown in the examples
  - Script is executed to process the entire dataset
  - Output is validated against schema requirements
  - Prompt includes both input data and properly formatted examples for pattern matching
- Enhanced Validation and Feedback Flow:
  - If Submodule 1 fails, the entire process fails (since examples are required for Submodule 2)
  - Detailed error messages identify specific schema non-compliance issues
  - Output from both submodules is validated against target schema
  - Retries with improved instructions based on specific validation failures
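The control flow of the two submodules can be sketched as below. Everything here is a stand-in: `convert_sample` represents the direct LLM conversion, `generate_transform` represents script generation (a Python callable replaces the Node.js script the README describes), `is_schema_valid` represents the schema check, and retries are omitted for brevity.

```python
from typing import Callable, List


def run_schema_conversion(
    records: List[dict],
    convert_sample: Callable[[List[dict]], List[dict]],
    generate_transform: Callable[[List[dict], List[dict]], Callable[[dict], dict]],
    is_schema_valid: Callable[[dict], bool],
    sample_size: int = 3,
) -> List[dict]:
    """Two-stage schema conversion: examples first, then a full transformation."""
    sample = records[:sample_size]

    # Submodule 1: convert a small sample directly, with no code generation.
    examples = convert_sample(sample)
    if not examples or not all(is_schema_valid(e) for e in examples):
        # Without valid examples Submodule 2 has nothing to learn from.
        raise ValueError("Submodule 1 failed: no schema-valid examples")

    # Submodule 2: derive a transformation from sample plus examples,
    # then apply it to the entire dataset.
    transform = generate_transform(sample, examples)
    converted = [transform(record) for record in records]

    invalid_rows = [i for i, row in enumerate(converted) if not is_schema_valid(row)]
    if invalid_rows:
        raise ValueError(f"Submodule 2 output failed schema validation at rows {invalid_rows}")
    return converted
```

Validating the examples before generating any script keeps a bad sample conversion from propagating into the transformation applied to the whole dataset.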

Third LLM Pipeline Architecture

The detailed architecture of the enhanced third LLM module is illustrated below:

This diagram shows how the system first attempts direct schema conversion with Submodule 1, then uses both the original data and converted examples to generate a transformation script with Submodule 2. The script is then executed to process the entire dataset, with validation at each step ensuring compliance with the pre-defined schema.

The complete data transformation pipeline across all three modules is shown here:

This end-to-end view shows how each module contributes to the overall transformation process, from format conversion through location extraction to the final schema conversion.

Unit Testing

- Comprehensive test suite covers each LLM step with:
  - Happy path tests with valid inputs and expected outputs
  - Error handling tests with malformed inputs
  - Edge case tests (empty files, missing fields, etc.)
  - API error simulation and recovery tests
  - Validation and fallback mechanism tests
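A test in the "API error simulation and recovery" category could look like the sketch below. The project's real tests are not shown in this README, so the helper `call_with_recovery` and the `client.complete` method are hypothetical names used only to demonstrate the pattern with `unittest.mock`.

```python
import unittest
from unittest import mock


def call_with_recovery(client, prompt, retries=2):
    """Call `client.complete(prompt)`, retrying when it raises ConnectionError."""
    last_error = None
    for _ in range(retries + 1):
        try:
            return client.complete(prompt)
        except ConnectionError as exc:
            last_error = exc
    raise last_error


class RecoveryTests(unittest.TestCase):
    def test_happy_path(self):
        client = mock.Mock()
        client.complete.return_value = "script"
        self.assertEqual(call_with_recovery(client, "p"), "script")

    def test_recovers_after_api_error(self):
        client = mock.Mock()
        # First call raises, second call succeeds.
        client.complete.side_effect = [ConnectionError("timeout"), "script"]
        self.assertEqual(call_with_recovery(client, "p"), "script")
        self.assertEqual(client.complete.call_count, 2)

    def test_gives_up_when_api_stays_down(self):
        client = mock.Mock()
        client.complete.side_effect = ConnectionError("down")
        with self.assertRaises(ConnectionError):
            call_with_recovery(client, "p", retries=1)
```

Saved to a file, these tests run with `python -m unittest`.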

Error Feedback Loop

- The entire pipeline implements a closed feedback loop where:
  - Each step validates the output of the previous step
  - Validation errors are captured in detail
  - Structured error information guides the next LLM attempt
  - System learns from previous failures to improve conversion quality
  - Detailed logs are maintained for debugging and improvement
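One possible shape for the "structured error information" mentioned above is sketched here. The record fields and the prompt wording are assumptions for illustration; the README does not document the actual structure.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class ValidationFailure:
    """One captured validation error (hypothetical structure)."""
    step: str              # pipeline step that rejected the output
    attempt: int           # which attempt produced the failure
    error_type: str        # short machine-readable category
    message: str           # human-readable detail
    sample_line: str = ""  # offending input or output line, if known


def build_feedback_prompt(failures) -> str:
    """Render collected failures as a JSON block for the next LLM prompt."""
    payload = [asdict(failure) for failure in failures]
    return (
        "The previous attempts failed validation. Fix every issue listed below.\n"
        + json.dumps(payload, indent=2)
    )
```

Keeping failures as structured records means the same data can be written to the logs and rendered into the retry prompt.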

This multi-layer validation and fallback approach ensures robust processing even with challenging or unusual data formats, significantly improving the reliability of the AI-assisted conversion pipeline.

- Conversion Validation
  - Runs comprehensive unit tests on the converted data
  - Validates correct JSONL formatting and data integrity
  - If validation fails, feeds the error data to the LLM
  - Regenerates conversion scripts up to 10 times until correctly converted to JSONL
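A JSONL format check of the kind described here can be quite small. The sketch below is an assumption about what such a check might involve, not the project's validator; in particular, requiring every line to be a JSON object is a choice made for this example.

```python
import json


def validate_jsonl(text: str) -> list:
    """Return (line_number, message) pairs; an empty list means valid JSONL."""
    if not text.strip():
        return [(0, "file is empty")]

    errors = []
    for number, line in enumerate(text.splitlines(), start=1):
        if not line.strip():
            errors.append((number, "blank line"))
            continue
        try:
            value = json.loads(line)
        except json.JSONDecodeError as exc:
            errors.append((number, f"invalid JSON: {exc.msg}"))
            continue
        # Assumption: each record is expected to be a JSON object.
        if not isinstance(value, dict):
            errors.append((number, "line is not a JSON object"))
    return errors
```

Reporting line numbers with each message gives the LLM a concrete location to fix when the errors are fed back into the next attempt.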

- Schema Conversion
  - After converting
