WebAR.rocks.train

Object detection, tracking, and 6DoF pose estimation in the web browser, integrated training environment to train your own neural network models 🚀

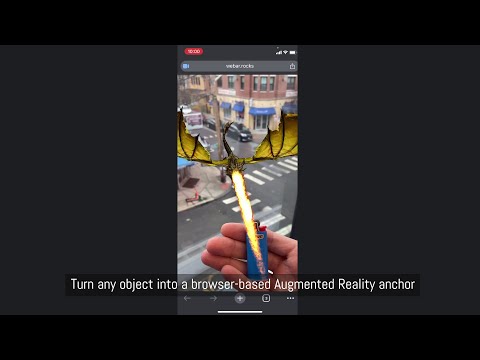

Better than a long explanation, a short video:

Do you have a lighter? Let the dragon light it on our live demo.

Introduction

This repository hosts a full Integrated Training Environment. You can train your own neural network models directly in the web browser and interactively monitor the training process.

The main use case is object detection, tracking, and pose estimation: you can train a neural network model to detect and track a real-world object (for example, a lighter) with 6 degrees of freedom (6DoF). Once trained, this model can be used with WebAR.rocks.object to create a web-based augmented reality application. For instance, you could have a genie pop out of the lighter in augmented reality, as if it were a magic lamp.

This software is fully standalone. It does not require any third-party machine learning framework (Google TensorFlow, Torch, Keras, etc.).

Features

- Neural network training for:
  - Object detection, tracking, and 6DoF pose estimation (models can be used in WebAR.rocks.object)
  - Image classification (for debugging and testing purposes)
- Live monitoring of the training process through a web interface
- Export trained neural networks as JSON files
- Web augmented reality object detection and tracking features:
  - 6DoF pose estimation
  - Multiple objects can be detected simultaneously (as soon as one object is detected, the system switches to tracking mode and only that object is tracked)
  - Optional use of the device IMU to improve the precision of the rotation

Architecture

- /css/: Styles for the integrated training environment
- /images/: Images used in the integrated training environment
- /libs/: Third-party libraries
- /src/: Source code
  - /glsl/: Shader code
  - /js/: JavaScript code
    - /preprocessing/: Image preprocessing modules
    - /problemProviders/: Problem providers (e.g., object detection or image classification)
    - /share/: Contains the GL debugger
    - /trainer/: Main directory for the integrated training environment
      - /core/: Core object model (trainer, neural network, and subcomponents)
      - /imageProcessing/: Image transformations for pre- and post-problem provider execution
      - /UI/: User interface components
      - /webgl/: Wrappers for WebGL objects
- /trainingData/: Data used for neural network training
  - /images/: Image-based datasets
  - /models3D/: 3D object datasets
- /trainingScripts/: Scripts used to train neural networks within the integrated environment
- /tutorials/: Tutorials to help you get started
- /players/: Web application demos using trained neural networks
  - /webar-rocks-object-boilerplate/: React + Vite web application boilerplate with a lighter
  - /webar-rocks-object-lighter-dragon/: React + Vite web application displaying a dragon lighting a lighter
- /trainer.html: The integrated training environment

Philosophy

In the Web Browser

Training neural networks on GPUs in the browser may seem unconventional, but it has several advantages:

- No installation required (no CUDA or similar dependencies). Maintenance is minimal, and cross-platform compatibility is guaranteed.

- The training runs on the GPU, so performance is not hindered by JavaScript’s overhead.

- It uses WebGL, which is well-optimized for gaming GPUs.

- A built-in interface helps you monitor and visualize the training process, including textures (all data is stored in textures).

Using Synthetic Data

Object detection and tracking are performed using synthetic data generated in real time with THREE.js from 3D models. This approach offers significant benefits:

- Acquiring a 3D model is typically faster and cheaper than collecting thousands of annotated images.

- It reduces the risk of overfitting on real-world images.

- Lower memory requirements make it feasible to train on mid-range gaming GPUs.

- It eliminates manual annotation errors.

- Changes to training conditions (e.g., lighting) require only script modifications rather than collecting a new dataset.

Only on the GPU

What happens on the GPU stays on the GPU.

WebGL provides direct access to powerful GPU capabilities in the browser, while JavaScript itself can be relatively slow. Data transfers between the GPU and CPU are also slow, so it is crucial to keep as much work as possible on the GPU.

A single training iteration for object detection and tracking includes:

- Generating a sample image from the 3D model using THREE.js

- Performing a forward pass (feedforward) through the neural network

- Performing a backward pass (backpropagation) to compute gradients

- Updating network parameters (weights, biases)

All these steps run entirely on the GPU. This requires:

- A shared WebGL context between THREE.js and the training engine (requiring some modifications to THREE.js).

- No CPU readback in most training iterations. A sequence of such iterations is called a minibatch.

With a mid-range gaming GPU, we can run about 500 training cycles per second. GPU usage should be close to 100%.
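To make the structure of a minibatch concrete, here is a minimal sketch of the loop described above. It is not the WebAR.rocks.train API: the `gpu` object and all of its methods are hypothetical stand-ins that only count calls, and reading the loss once at the end of each minibatch is an assumption made for illustration.

```javascript
// Illustrative sketch only: `gpu` and its methods are hypothetical stand-ins,
// not the WebAR.rocks.train API. Each method represents work that stays on the GPU.
function createFakeGpu() {
  const counters = { iterations: 0, readbacks: 0 };
  return {
    counters,
    renderSample() {}, // draw a synthetic sample (THREE.js in the real tool)
    forward() {}, // feedforward pass
    backward() {}, // backpropagation
    updateParameters() { counters.iterations += 1; }, // apply the gradients
    readLoss() { // the only GPU -> CPU transfer in this sketch
      counters.readbacks += 1;
      return 1 / counters.iterations; // placeholder value, not a real loss
    },
  };
}

function train(gpu, minibatchCount, minibatchSize) {
  const losses = [];
  for (let b = 0; b < minibatchCount; b += 1) {
    // One minibatch: a run of iterations with no CPU readback.
    for (let i = 0; i < minibatchSize; i += 1) {
      gpu.renderSample();
      gpu.forward();
      gpu.backward();
      gpu.updateParameters();
    }
    // Assumed here: a single readback per minibatch, used for monitoring.
    losses.push(gpu.readLoss());
  }
  return losses;
}

const gpu = createFakeGpu();
const losses = train(gpu, 3, 100);
console.log(gpu.counters); // { iterations: 300, readbacks: 3 }
```

The point of the sketch is the ratio: 300 iterations cost only 3 GPU-to-CPU transfers, so a larger minibatch means fewer of the slow transfers per training cycle.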

Workflow

- Open the integrated training environment web application.
- Open and edit the training script. This script will:
  - Instantiate a neural network with a specific architecture.
  - Instantiate a problem using a problem provider (e.g., object detection or image classification) and load relevant data.
  - Instantiate a trainer to train the neural network to solve the problem.
  - Start the training.
- Run the training script. The trainer will load the data and train the neural network, with progress displayed in real time.
- Once the training is done (the learning curve flattens), save the neural network as a JSON file.
- Use the neural network with WebAR.rocks.object or with a boilerplate provided in the /players directory.
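The workflow stops training when the learning curve flattens, which is normally judged by eye in the monitoring interface. If you want a numeric rule of thumb, the following sketch shows one possible test. The helper `hasFlattened`, its window size, and its tolerance are all assumptions for illustration and are not part of WebAR.rocks.train.

```javascript
// Hypothetical helper, not part of WebAR.rocks.train: returns true when the mean
// loss over the last `windowSize` values improved by less than `tolerance`
// (relative) compared with the window just before it.
function hasFlattened(lossHistory, windowSize = 50, tolerance = 0.01) {
  if (lossHistory.length < 2 * windowSize) return false; // not enough data yet
  const mean = (values) => values.reduce((sum, v) => sum + v, 0) / values.length;
  const recent = mean(lossHistory.slice(-windowSize));
  const previous = mean(lossHistory.slice(-2 * windowSize, -windowSize));
  if (previous === 0) return true; // loss is already zero
  return (previous - recent) / Math.abs(previous) < tolerance;
}

// Usage with made-up numbers: a curve that is still decaying, then a flat one.
const decaying = Array.from({ length: 100 }, (_, i) => 1 / (i + 1));
const flat = Array.from({ length: 100 }, () => 0.05);
console.log(hasFlattened(decaying)); // false
console.log(hasFlattened(flat)); // true
```

Note that this test also returns true when the loss gets worse, so it flags a curve that has stopped improving, not one that has converged to a good value.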

WebAR.rocks Services

Contact us at contact__at__webar.rocks if you need:

- Support

- Neural network training

- Optimization or consulting for your projects

- Integration support or development services for applications using trained models

- Neural network quantization (reducing model size by a factor of ~10)

- Consultation on your workflow

- Deployment of this software to AWS EC2 or building an optimized training setup

We have already trained networks to detect and track:

- A coin

- Cans (Sprite, Coke, Red Bull)

- Food items (Ferrero Rocher, Cheerios box)

- Stone sculptures (a bird bath)

- Miscellaneous objects (keyboard, cup)

Documentation

We strongly recommend following this tutorial before going further: WebAR Application Tutorial: A Dragon Lights a Lighter.

Quick Start

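- Clone this GitHub repository.
- Start a static web server from the repository root (assuming Python is installed): `python -m http.server` with Python 3, or `python -m SimpleHTTPServer` with Python 2. Both listen on port 8000 by default.
- Open the following URL in your web browser: http://localhost:8000/trainer.html?code=ImageMNIST_0.js.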

You should see the integrated training interface with the script located at /trainingScripts/ImageMNIST_0.js. Click RUN in the CONTROLS section to start the training. This script trains a model to classify the MNIST dataset (handwritten digits). The model learns to distinguish digits 0 through 9.

Switch to the LIVE GRAPH VIEW tab (under MONITORING) to watch the training progress in real time. The expected output digit is shown in red, and the bars on the chart represent the network’s current predictions.
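The Quick Start URL selects the training script through the `code` query parameter. The short sketch below builds such a URL with the standard `URL` API. It assumes that `code` always names a file in /trainingScripts/, as the ImageMNIST_0.js example suggests; the helper name `trainerUrl` is made up for this example.

```javascript
// Assumption: the `code` query parameter names a file in /trainingScripts/,
// as the ImageMNIST_0.js example suggests. `trainerUrl` is a hypothetical helper.
function trainerUrl(scriptName, origin = 'http://localhost:8000') {
  const url = new URL('/trainer.html', origin);
  url.searchParams.set('code', scriptName);
  return url.toString();
}

console.log(trainerUrl('ImageMNIST_0.js'));
// http://localhost:8000/trainer.html?code=ImageMNIST_0.js
```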

Compatibility

WebAR.rocks.train uses WebGL1 and requires the following widely supported extensions: OES_texture_float, OES_texture_float_linear, and WEBGL_color_buffer_float.

You can check your available WebGL extensions at webglreport.com.
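You can also check for these extensions from code. The sketch below uses the standard WebGL call `getExtension`, which returns null when an extension is unavailable. The extension names are the registered ones (the linear filtering extension is registered as OES_texture_float_linear). The function name `findMissingExtensions` is an illustrative choice, not part of this repository.

```javascript
// Registered names of the WebGL1 extensions required by the training environment.
const REQUIRED_EXTENSIONS = [
  'OES_texture_float',
  'OES_texture_float_linear',
  'WEBGL_color_buffer_float',
];

// Returns the required extensions that `gl` does not provide.
// getExtension() returns null when an extension is unavailable.
function findMissingExtensions(gl) {
  return REQUIRED_EXTENSIONS.filter((name) => gl.getExtension(name) === null);
}

// In a browser, query a real WebGL1 context. This part is skipped elsewhere.
if (typeof document !== 'undefined') {
  const gl = document.createElement('canvas').getContext('webgl');
  if (gl === null) {
    console.log('WebGL1 is not available');
  } else {
    const missing = findMissingExtensions(gl);
    console.log(missing.length === 0
      ? 'All required extensions are available'
      : `Missing extensions: ${missing.join(', ')}`);
  }
}
```

Paste it into the browser console on the machine you plan to train on. An empty result means the three extensions are present; it does not say anything about whether the GPU is fast enough for training.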

The interface is designed for desktop use. We strongly recommend using a dedicated desktop computer with an NVIDIA GeForce GPU. Laptops or mobile devices are generally not suited for continuous, intensive GPU usage.

The players and other WebAR.rocks libraries, which use trained neural network models for real-time inference only, do not have these strict compatibility constraints. They implement alternative execution paths (using WebGL2, or handling floating-point linear texture filtering with specific shaders) to work on any device, including low-end mobile devices.

The Training Script

A