ShinkaEvolve

ShinkaEvolve: Towards Open-Ended and Sample-Efficient Program Evolution 🧬


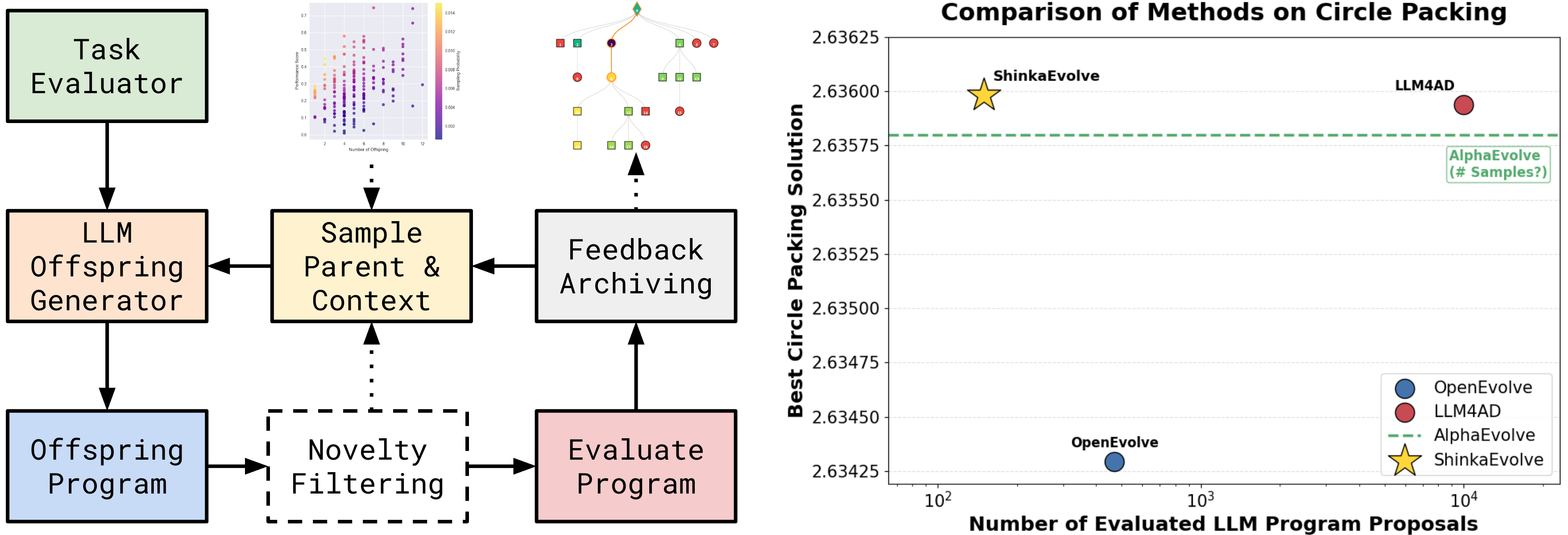

shinka is a framework that combines Large Language Models (LLMs) with evolutionary algorithms to drive scientific discovery. By leveraging the creative capabilities of LLMs and the optimization power of evolutionary search, shinka enables automated exploration and improvement of scientific code. The system is inspired by the AI Scientist, AlphaEvolve and the Darwin Goedel Machine: It maintains a population of programs that evolve over generations, with an ensemble of LLMs acting as intelligent mutation operators that suggest code improvements.
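Here is a toy, self-contained sketch of LLM-driven program evolution that makes this loop concrete. It is not shinka's implementation. `mock_llm_mutate` is a hypothetical stand-in for a call to an LLM, and `evaluate` is a toy verifier that scores a one-line program. The selection scheme (take the better of two random parents, keep the top `pop_size` programs) is an assumption chosen for brevity.

```python
import random
from typing import Tuple


def mock_llm_mutate(program: str, rng: random.Random) -> str:
    """Hypothetical stand-in for an LLM mutation: perturbs the constant in 'x = <value>'."""
    value = float(program.split("=")[1])
    return f"x = {value + rng.uniform(-1.0, 1.0):.3f}"


def evaluate(program: str) -> float:
    """Toy verifier: execute the program and reward x being close to 3.0 (higher is better)."""
    namespace: dict = {}
    exec(program, namespace)  # acceptable for this toy; sandbox real LLM-generated code
    return -abs(namespace["x"] - 3.0)


def evolve(seed_program: str, generations: int = 50, pop_size: int = 8,
           seed: int = 0) -> Tuple[float, str]:
    rng = random.Random(seed)
    population = [(evaluate(seed_program), seed_program)]  # (score, program) pairs
    for _ in range(generations):
        # Parent selection: the better of (up to) two randomly drawn programs.
        parent = max(rng.sample(population, k=min(2, len(population))))[1]
        child = mock_llm_mutate(parent, rng)
        population.append((evaluate(child), child))
        # Elitist truncation: keep only the pop_size best programs.
        population = sorted(population, reverse=True)[:pop_size]
    return population[0]


best_score, best_program = evolve("x = 0.0")
print(best_score, best_program)
```

In a real system, most of the complexity lives in the two pieces stubbed out here: the LLM prompt that produces the mutation and the task-specific verifier.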

Mar 2026 Update: Refactored API and unified runner ShinkaEvolveRunner (replacing EvolutionRunner and AsyncEvolutionRunner). You can now install shinka via PyPI and uv: pip install shinka-evolve.

Feb 2026 Update: Added agent skills for using shinka within coding agents (Claude Code, Codex, etc.) for new task generation (shinka-setup), converting your repo (shinka-convert), evolution (shinka-run), and result inspection (shinka-inspect). Install them via npx:

```bash
npx skills add SakanaAI/ShinkaEvolve --skill '*' -a claude-code -a codex -y
```

Jan 2026 Update: ShinkaEvolve was accepted at ICLR 2026 and we released an update with new features.

Nov 2025 Update: Rob gave several public talks about our ShinkaEvolve effort (Official, AutoML Seminar).

Oct 2025 Update: ShinkaEvolve supported Team Unagi in winning the ICFP 2025 Programming Contest.

The framework supports parallel evaluation of candidates locally or on a Slurm cluster. It maintains an archive of successful solutions, enabling knowledge transfer between different evolutionary islands. shinka is particularly well-suited for scientific tasks where there is a verifier available and the goal is to optimize performance metrics while maintaining code correctness and readability.
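The toy sketch below shows how islands and a shared archive can interact. Everything here is an assumption for illustration. The mutate-and-verify step is a random placeholder, and each island simply keeps its top `keep` programs. Every `migrate_every` generations, migration copies the archive's best entry into every island. shinka's own island and migration behavior appears to be set through its database configuration (e.g. the island_small preset) and may work quite differently.

```python
import random
from typing import List, Tuple

Entry = Tuple[float, str]  # (score, program id)


def run_islands(num_islands: int = 3, generations: int = 30, migrate_every: int = 10,
                keep: int = 5, seed: int = 0) -> List[Entry]:
    rng = random.Random(seed)
    islands: List[List[Entry]] = [[(0.0, f"island{i}-seed")] for i in range(num_islands)]
    archive: List[Entry] = []  # global archive of the best solutions found so far
    for gen in range(1, generations + 1):
        for i, island in enumerate(islands):
            score, prog = max(island)
            # Placeholder for "mutate with an LLM, then run the verifier".
            child = (score + rng.uniform(-0.5, 1.0), f"{prog}>g{gen}")
            islands[i] = sorted(island + [child], reverse=True)[:keep]
        # The archive collects each island's current best, deduplicated.
        archive = sorted(set(archive) | {max(isl) for isl in islands}, reverse=True)[:keep]
        if gen % migrate_every == 0:
            # Migration: seed every island with the archive's best program so a
            # discovery on one island can be built upon by the others.
            elite = archive[0]
            islands = [sorted(set(isl) | {elite}, reverse=True)[:keep] for isl in islands]
    return archive


archive = run_islands()
print(archive[0])
```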

Documentation 📝

| Guide | Description | What You'll Learn |
|-------|-------------|-------------------|
| 🚀 First steps | Installation, basic usage, and examples | Setup, first evolution run, core concepts |
| 📓 Tutorial | Interactive walkthrough of Shinka | Hands-on examples, config, best practices |
| ⚙️ Config | Comprehensive config reference | All config options & advanced features |
| 🎨 WebUI | Interactive visualization and monitoring | Real-time tracking, result analysis, debugging |
| ⚡ Async Evo | High-perf. throughput (5-10x speedup) | Concurrent processing, proposal/eval tuning |
| 🧠 Local Models | How to use local LLMs and embeddings with Shinka | Running open-source models & integration tips |
| 🤖 Agentic Use | Run Shinka with Claude/Codex skills | CLI install, skill placement, setup/run workflows |

Installation & Quick Start 🚀

```bash
# Install from PyPI
pip install shinka-evolve

# Or with uv
uv pip install shinka-evolve

# Run your first evolution experiment
shinka_launch variant=circle_packing_example
```

The distribution name is shinka-evolve, but the Python package is still imported as shinka (import shinka).

shinka_launch still supports the original shorthand group overrides:

```bash
shinka_launch variant=circle_packing_example
shinka_launch task=novelty_generator database=island_small
```

Built-in Hydra presets ship inside the package under shinka/configs/. To add your own presets from a PyPI install without cloning the repo, place them in your own config directory and pass --config-dir:

```bash
mkdir -p ~/my-shinka-configs/variant
$EDITOR ~/my-shinka-configs/variant/my_variant.yaml
shinka_launch --config-dir ~/my-shinka-configs variant=my_variant
```
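To script several runs (for example, a sweep over variants), you can build the same command line in Python and pass it to `subprocess`. This is a hedged sketch. `build_launch_cmd` and `launch` are hypothetical helpers, and they use only the `--config-dir` flag and the `group=option` overrides shown above.

```python
import os
import shlex
import subprocess
from typing import Dict, List, Optional


def build_launch_cmd(overrides: Dict[str, str], config_dir: Optional[str] = None) -> List[str]:
    """Assemble a shinka_launch argv from Hydra-style group=option overrides."""
    cmd = ["shinka_launch"]
    if config_dir is not None:
        # No shell is involved, so expand '~' explicitly.
        cmd += ["--config-dir", os.path.expanduser(config_dir)]
    cmd += [f"{group}={option}" for group, option in overrides.items()]
    return cmd


def launch(cmd: List[str]) -> subprocess.CompletedProcess:
    """Run the command, raising CalledProcessError on a non-zero exit code."""
    return subprocess.run(cmd, check=True)


cmd = build_launch_cmd({"variant": "my_variant"}, config_dir="~/my-shinka-configs")
print(shlex.join(cmd))  # inspect the command before calling launch(cmd)
```

Because the argument list goes straight to `subprocess` without a shell, `~` is not expanded automatically. That is why the helper calls `os.path.expanduser`.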

For development installs from source:

```bash
git clone https://github.com/SakanaAI/ShinkaEvolve
cd ShinkaEvolve
uv venv --python 3.11
source .venv/bin/activate  # On Windows: .venv\Scripts\activate
uv pip install -e .
```

For detailed installation instructions and usage examples, see the Getting Started Guide.

Examples 📖

| Example | Description | Environment Setup |
|---------|-------------|-------------------|
| ⭕ Circle Packing | Optimize circle packing to maximize radii. | LocalJobConfig |
| 🎮 Game 2048 | Optimize a policy for the Game of 2048. | LocalJobConfig |
| ∑ Julia Prime Counting | Optimize a Julia solver for prime-count queries. | LocalJobConfig |
| ✨ Novelty Generator | Generate creative, surprising outputs (e.g., ASCII art). | LocalJobConfig |
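shinka depends on a verifier, so it can help to see what one might check for a task like Circle Packing. The sketch below assumes three rules: circles must lie inside the unit square, they must not overlap, and a packing scores the sum of its radii. `score_packing` is a hypothetical function, and the repository's actual evaluate.py may define the task and its interface differently.

```python
import math
from typing import List, Tuple

Circle = Tuple[float, float, float]  # (x, y, r)


def score_packing(circles: List[Circle], tol: float = 1e-9) -> float:
    """Return the sum of radii if the packing is valid, otherwise raise ValueError."""
    for x, y, r in circles:
        if r <= 0:
            raise ValueError(f"non-positive radius {r}")
        # Each circle must lie fully inside the unit square [0, 1] x [0, 1].
        if x - r < -tol or x + r > 1 + tol or y - r < -tol or y + r > 1 + tol:
            raise ValueError(f"circle ({x}, {y}, {r}) leaves the unit square")
    for i in range(len(circles)):
        for j in range(i + 1, len(circles)):
            (x1, y1, r1), (x2, y2, r2) = circles[i], circles[j]
            # Circles may touch but must not overlap.
            if math.hypot(x1 - x2, y1 - y2) < r1 + r2 - tol:
                raise ValueError(f"circles {i} and {j} overlap")
    return sum(r for _, _, r in circles)


# Four equal circles in a 2x2 grid, each with radius 0.25.
grid = [(0.25, 0.25, 0.25), (0.75, 0.25, 0.25), (0.25, 0.75, 0.25), (0.75, 0.75, 0.25)]
print(score_packing(grid))  # 1.0
```

Rejecting invalid candidates outright, rather than scoring them low, keeps the evolutionary search from drifting toward packings that only look good numerically.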

shinka Run with Python API 🐍

For the simplest setup with default settings, you only need to specify the evaluation program and the initial program:

```python
from shinka.core import ShinkaEvolveRunner, EvolutionConfig
from shinka.database import DatabaseConfig
from shinka.launch import LocalJobConfig, SlurmCondaJobConfig, SlurmDockerJobConfig

# Minimal - only specify what's required
job_conf = LocalJobConfig(eval_program_path="evaluate.py")

# Or source a uv/venv environment per job:
# job_conf = LocalJobConfig(
#     eval_program_path="evaluate.py",
#     activate_script=".venv/bin/activate",
# )

# Or run evaluations on SLURM:
# job_conf = SlurmCondaJobConfig(
#     eval_program_path="evaluate.py",
#     partition="gpu",
#     time="01:00:00",
#     cpus=1,
#     gpus=1,
#     mem="8G",
#     conda_env="shinka",
# )

# Or run evaluations in a Docker container on SLURM:
# job_conf = SlurmDockerJobConfig(
#     eval_program_path="evaluate.py",
#     image="ubuntu:latest",
#     partition="gpu",
#     time="01:00:00",
#     cpus=1,
#     gpus=1,
#     mem="8G",
# )

db_conf = DatabaseConfig()
evo_conf = EvolutionConfig(init_program_path="initial.py")

runner = ShinkaEvolveRunner(
    evo_config=evo_conf,
    job_config=job_conf,
    db_config=db_conf,
    max_evaluation_jobs=2,
    max_proposal_jobs=3,  # modest oversubscription when proposal generation is slower than eval
    max_db_workers=4,
)
runner.run()
```
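The split between max_proposal_jobs and max_evaluation_jobs can be thought of as two bounded stages. The asyncio sketch below shows the general pattern; it is an assumption, not shinka's scheduler. `propose` and `evaluate` are placeholder coroutines, and two semaphores cap how many proposals and evaluations run at once. Allowing more proposals than evaluation slots keeps evaluators busy when LLM calls are the slower stage.

```python
import asyncio
import random
from typing import List, Tuple


async def propose(i: int, rng: random.Random) -> str:
    await asyncio.sleep(rng.uniform(0.01, 0.03))  # placeholder for a slow LLM proposal call
    return f"candidate-{i}"


async def evaluate(candidate: str) -> float:
    await asyncio.sleep(0.01)  # placeholder for running the evaluation program
    return float(len(candidate))


async def run(num_candidates: int = 10, max_proposal_jobs: int = 3,
              max_evaluation_jobs: int = 2, seed: int = 0) -> Tuple[List[Tuple[str, float]], int]:
    rng = random.Random(seed)
    proposal_slots = asyncio.Semaphore(max_proposal_jobs)
    eval_slots = asyncio.Semaphore(max_evaluation_jobs)
    in_flight = {"eval": 0, "peak_eval": 0}

    async def pipeline(i: int) -> Tuple[str, float]:
        async with proposal_slots:  # at most max_proposal_jobs proposals at once
            candidate = await propose(i, rng)
        # The proposal slot is released here, so the next proposal can start
        # while this candidate waits for (or occupies) an evaluation slot.
        async with eval_slots:  # at most max_evaluation_jobs evaluations at once
            in_flight["eval"] += 1
            in_flight["peak_eval"] = max(in_flight["peak_eval"], in_flight["eval"])
            score = await evaluate(candidate)
            in_flight["eval"] -= 1
        return candidate, score

    results = await asyncio.gather(*(pipeline(i) for i in range(num_candidates)))
    return list(results), in_flight["peak_eval"]


results, peak_evals = asyncio.run(run())
print(len(results), peak_evals)
```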

Class defaults below come from shinka/core/config.py (EvolutionConfig). Hydra presets and CLI overrides can replace these values. Concurrency lives on ShinkaEvolveRunner via max_evaluation_jobs, max_proposal_jobs, and max_db_workers.

| Key | Default Value | Type | Explanation |
|-----|---------------|------|-------------|
| task_sys_msg | "You are an expert optimization and algorithm design assistant. Improve the program while preserving correctness and immutable regions." | Optional[str] | System message describing the optimization task |
| patch_types | ["diff", "full", "cross"] | List[str] | Types of patches to generate: "diff", "full", "cross" |
| patch_type_probs | [0.6, 0.3, 0.1] | List[float] | Probabilities for each patch type |
| num_generations | 50 | int | Number of evolution generations to run |
| max_patch_resamples | 3 | int | Max times to resample a patch if it fails |
| max_patch_attempts | 1 | `int
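The patch settings in the table above roughly combine like this: a patch type is drawn according to patch_type_probs, and a failed patch triggers a resample. The sketch makes two assumptions. First, max_patch_resamples counts resamples after the first attempt. Second, a failed patch is signalled by `None`. `propose_patch` and its `try_patch` callback are hypothetical, not shinka's API.

```python
import random
from typing import Callable, Optional

PATCH_TYPES = ["diff", "full", "cross"]
PATCH_TYPE_PROBS = [0.6, 0.3, 0.1]  # defaults from the table above


def propose_patch(try_patch: Callable[[str], Optional[str]], rng: random.Random,
                  max_patch_resamples: int = 3) -> Optional[str]:
    """Sample a patch type by probability; on failure, resample up to max_patch_resamples times."""
    for _ in range(1 + max_patch_resamples):  # first attempt plus resamples
        patch_type = rng.choices(PATCH_TYPES, weights=PATCH_TYPE_PROBS, k=1)[0]
        patch = try_patch(patch_type)  # hypothetical callback: None means the patch failed
        if patch is not None:
            return patch
    return None


rng = random.Random(0)
print(propose_patch(lambda patch_type: f"<{patch_type} patch>", rng))
```

Drawing a fresh patch type on each resample, instead of retrying the same type, gives a failing strategy (for example, a diff that no longer applies cleanly) a chance to fall back to a full rewrite.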
