DiffusionSfM

[CVPR 2025] "DiffusionSfM: Predicting Structure and Motion via Ray Origin and Endpoint Diffusion" official implementation.

Install / Use

/learn @QitaoZhao/DiffusionSfMREADME

DiffusionSfM

This repository contains the official implementation for DiffusionSfM: Predicting Structure and Motion via Ray Origin and Endpoint Diffusion. The paper has been accepted to CVPR 2025.

Project Page | arXiv | <a href='https://huggingface.co/spaces/qitaoz/DiffusionSfM'><img src='https://img.shields.io/badge/%F0%9F%A4%97%20Hugging%20Face-Demo-blue'></a>

News

- 2025.05.04: Initial code release.

Introduction

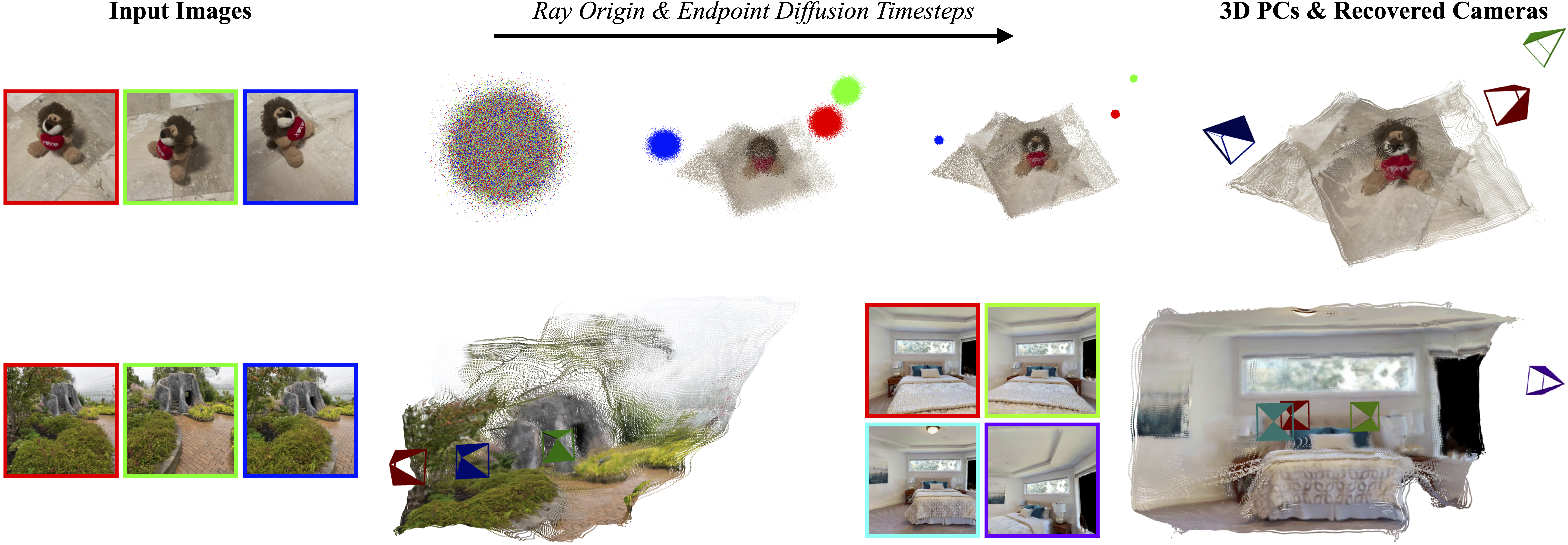

tl;dr Given a set of multi-view images, DiffusionSfM represents scene geometry and cameras as pixel-wise ray origins and endpoints in a global frame. It learns a denoising diffusion model to infer these elements directly from multi-view inputs.

Install

- Clone DiffusionSfM:

git clone https://github.com/QitaoZhao/DiffusionSfM.git

cd DiffusionSfM

- Create the environment and install packages:

conda create -n diffusionsfm python=3.9

conda activate diffusionsfm

# enable nvcc

conda install -c conda-forge cudatoolkit-dev

### torch

# CUDA 11.7

conda install pytorch==2.0.1 torchvision==0.15.2 torchaudio==2.0.2 pytorch-cuda=11.7 -c pytorch -c nvidia

pip install -r requirements.txt

### pytorch3D

# CUDA 11.7

conda install https://anaconda.org/pytorch3d/pytorch3d/0.7.7/download/linux-64/pytorch3d-0.7.7-py39_cu117_pyt201.tar.bz2

# xformers

conda install xformers -c xformers

Tested on:

- Springdale Linux 8.6 with torch 2.0.1 & CUDA 11.7 on A6000 GPUs.

Note: If you encounter the error

ImportError: .../libtorch_cpu.so: undefined symbol: iJIT_NotifyEvent

when importing PyTorch, refer to this related issue or try installing Intel MKL explicitly with:

conda install mkl==2024.0

Run Demo

(1) Try the Online Demo

Check out our interactive demo on Hugging Face:

(2) Run the Gradio Demo Locally

Download the model weights manually from Hugging Face:

from huggingface_hub import hf_hub_download

filepath = hf_hub_download(repo_id="qitaoz/DiffusionSfM", filename="qitaoz/DiffusionSfM")

or Google Drive:

gdown https://drive.google.com/uc\?id\=15nCFDLtpS0GC3QUo81RPmAxB4EQv7S8q

unzip models.zip

Next run the demo like so:

# first-time running may take a longer time

python gradio_app.py

You can run our model in two ways:

- Upload Images — Upload your own multi-view images above.

- Use a Preprocessed Example — Select one of the pre-collected examples below.

Training

Set up wandb:

wandb login

See docs/train.md for more detailed instructions on training.

Evaluation

See docs/eval.md for instructions on how to run evaluation code.

Acknowledgments

This project builds upon RayDiffusion. Amy Lin and Jason Y. Zhang developed the initial codebase during the early stages of this project.

Cite DiffusionSfM

If you find this code helpful, please cite:

@inproceedings{zhao2025diffusionsfm,

title={DiffusionSfM: Predicting Structure and Motion via Ray Origin and Endpoint Diffusion},

author={Qitao Zhao and Amy Lin and Jeff Tan and Jason Y. Zhang and Deva Ramanan and Shubham Tulsiani},

booktitle={CVPR},

year={2025}

}

Related Skills

node-connect

335.4kDiagnose OpenClaw node connection and pairing failures for Android, iOS, and macOS companion apps

frontend-design

82.5kCreate distinctive, production-grade frontend interfaces with high design quality. Use this skill when the user asks to build web components, pages, or applications. Generates creative, polished code that avoids generic AI aesthetics.

openai-whisper-api

335.4kTranscribe audio via OpenAI Audio Transcriptions API (Whisper).

commit-push-pr

82.5kCommit, push, and open a PR