VILA

VILA is a family of state-of-the-art vision language models (VLMs) for diverse multimodal AI tasks across the edge, data center, and cloud.


VILA: Optimized Vision Language Models

arXiv / Demo / Models / Subscribe

💡 Introduction

VILA is a family of open VLMs designed to optimize both efficiency and accuracy for video understanding and multi-image understanding.

💡 News

- [2025/7] We release OmniVinci, a state-of-the-art visual-audio joint understanding omni-modal LLM built upon the VILA codebase!

- [2025/7] We release Long-RL, which supports RL training on VILA/LongVILA/NVILA models with long videos.

- [2025/6] We release PS3 and VILA-HD. PS3 is a vision encoder that scales up vision pre-training to 4K resolution. VILA-HD is VILA with PS3 as the vision encoder and shows superior performance and efficiency in understanding high-resolution detail-rich images.

- [2025/1] As of January 6, 2025, VILA is part of the new Cosmos Nemotron vision language models.

- [2024/12] We release NVILA (a.k.a. VILA2.0), which explores the full-stack efficiency of multi-modal design, achieving cheaper training, faster deployment and better performance.

- [2024/12] We release LongVILA, which supports long video understanding with a long-context VLM (more than 1M context length) and a multi-modal sequence parallel system.

- [2024/10] VILA-M3, a SOTA medical VLM fine-tuned on VILA1.5, is released! VILA-M3 significantly outperforms Llava-Med, is on par with Med-Gemini, and is fully open-sourced!

- [2024/10] We release VILA-U: a Unified foundation model that integrates Video, Image, Language understanding and generation.

- [2024/07] VILA1.5 also ranks 1st among OSS models on the MLVU test leaderboard.

- [2024/06] VILA1.5 is now the best open-source VLM on the MMMU leaderboard and the Video-MME leaderboard!

- [2024/05] We release VILA-1.5, which offers video understanding capability. VILA-1.5 comes with four model sizes: 3B/8B/13B/40B.

- [2024/05] We release AWQ-quantized 4-bit VILA-1.5 models. VILA-1.5 is efficiently deployable on diverse NVIDIA GPUs (A100, 4090, 4070 Laptop, Orin, Orin Nano) through the TinyChat and TensorRT-LLM backends.

- [2024/03] VILA has been accepted by CVPR 2024!

- [2024/02] We release AWQ-quantized 4-bit VILA models, deployable on Jetson Orin and laptops through TinyChat and TinyChatEngine.

- [2024/02] VILA is released. We propose interleaved image-text pretraining that enables multi-image VLMs. VILA comes with impressive in-context learning capabilities. We open-source everything, including training code, evaluation code, datasets, and model checkpoints.

- [2023/12] The paper is on arXiv!

Performance

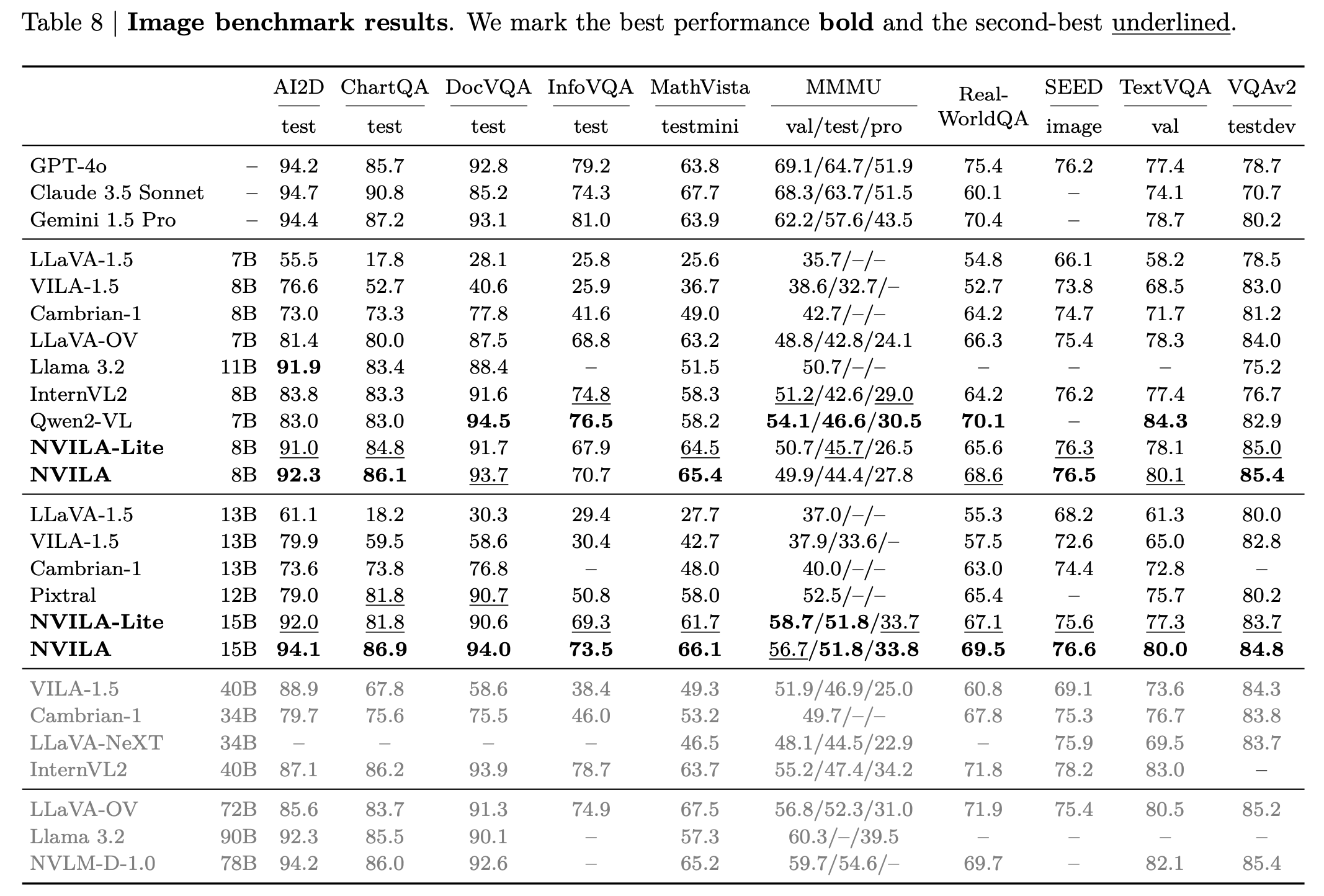

Image Benchmarks

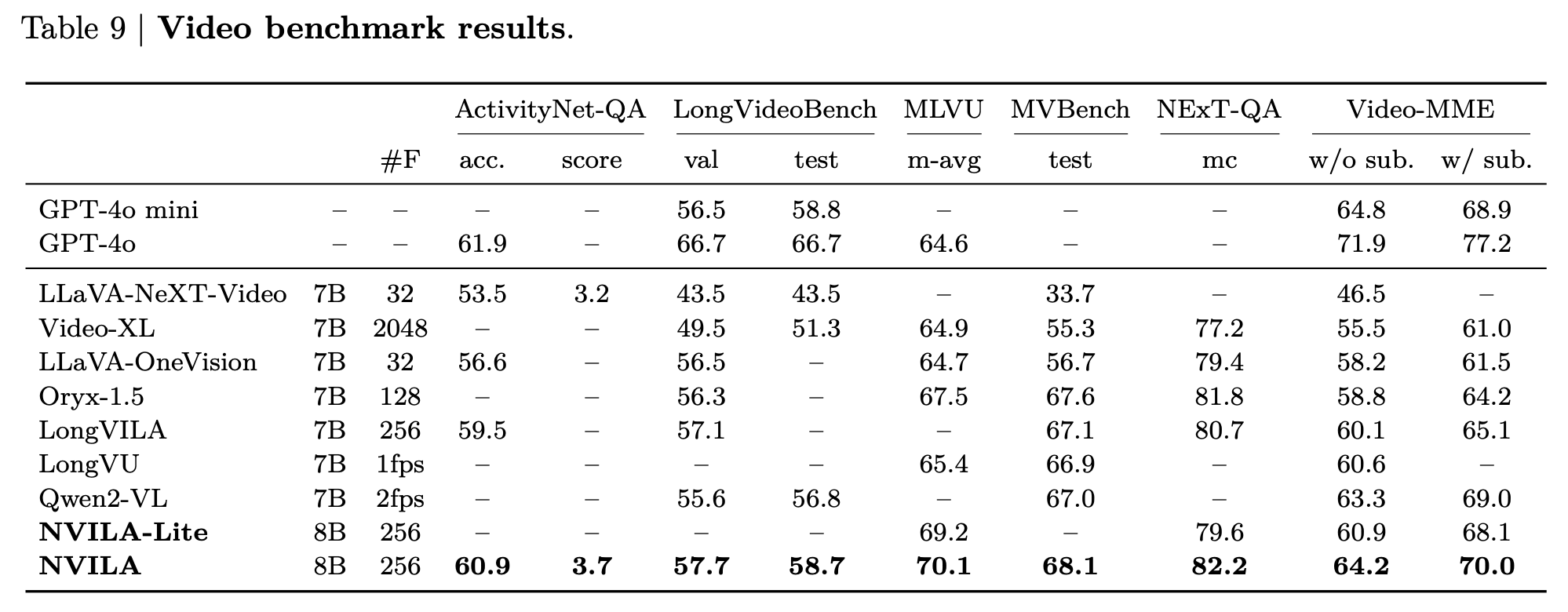

Video Benchmarks

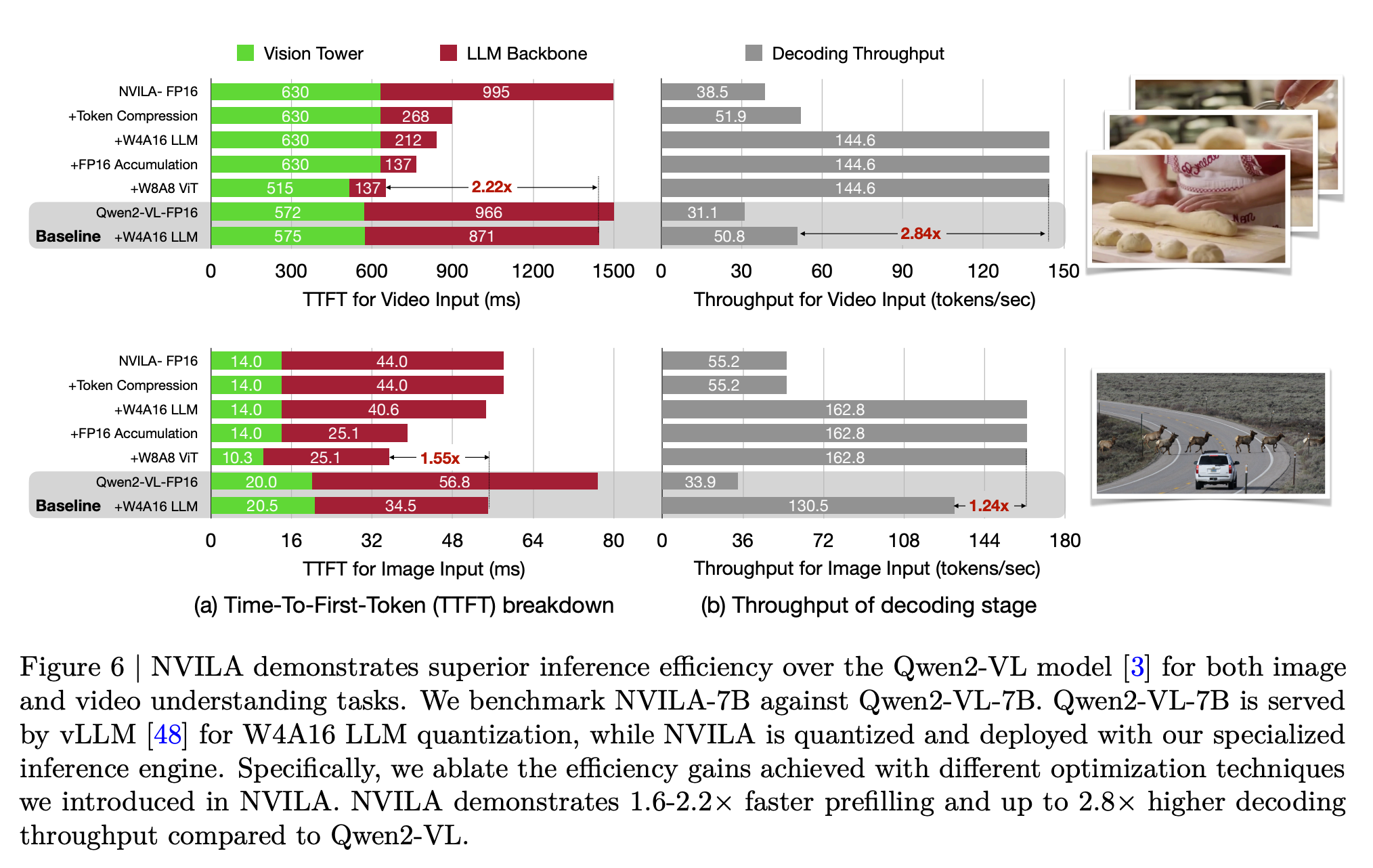

Efficient Deployments

<sup>NOTE: Measured using the TinyChat backend at batch size = 1.</sup>

Inference Performance

Decoding Throughput ( Token/sec )

| Model                     | A100  | 4090  | Orin |
| ------------------------- | ----- | ----- | ---- |
| NVILA-3B-Baseline         | 140.6 | 190.5 | 42.7 |
| NVILA-3B-TinyChat         | 184.3 | 230.5 | 45.0 |
| NVILA-Lite-3B-Baseline    | 142.3 | 190.0 | 41.3 |
| NVILA-Lite-3B-TinyChat    | 186.0 | 233.9 | 44.9 |
| NVILA-8B-Baseline         | 82.1  | 61.9  | 11.6 |
| NVILA-8B-TinyChat         | 186.8 | 162.7 | 28.1 |
| NVILA-Lite-8B-Baseline    | 84.0  | 62.0  | 11.6 |
| NVILA-Lite-8B-TinyChat    | 181.8 | 167.5 | 32.8 |
| NVILA-Video-8B-Baseline * | 73.2  | 58.4  | 10.9 |
| NVILA-Video-8B-TinyChat * | 151.8 | 145.0 | 32.3 |
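As a rough aid for reading the throughput table, the sketch below computes the TinyChat-over-baseline decoding speedup per model and device. The numbers are copied from the table; the dictionary layout and the `speedups` helper are illustrative choices and not part of VILA.

```python
# Decoding throughput (tokens/sec) copied from the table above.
# Layout: model -> backend -> values in DEVICES order.
DEVICES = ("A100", "4090", "Orin")
THROUGHPUT = {
    "NVILA-3B": {"Baseline": (140.6, 190.5, 42.7), "TinyChat": (184.3, 230.5, 45.0)},
    "NVILA-Lite-3B": {"Baseline": (142.3, 190.0, 41.3), "TinyChat": (186.0, 233.9, 44.9)},
    "NVILA-8B": {"Baseline": (82.1, 61.9, 11.6), "TinyChat": (186.8, 162.7, 28.1)},
    "NVILA-Lite-8B": {"Baseline": (84.0, 62.0, 11.6), "TinyChat": (181.8, 167.5, 32.8)},
    "NVILA-Video-8B": {"Baseline": (73.2, 58.4, 10.9), "TinyChat": (151.8, 145.0, 32.3)},
}


def speedups(table):
    """Return model -> {device: TinyChat throughput / baseline throughput}."""
    result = {}
    for model, rows in table.items():
        result[model] = {
            device: tiny / base
            for device, base, tiny in zip(DEVICES, rows["Baseline"], rows["TinyChat"])
        }
    return result


if __name__ == "__main__":
    for model, per_device in speedups(THROUGHPUT).items():
        cells = ", ".join(f"{dev}: {ratio:.2f}x" for dev, ratio in per_device.items())
        print(f"{model:<16} {cells}")
```

With these numbers the 8B models benefit most: NVILA-8B on the 4090, for example, goes from 61.9 to 162.7 tokens/sec, roughly a 2.6x gain, while the 3B models on Orin gain well under 10%.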

TTFT (Time-To-First-Token) ( Sec )

| Model                     | A100   | 4090   | Orin   |
| ------------------------- | ------ | ------ | ------ |
| NVILA-3B-Baseline         | 0.0329 | 0.0269 | 0.1173 |
| NVILA-3B-TinyChat         | 0.0260 | 0.0188 | 0.1359 |
| NVILA-Lite-3B-Baseline    | 0.0318 | 0.0274 | 0.1195 |
| NVILA-Lite-3B-TinyChat    | 0.0314 | 0.0191 | 0.1241 |
| NVILA-8B-Baseline         | 0.0434 | 0.0573 | 0.4222 |
| NVILA-8B-TinyChat         | 0.0452 | 0.0356 | 0.2748 |
| NVILA-Lite-8B-Baseline    | 0.0446 | 0.0458 | 0.2507 |
| NVILA-Lite-8B-TinyChat    | 0.0391 | 0.0297 | 0.2097 |
| NVILA-Video-8B-Baseline * | 0.7190 | 0.8840 | 5.8236 |
| NVILA-Video-8B-TinyChat * | 0.6692 | 0.6815 | 5.8425 |

<sup>NOTE: Measured using the TinyChat backend at batch size = 1, dynamic_s2 disabled, and num_video_frames = 64. We use a W4A16 LLM and a W8A8 Vision Tower for TinyChat; the baseline precision is FP16.</sup>

<sup>*: Measured with the video captioning task. All other rows are measured with the image captioning task.</sup>
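The two tables can be combined into a first-order estimate of single-request latency: time to first token plus the remaining tokens divided by decoding throughput. This is a minimal sketch of that common approximation, not a measurement from the VILA authors. It assumes a constant decoding rate, and the output length of 128 tokens is a hypothetical value chosen for illustration.

```python
def estimated_latency(ttft_sec, tokens_per_sec, num_new_tokens):
    """First-order estimate: time to first token plus steady-state decoding time.

    Assumes a constant decoding rate, which real runs only approximate.
    """
    if tokens_per_sec <= 0:
        raise ValueError("tokens_per_sec must be positive")
    # The first token is already available at TTFT, so only the rest are decoded.
    remaining = max(num_new_tokens - 1, 0)
    return ttft_sec + remaining / tokens_per_sec


# NVILA-8B on Orin, values copied from the two tables above.
# 128 generated tokens is a hypothetical output length.
baseline = estimated_latency(0.4222, 11.6, num_new_tokens=128)
tinychat = estimated_latency(0.2748, 28.1, num_new_tokens=128)

if __name__ == "__main__":
    print(f"baseline: {baseline:.2f} s, TinyChat: {tinychat:.2f} s")
```

Under these assumptions, NVILA-8B on Orin comes out at roughly 11.4 s for the FP16 baseline and 4.8 s for TinyChat, so for longer outputs the decoding throughput dominates the TTFT difference.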

VILA Examples

Video captioning

https://github.com/Efficient-Large-Model/VILA/assets/156256291/c9520943-2478-4f97-bc95-121d625018a6

Prompt: Elaborate on the visual and narrative elements of the video in detail.

Caption: The video shows a person's hands working on a white surface. They are folding a piece of fabric with a checkered pattern in shades of blue and white. The fabric is being folded into a smaller, more compact shape. The person's fingernails are painted red, and they are wearing a black and red garment. There are also a ruler and a pencil on the surface, suggesting that measurements and precision are involved in the process.

In context learning

<img src="demo_images/demo_img_1.png" height="239"> <img src="demo_images/demo_img_2.png" height="250">

Multi-image reasoning

<img src="demo_images/demo_img_3.png" height="193">

VILA on Jetson Orin

https://github.com/Efficient-Large-Model/VILA/assets/7783214/6079374c-0787-4bc4-b9c6-e1524b4c9dc4

VILA on RTX 4090

https://github.com/Efficient-Large-Model/VILA/assets/7783214/80c47742-e873-4080-ad7d-d17c4700539f

Installation

- Install Anaconda Distribution.

- Install the necessary Python packages in the environment.

      ./environment_setup.sh vila

- (Optional) If you are an NVIDIA employee with a wandb account, install onelogger and enable it by setting training_args.use_one_logger to True in llava/train/args.py.

      pip install --index-url=https://sc-hw-artf.nvidia.com/artifactory/api/pypi/hwinf-mlwfo-pypi/simple --upgrade one-logger-utils

- Activate a conda environment.

      conda activate vila
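After the steps above, a quick sanity check can confirm that the expected environment is active. The sketch below relies on `CONDA_DEFAULT_ENV`, which conda sets when an environment is activated. That the `vila-eval` and `vila-infer` commands end up on `PATH` after setup is an assumption here, and `check_environment` is a hypothetical helper, not part of VILA.

```python
import os
import shutil


def check_environment(expected_env="vila",
                      commands=("vila-eval", "vila-infer"),
                      environ=None,
                      which=shutil.which):
    """Return a list of human-readable problems; an empty list means none found."""
    environ = os.environ if environ is None else environ
    problems = []

    # `conda activate` exports the active environment name in CONDA_DEFAULT_ENV.
    active = environ.get("CONDA_DEFAULT_ENV")
    if active != expected_env:
        problems.append(f"expected conda env {expected_env!r}, found {active!r}")

    # Assumption: the setup script installs these entry points on PATH.
    for command in commands:
        if which(command) is None:
            problems.append(f"command not found on PATH: {command}")
    return problems


if __name__ == "__main__":
    for problem in check_environment():
        print("WARNING:", problem)
```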

Training

VILA training contains three steps. For specific hyperparameters, please check out the scripts/NVILA-Lite folder:

Step-1: Alignment

We use the LLaVA-CC3M-Pretrain-595K dataset to align the textual and visual modalities.

The stage 1 script takes two parameters and can run on a single 8xA100 node.

bash scripts/NVILA-Lite/align.sh Efficient-Large-Model/Qwen2-VL-7B-Instruct <alias to data>

and the trained models will be saved to runs/train/nvila-8b-align.

Step-1.5:

bash scripts/NVILA-Lite/stage15.sh runs/train/nvila-8b-align/model <alias to data>

and the trained models will be saved to runs/train/nvila-8b-align-1.5.

Step-2: Pretraining

We use the MMC4 and Coyo datasets to train the VLM with interleaved image-text pairs.

bash scripts/NVILA-Lite/pretrain.sh runs/train/nvila-8b-align-1.5 <alias to data>

and the trained models will be saved to runs/train/nvila-8b-pretraining.

Step-3: Supervised fine-tuning

This is the last stage of VILA training, in which we tune the model to follow multimodal instructions on a subset of M3IT, FLAN and ShareGPT4V. This stage runs on an 8xA100 node.

bash scripts/NVILA-Lite/sft.sh runs/train/nvila-8b-pretraining <alias to data>

and the trained models will be saved to runs/train/nvila-8b-SFT.
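The stages above chain together: each script takes the previous stage's output directory as its first argument. The following sketch only assembles and prints the four commands as a dry run. The script paths and checkpoint paths are taken from the steps above, while `build_commands` and the alias names in the example are hypothetical stand-ins for whatever goes in the `<alias to data>` position.

```python
import shlex

# (script, input checkpoint) per stage, as listed in the steps above.
STAGES = [
    ("scripts/NVILA-Lite/align.sh", "Efficient-Large-Model/Qwen2-VL-7B-Instruct"),
    ("scripts/NVILA-Lite/stage15.sh", "runs/train/nvila-8b-align/model"),
    ("scripts/NVILA-Lite/pretrain.sh", "runs/train/nvila-8b-align-1.5"),
    ("scripts/NVILA-Lite/sft.sh", "runs/train/nvila-8b-pretraining"),
]


def build_commands(data_aliases):
    """Pair each stage with its data alias and return one argv list per stage."""
    if len(data_aliases) != len(STAGES):
        raise ValueError(f"expected {len(STAGES)} data aliases, got {len(data_aliases)}")
    return [
        ["bash", script, checkpoint, alias]
        for (script, checkpoint), alias in zip(STAGES, data_aliases)
    ]


if __name__ == "__main__":
    # Hypothetical alias names, for illustration only.
    aliases = ["align_data", "stage15_data", "pretrain_data", "sft_data"]
    for argv in build_commands(aliases):
        print(shlex.join(argv))  # dry run: print instead of executing
```

To actually launch the stages in order, each argv list could be passed to `subprocess.run(argv, check=True)` so that a failing stage stops the chain.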

Evaluations

We have introduced the vila-eval command to simplify evaluation. Once the data is prepared, the evaluation can be launched via

    MODEL_NAME=NVILA-15B
    MODEL_ID=Efficient-Large-Model/$MODEL_NAME

    huggingface-cli download $MODEL_ID

    vila-eval \
        --model-name $MODEL_NAME \
        --model-path $MODEL_ID \
        --conv-mode auto \
        --tags-include local

It will launch all evaluations and return a summarized result.
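For scripted use, the same two commands can be assembled per model name. This is a minimal sketch that mirrors the shell variables above; the flags are the ones shown in the command, and `eval_commands` is a hypothetical helper rather than a VILA API.

```python
import shlex


def eval_commands(model_name, org="Efficient-Large-Model"):
    """Return the (download, evaluate) argv lists for one model."""
    model_id = f"{org}/{model_name}"  # mirrors MODEL_ID=Efficient-Large-Model/$MODEL_NAME
    download = ["huggingface-cli", "download", model_id]
    evaluate = [
        "vila-eval",
        "--model-name", model_name,
        "--model-path", model_id,
        "--conv-mode", "auto",
        "--tags-include", "local",
    ]
    return download, evaluate


if __name__ == "__main__":
    for argv in eval_commands("NVILA-15B"):
        print(shlex.join(argv))  # dry run: print instead of executing
```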

Inference

We provide vila-infer for quick inference with user prompts and images.

    # image description
    vila-infer \
        --model-path Efficient-Large-Model/NVILA-15B \
        --conv-mode auto \
        --text "Please describe the image" \
        --media demo_images/demo_img.png

    # video description
    vila-infer \
        --model-path Efficient-Large-Model/NVILA-15B \
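To describe several images in one go, the image-description command can be generated once per file. The sketch below uses only the flags shown in the image example above and prints the commands as a dry run; `infer_commands` is a hypothetical helper, and restricting the search to `.png` files is an arbitrary choice for illustration.

```python
import shlex
from pathlib import Path


def infer_commands(image_dir,
                   model_path="Efficient-Large-Model/NVILA-15B",
                   text="Please describe the image"):
    """Yield one vila-infer argv per .png file in image_dir, in sorted order."""
    for image in sorted(Path(image_dir).glob("*.png")):
        yield [
            "vila-infer",
            "--model-path", model_path,
            "--conv-mode", "auto",
            "--text", text,
            "--media", str(image),
        ]


if __name__ == "__main__":
    for argv in infer_commands("demo_images"):
        print(shlex.join(argv))  # dry run: print instead of executing
```

`shlex.join` quotes the prompt text, so the printed lines can be pasted into a shell unchanged.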
