G2p

Grapheme-to-Phoneme transductions that preserve input and output indices, and support cross-lingual g2p!

Install / Use

/learn @NRC-ILT/G2pREADME

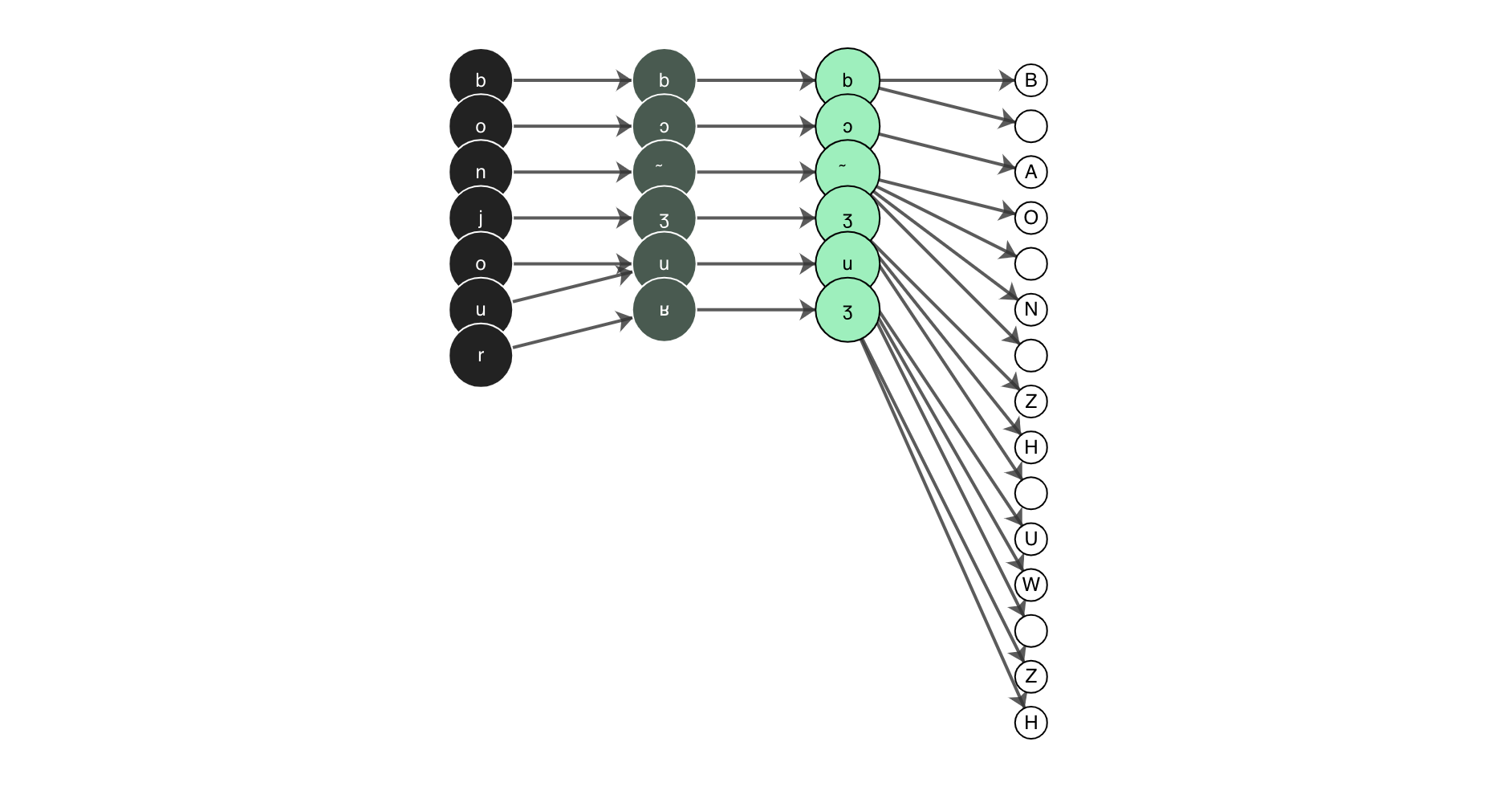

Gᵢ2Pᵢ

Grapheme-to-Phoneme transformations that preserve input and output indices!

This library is for handling arbitrary conversions between input and output segments while preserving indices.

Table of Contents

See also:

Background

The initial version of this package was developed by Patrick Littell and was developed in order to allow for g2p from community orthographies to IPA and back again in ReadAlong-Studio. We decided to then pull out the g2p mechanism from Convertextract which allows transducer relations to be declared in CSV files, and turn it into its own library - here it is! For an in-depth series on the motivation and how to use this tool, have a look at this 7-part series on the Mother Tongues Blog, or for a more technical overview, have a look at this paper.

Install

The best thing to do is install with pip pip install g2p or pip install "g2p[neural]" to install the optional neural dependencies.

This command will install the latest release published on PyPI g2p releases.

You can also use hatch (see hatch installation instructions) to set up an isolated local development environment, which may be useful if you wish to contribute new mappings:

$ git clone https://github.com/nrc-ilt/g2p.git

$ cd g2p

$ hatch shell

You can also simply install an "editable" version with pip (but it

is recommended to do this in a virtual

environment or a conda

environment):

$ git clone https://github.com/nrc-ilt/g2p.git

$ cd g2p

$ pip install -e .

Usage

The easiest way to create a transducer is to use the g2p.make_g2p function.

To use it, first import the function:

from g2p import make_g2p

Then, call it with an argument for in_lang and out_lang. Both must be strings equal to the name of a particular mapping.

>>> transducer = make_g2p('dan', 'eng-arpabet')

>>> transducer('hej').output_string

'HH EH Y'

There must be a valid path between the in_lang and out_lang in order for this to work. If you've edited a mapping or added a custom mapping, you must update g2p to include it: g2p update

Writing mapping files

Mapping files are written as either CSV or JSON files.

CSV

CSV files write each new rule as a new line and consist of at least two columns, and up to four. The first column is required and corresponds to the rule's input. The second column is also required and corresponds to the rule's output. The third column is optional and corresponds to the context before the rule input. The fourth column is also optional and corresponds to the context after the rule input. For example:

- This mapping describes two rules; a -> b and c -> d.

a,b

c,d

- This mapping describes two rules; a -> b / c _ d<sup id="a1">1</sup> and a -> e

a,b,c,d

a,e

The g2p studio exports its rules to CSV format.

JSON

JSON files are written as an array of objects where each object corresponds to a new rule. The following two examples illustrate how the examples from the CSV section above would be written in JSON:

- This mapping describes two rules; a -> b and c -> d.

[

{

"in": "a",

"out": "b"

},

{

"in": "c",

"out": "d"

}

]

- This mapping describes two rules; a -> b / c _ d<sup id="a1">1</sup> and a -> e

[

{

"in": "a",

"out": "b",

"context_before": "c",

"context_after": "d"

},

{

"in": "a",

"out": "e"

}

]

Python

You can also write your rules programatically in Python. For example:

from g2p.mappings import Mapping, Rule

from g2p.transducer import Transducer

mapping = Mapping(rules=[

Rule(rule_input="a", rule_output="b", context_before="c", context_after="d"),

Rule(rule_input="a", rule_output="e")

])

transducer = Transducer(mapping)

transducer('cad') # returns "cbd"

Neural G2P models

The main functionality of this library is to provide lightweight, index-preserving g2p for many languages. However, we also support some neural g2p models. These can be accessed by first installing the necessary neural packages with pip install "g2p[neural]". Then, when creating a g2p object, add the neural flag like so:

>>> neural_transducer = make_g2p('str', 'str-ipa', neural=True)

>>> transducer('SENĆOŦEN').output_string

'sənt͡ʃáθən'

Note: neural models are not yet accessible via our API or the G2P Studio.

CLI

update

If you edit or add new mappings to the g2p.mappings.langs folder, you need to update g2p. You do this by running g2p update

convert

If you want to convert a string on the command line, you can use g2p convert <input_text> <in_lang> <out_lang>

Ex. g2p convert hej dan eng-arpabet would produce HH EH Y

If you have written your own mapping that is not included in the standard g2p library, you can point to its configuration file using the --config flag, as in g2p convert <input_text> <in_lang> <out_lang> --config path/to/config.yml. This will add the mappings defined in your configuration to the existing g2p network, so be careful to avoid namespace errors.

generate-mapping

If your language has a mapping to IPA and you want to generate a mapping between that and the English IPA mapping, you can use g2p generate-mapping <in_lang> --ipa. Remember to run g2p update before so that it has the latest mappings for your language.

Ex. g2p generate-mapping dan --ipa will produce a mapping from dan-ipa to eng-ipa. You must also run g2p update afterwards to update g2p. The resulting mapping will be added to the folder in g2p.mappings.langs.generated

Note: if your language goes through an intermediate representation, e.g., lang -> lang-equiv -> lang-ipa, specify both the <in_lang> and <out_lang> of your final IPA mapping to g2p generate-mapping. E.g., to generate crl-ipa -> eng-ipa, you would run g2p generate-mapping --ipa crl-equiv crl-ipa.

g2p workflow diagram

The interactions between g2p update and g2p generate-mapping are not fully intuitive, so this diagram should help understand what's going on:

Text DB: this is the textual database of g2p conversion rules created by contributors. It consists of these files:

- g2p/mappings/langs/*/*.csv

- g2p/mappings/langs/*/*.json

- g2p/mappings/langs/*/*.yaml

Gen DB: this is the part of the textual database that is generated when running the g2p generate-mapping command:

- g2p/mappings/generated/*

Compiled DB: this contains the same info as Text DB + Gen DB, but in a format optimized for fast reading by the machine. This is what any program using g2p reads: g2p convert, readalongs align, convertextract, and also g2p generate-mapping. It consists of these files:

- g2p/mappings/langs/langs.json.gz

- g2p/mappings/langs/network.json.gz

- g2p/static/languages-network.json

So, when you write a new g2p mapping for a language, say lll, and you want to be able to convert text from lll to eng-ipa or eng-arpabet, you need to do the following:

- Write the mapping from

llltolll-ipain g2p/mappings/langs/lll/. You've just updated Text DB. - Run

g2p updateto regenerate Compiled DB from the current Text DB and Gen DB, i.e., to incorporate your new mapping rules. - Run

g2p generate-mapping --ipa lllto generate g2p/mappings/langs/generated/lll-ipa_to_eng-ipa.json. This is not based on what you wrote directly, but rather on what's in Generated DB. - Run

g2p updateagain.g2p generate-mappingupdates Gen DB only, so what gets written there will only be reflected in Compiled DB when you rung2p updateonce more.

Once you have the Compiled DB, it is then possible to use the g2p convert command, create time-aligned audiobooks with readalongs align, or convert files with the convertextract library.

Studio

You can also run the g2p Studio which is a web interface for

creating custom lookup tables to be used with g2p. To run the `g2p