PolyphonicPianoTranscription

Recurrent Neural Network for generating piano MIDI-files from audio (MP3, WAV, etc.)

Automatic Polyphonic Piano Transcription with Recurrent Neural Networks

IPython notebook templates for training a neural network and then using it to generate piano MIDI files from audio (MP3, WAV, etc.). The accuracy depends on the complexity of the piece and is higher for solo piano recordings.

Update (2021 May)

Magenta also provides another pre-trained model in TensorFlow Lite format. It can be downloaded here: https://storage.googleapis.com/magentadata/models/onsets_frames_transcription/tflite/onsets_frames_wavinput.tflite or via the link in the GitHub repository: https://github.com/magenta/magenta/tree/master/magenta/models/onsets_frames_transcription/realtime

It takes approximately 1 second of raw audio as input (rather than 20 seconds of mel spectrogram). There is an example of using the model in my fifth IPython template ("5 TF Lite Inference.ipynb"). This model is very fast on my Android device, and its accuracy is still reasonable. To see my app for Android 4.4 KitKat (API level 19) or higher, click on the following screenshot:

or get it on Google Play:

The previous full TensorFlow model (not TensorFlow Lite) is used in my app for Windows 7 or later. To see it, click on the following screenshot:

Update (2019 June)

There is Google's model called "Onsets & Frames" with very good accuracy, see the following blog post: https://magenta.tensorflow.org/onsets-frames or GitHub-repository: https://github.com/magenta/magenta/tree/master/magenta/models/onsets_frames_transcription

Just for fun, I copied those model parameters as they are and trained the model in the second IPython template. My resulting accuracy was slightly lower, probably because of the reduced batch size. So, in the third IPython template, instead of training my own model, I copied the weights from Google's pre-trained TensorFlow checkpoint: https://storage.googleapis.com/magentadata/models/onsets_frames_transcription/maestro_checkpoint.zip

Troubleshooting

If the Python "librosa" module cannot open any audio format except WAV, download the FFmpeg codec:

Choose your Windows architecture and static linking there. Do not forget to add the folder containing your downloaded "ffmpeg.exe" to the "PATH" environment variable. Or see more detailed instructions here:

https://www.wikihow.com/Install-FFmpeg-on-Windows
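A quick way to confirm that FFmpeg is reachable from Python is to look it up on the PATH. The sketch below uses only the standard library; the helper name `ffmpeg_on_path` is hypothetical and is not part of the notebooks.

```python
import shutil


def ffmpeg_on_path(executable="ffmpeg"):
    """Return the full path of the FFmpeg binary, or None if it is not on PATH."""
    return shutil.which(executable)


if __name__ == "__main__":
    location = ffmpeg_on_path()
    # If nothing is found, the PATH change has probably not been picked up yet.
    print(location or "ffmpeg not found: add its folder to PATH and restart Python")
```

If the function returns None right after editing PATH, restarting the notebook kernel (or the terminal) is usually needed before the change becomes visible.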

Dataset: MAESTRO (MIDI and Audio Edited for Synchronous TRacks and Organization)

Downloaded from Google Magenta: https://magenta.tensorflow.org/datasets/maestro#download

Warning

For some samples, the last MIDI note onsets fall slightly beyond the end of the corresponding WAV audio.
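One possible way to handle such notes is to drop onsets that lie past the end of the audio and to clip the remaining offsets. This is only a sketch under the assumption that notes are available as (pitch, onset, offset) tuples in seconds; the function name `drop_late_notes` is hypothetical, and the notebooks may treat these samples differently.

```python
def drop_late_notes(notes, audio_duration):
    """Remove notes whose onset is past the audio and clip the remaining offsets.

    notes: list of (pitch, onset, offset) tuples, times in seconds.
    audio_duration: length of the WAV audio in seconds.
    """
    kept = []
    for pitch, onset, offset in notes:
        if onset >= audio_duration:
            continue  # there is no audio for this onset
        kept.append((pitch, onset, min(offset, audio_duration)))
    return kept
```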

Not used datasets

Not used: MAPS (from Fichiers - Aix-Marseille Université)

https://amubox.univ-amu.fr/index.php/s/iNG0xc5Td1Nv4rR

Issue 1 (small dataset and not as natural)

From https://arxiv.org/pdf/1810.12247.pdf, Page 4, Section 3 "Dataset":

MAPS ... “performances” are not as natural as the MAESTRO performances captured from live performances. In addition, synthesized audio makes up a large fraction of the MAPS dataset.

Issue 2 (skipped notes)

From https://arxiv.org/pdf/1710.11153.pdf, Page 6, Section 6 "Need for more data, more rigorous evaluation":

In addition to the small number of the MAPS Disklavier recordings, we have also noticed several cases where the Disklavier appears to skip some notes played at low velocity. For example, at the beginning of the Beethoven Sonata No. 9, 2nd movement, several Ab notes played with MIDI velocities in the mid-20s are clearly missing from the audio...

Issue 3 (two chords instead of one)

There is an issue with the "ENSTDkAm" and "ENSTDkCl" datasets, subtypes "RAND" and "UCHO". Each WAV file is supposed to contain exactly one chord. But sometimes the chord is split into two onset times in the corresponding MIDI and TXT files, and those two onset times fall into two consecutive time-frames of the CQT transform (or mel transform).
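A split chord can be detected by converting each onset time to a frame index and checking whether all notes of the chord share one frame. The sketch below assumes a 16 kHz sample rate and a hop length of 512 samples (the values quoted later for the mel transform); both the constants and the function names are illustrative only.

```python
SAMPLE_RATE = 16000  # assumed, matches the mel-transform settings
HOP_LENGTH = 512     # assumed, matches the mel-transform settings


def onset_frame(onset_seconds, sr=SAMPLE_RATE, hop=HOP_LENGTH):
    """Index of the time-frame that contains the given onset."""
    return int(onset_seconds * sr / hop)


def chord_is_split(onsets):
    """True if the onsets of one chord land in more than one time-frame."""
    return len({onset_frame(t) for t in onsets}) > 1
```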

Not used: MusicNET (from University of Washington Computer Science & Engineering)

https://homes.cs.washington.edu/~thickstn/musicnet.html

From https://arxiv.org/pdf/1810.12247.pdf, Page 4, Section 3 "Dataset":

As discussed in Hawthorne et al. (2018), the alignment between audio and score is not fully accurate. One advantage of MusicNet is that it contains instruments other than piano ... and a wider variety of recording environments.

1 Datasets Preparation

Train/Test Split

From https://arxiv.org/pdf/1810.12247.pdf, Page 4, Section 3.2 "Dataset Splitting":

No composition should appear in more than one split.... proportions should be true globally and also within each composer. Maintaining these proportions is not always possible because some composers have too few compositions in the dataset. The validation and test splits should contain a variety of compositions. Extremely popular compositions performed by many performers should be placed in the training split.... we recommend using the splits which we have provided.
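The provided splits can be read from the metadata CSV that ships with MAESTRO. The sketch below assumes the CSV has the columns `canonical_composer`, `canonical_title`, `split` and `audio_filename`; the sample rows are made-up placeholders, not real dataset entries, and the function names are hypothetical.

```python
import csv
import io
from collections import defaultdict


def group_by_split(csv_file):
    """Map each split name (train / validation / test) to its audio files."""
    splits = defaultdict(list)
    for row in csv.DictReader(csv_file):
        splits[row["split"]].append(row["audio_filename"])
    return dict(splits)


def leaked_compositions(csv_file):
    """Return (composer, title) pairs that appear in more than one split."""
    seen = defaultdict(set)
    for row in csv.DictReader(csv_file):
        seen[(row["canonical_composer"], row["canonical_title"])].add(row["split"])
    return {key for key, names in seen.items() if len(names) > 1}


# Placeholder rows for illustration only.
SAMPLE = """canonical_composer,canonical_title,split,audio_filename
Composer A,Piece 1,train,a1.wav
Composer A,Piece 1,train,a1_other.wav
Composer B,Piece 2,validation,b2.wav
Composer C,Piece 3,test,c3.wav
"""

if __name__ == "__main__":
    print(group_by_split(io.StringIO(SAMPLE)))
    print(leaked_compositions(io.StringIO(SAMPLE)))
```

With the real file, `open(path, newline="")` would replace `io.StringIO(SAMPLE)`. An empty result from `leaked_compositions` means that no composition is shared between splits.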

Mel-transform parameters

I do not know why the Constant-Q transform is not used, but from https://arxiv.org/pdf/1710.11153.pdf, Page 2, Section 3 "Model Configuration":

We use librosa ... to compute the same input data representation of mel-scaled spectrograms with log amplitude of the input raw audio with 229 logarithmically-spaced frequency bins, a hop length of 512, an FFT window of 2048, and a sample rate of 16kHz.

From https://arxiv.org/pdf/1810.12247.pdf, Page 5, Section 4 "Piano Transcription":

switched to HTK frequency spacing (Young et al., 2006) for the mel-frequency spectrogram input.
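The quoted parameters map onto librosa roughly as follows. This is a sketch rather than the exact call used in the notebooks: the parameter values come from the quotes above, fmin and fmax are the values discussed in this section, and the function name `log_mel_spectrogram` is hypothetical.

```python
# Values taken from the quoted papers; htk=True selects HTK frequency spacing.
MEL_PARAMS = dict(
    sr=16000,
    n_fft=2048,
    hop_length=512,
    n_mels=229,
    fmin=30.0,
    fmax=8000.0,
    htk=True,
)


def log_mel_spectrogram(audio):
    """Return a log-amplitude mel spectrogram of shape (229, n_frames).

    audio: 1-D float array of samples at 16 kHz, e.g. from
    librosa.load(path, sr=16000).
    """
    import librosa  # imported lazily so the constants can be reused without it

    mel = librosa.feature.melspectrogram(y=audio, **MEL_PARAMS)
    return librosa.power_to_db(mel)  # ref=1.0 by default
```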

Mel-frequency values are strange:

- fmin = 30 Hz, but the lowest piano key (the "A" note at the bottom of the keyboard) is 27.5 Hz.

- fmax = 8 000 Hz (the librosa default of half the sample rate), which is much higher than the highest piano key, the "C" note of the 8th octave (4 186 Hz). So the mel spectrogram will contain many high harmonics, and maybe this helps the CNN model correctly identify notes in the highest octaves.

Maybe (I do not know) the mel-scaled spectrogram is used instead of the Constant-Q transform because CQT produces an equal number of bins for each note, while mel frequencies are spaced so that higher notes get more nearby bins. So the mel spectrogram provides more input data for the higher octaves, and the CNN model can transcribe higher notes with better accuracy. This could help with the many annoying false-positive notes in high octaves.
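The uneven spacing can be checked by counting how many mel bin centres fall into each piano octave. The sketch below uses the HTK mel formula and assumes 229 bins between 30 Hz and 8 000 Hz, with centres evenly spaced on the mel scale; the helper names are hypothetical.

```python
import math


def hz_to_mel_htk(f):
    """HTK mel scale: 2595 * log10(1 + f / 700)."""
    return 2595.0 * math.log10(1.0 + f / 700.0)


def mel_to_hz_htk(m):
    """Inverse of hz_to_mel_htk."""
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)


def mel_centres(n_mels=229, fmin=30.0, fmax=8000.0):
    """Centre frequencies (Hz) of n_mels bins evenly spaced on the mel scale."""
    lo, hi = hz_to_mel_htk(fmin), hz_to_mel_htk(fmax)
    step = (hi - lo) / (n_mels + 1)
    return [mel_to_hz_htk(lo + step * i) for i in range(1, n_mels + 1)]


def bins_per_octave(centres, lowest=27.5, octaves=8):
    """Count bin centres in each octave, starting from the lowest piano key."""
    counts = []
    for k in range(octaves):
        f_lo, f_hi = lowest * 2 ** k, lowest * 2 ** (k + 1)
        counts.append(sum(f_lo <= f < f_hi for f in centres))
    return counts


if __name__ == "__main__":
    print(bins_per_octave(mel_centres()))
```

The counts grow from the lowest octave to the highest, which is the opposite of a CQT with a fixed number of bins per octave.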

Additional non-linear logarithmic scaling

librosa.power_to_db with ref=1 (default) --> the mel spectrogram in decibels is approximately in the range [-40 ... +40]
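For a single value, the decibel conversion is 10 * log10(power / ref). The scalar sketch below mirrors that formula with ref=1 and a small floor `amin` to avoid log(0); it leaves out the `top_db` clipping that librosa also applies, so it is an illustration rather than a replacement.

```python
import math


def power_to_db_scalar(power, ref=1.0, amin=1e-10):
    """10 * log10(max(amin, power) / max(amin, ref)) for one power value."""
    return 10.0 * math.log10(max(amin, power)) - 10.0 * math.log10(max(amin, ref))
```

With ref=1, a range of [-40 ... +40] dB corresponds to mel power values between roughly 1e-4 and 1e4.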

Note message durations: 2 consecutive frames

From https://arxiv.org/pdf/1710.11153.pdf:

Page 2, Section 2 "Dataset and Metrics":

... we first translate “sustain pedal” control changes into longer note durations. If a note is active when sustain goes on, that note will be extended until either sustain goes off or the same note is played again.

Page 3, Section 3, "Model Configuration":

... all onsets will end up spanning exactly two frames. Labeling only the frame that contains the exact beginning of the onset does not work as well because of possible mis-alignments of the audio and labels. We experimented with requiring a minimum amount of time a note had to be present in a frame before it was labeled, but found that the optimum value was to include any presence.
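Both labeling rules can be sketched in a few lines. The code below is a simplification, not the paper's implementation: it extends any note that is released while the pedal is down, and it assumes the onset label is one frame long (512 / 16 000 s = 32 ms), so that it touches the frame containing the onset and the next one. Function names and the (pitch, start, end) tuple layout are hypothetical.

```python
SAMPLE_RATE = 16000
HOP_LENGTH = 512


def extend_with_sustain(notes, pedal_intervals):
    """Extend notes that are released while the sustain pedal is down.

    notes: list of (pitch, start, end) in seconds.
    pedal_intervals: list of (pedal_on, pedal_off) in seconds.
    """
    notes = sorted(notes, key=lambda n: n[1])
    extended = []
    for i, (pitch, start, end) in enumerate(notes):
        for pedal_on, pedal_off in pedal_intervals:
            if pedal_on <= end < pedal_off:
                # Stop at the pedal release or at the next onset of the same pitch.
                replays = [s for p, s, _ in notes[i + 1:]
                           if p == pitch and end <= s < pedal_off]
                end = min([pedal_off] + replays)
                break
        extended.append((pitch, start, end))
    return extended


def onset_frames(onset_seconds, sr=SAMPLE_RATE, hop=HOP_LENGTH):
    """Two consecutive frame indices labeled as the onset of a note."""
    first = int(onset_seconds * sr / hop)
    return (first, first + 1)
```

For example, an onset at 1.0 s falls at 1.0 * 16 000 / 512 = 31.25 frames, so frames 31 and 32 are labeled.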

Number of time-frames: 625 + 1 (20 seconds at sample rate of 16 kHz)

From https://arxiv.org/pdf/1710.11153.pdf, Page 2, Section 3 "Model Configuration":

... we split the training audio into smaller files. However, when we do this splitting we do not want to cut the audio during notes because the onset detector would miss an onset while the frame detector would still need to predict the note’s presence. We found that 20 second splits allowed us to achieve a reasonable batch size during training of at least 8, while also forcing splits in only a small number of places where notes are active.
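The "625 + 1" frame count follows from the hop length: 20 s at 16 kHz is 320 000 samples, and 320 000 / 512 = 625. The extra frame appears when the spectrogram is computed with centered frames, which is the librosa default. A small sketch of the arithmetic (the function name is hypothetical):

```python
SAMPLE_RATE = 16000
HOP_LENGTH = 512


def n_frames(seconds, sr=SAMPLE_RATE, hop=HOP_LENGTH):
    """Number of frames of a centered STFT: 1 + n_samples // hop."""
    n_samples = int(round(seconds * sr))
    return 1 + n_samples // hop


if __name__ == "__main__":
    print(n_frames(20))          # frames in one 20-second split
    print(HOP_LENGTH / SAMPLE_RATE)  # duration of one frame in seconds
```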

2 Training and Validation

My previous model performed well on the MAPS dataset, but its accuracy was much lower on the new MAESTRO dataset, which is larger, more natural, and more complicated. It turned out that a simple fully-connected network produced a similar result. This probably makes sense, as written in https://arxiv.org/pdf/1810.12247.pdf, Page 5, Section 4 "Piano Transcription":

In general, we found that the best ways to get higher performance with the larger dataset were to make the model larger and simpler.

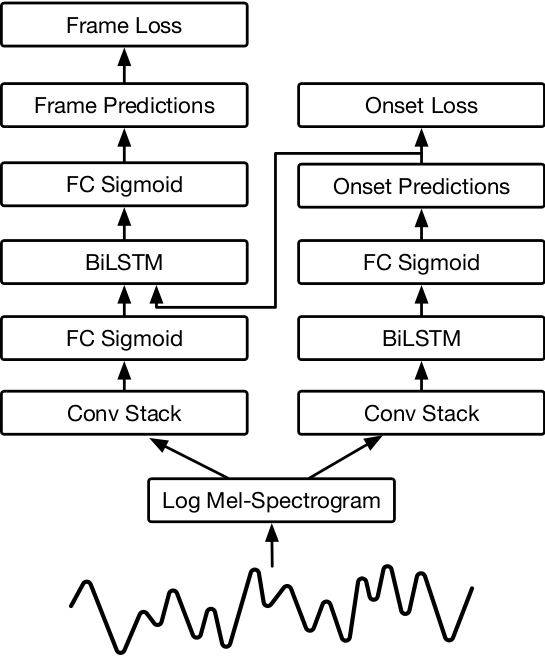

So, I based my model on Google's "Onsets and Frames: Dual-Objective Piano Transcription". From https://arxiv.org/pdf/1710.11153.pdf, Page 3, Figure 1 "Diagram of Network Architecture":

I copied those model parameters as they are, except:

- Batch normalization is used wherever possible (everywhere except LSTM layer and the last fully-connected layer).

- Dropout is not required at all, because there is no sign of overfitting.
